Elevating AI Gateway Security and Control for LLM Access with the Power of Agent ID

- Akindu Himan

- Engineering Intern, WSO2

- Ayesha Dissanayaka

- Associate Director/Architect, WSO2

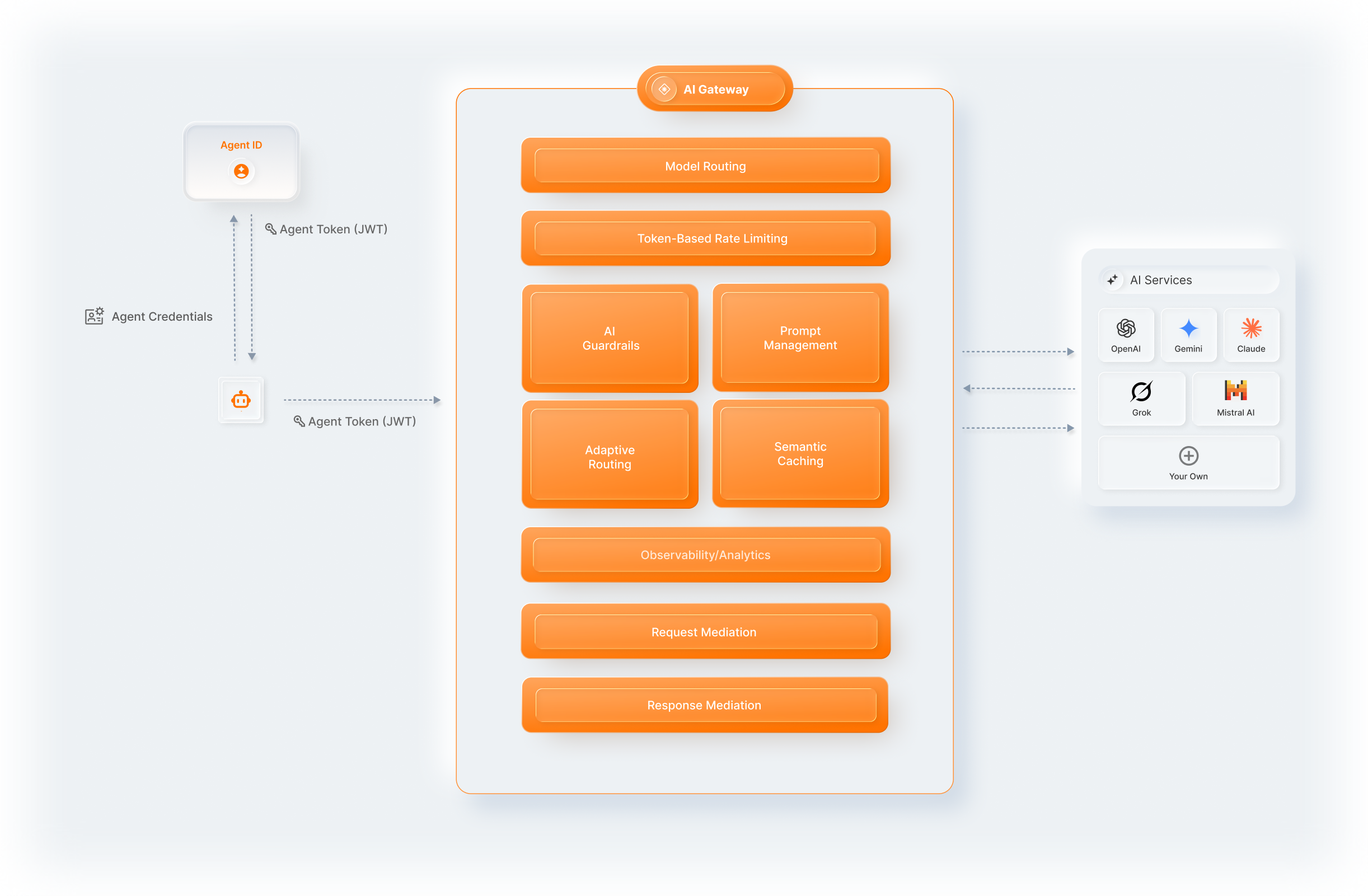

The rapid proliferation of Artificial Intelligence (AI) agents and Large Language Models (LLMs) is transforming how businesses operate. From automating customer service to generating complex reports, AI agents are becoming indispensable. However, this explosion of AI-driven interactions brings with it significant challenges in management, security, and governance. While AI gateways (a control layer that sits between AI agents and AI services, handling routing, policy enforcement, security, and observability for AI traffic) offer a crucial first line of defense, their full potential remains untapped without a fundamental piece of information: the identity of the AI agent making the request.

Current AI gateways are adept at managing LLM traffic, applying generic rate limits, and offering observability. Yet they often cannot provide granular control by themselves because they cannot distinguish one AI agent from another. In this article, we discuss how assigning unique identities to AI agents doesn't just improve how gateways control LLM traffic; it fundamentally amplifies their value, enabling more intelligent, agent-specific guardrails and controls.

The evolving landscape of AI interactions

The digital realm is increasingly populated by AI agents (specialized programs designed to perform specific tasks, often interacting directly with users or other systems). These agents range from sophisticated virtual assistants to backend data processors, and their numbers are growing rapidly within enterprises. At the heart of many of these agents lies the LLM, providing the intelligence and conversational capabilities that drive their functions. The sheer volume and complexity of LLM calls generated by these agents necessitate robust governance to ensure security, manage costs, maintain compliance, optimize performance, and uphold responsible AI principles.

The foundational value of AI gateways for LLM access

Just as API gateways manage traditional API traffic, AI gateways are emerging as specialized interception points for AI traffic, controlling agent-to-LLM interactions, as well as agent-to-tool invocations. They provide essential services for governing LLM calls, including:

- Traffic routing and load balancing: Directing requests to the appropriate LLM instances.

- Rate limiting and quota management: Preventing system overload and ensuring fair usage.

- Observability and analytics: Monitoring performance and usage patterns.

- Basic security: Authenticating applications or human users making requests.

- Initial prompt injection prevention: Basic filtering of malicious inputs.

- AI trust guardrails: Detecting issues such as toxicity or hallucination in LLM responses.

Why identity matters

Standard AI gateways are adept at routing traffic and applying basic rate limits. However, they lack the granular context required for more sophisticated operations. Without agent identity, you cannot efficiently answer critical questions such as:

- Which agent is responsible for this $5,000 LLM bill?

- Why is a "Read-Only" research agent attempting to call a "Delete" function?

- Which specific agent leaked sensitive data in a prompt?
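Once every logged LLM call carries an agent identifier, the first of these questions reduces to a simple aggregation. The following is a minimal sketch in Python, not a description of how any WSO2 product stores usage data: the `UsageRecord` schema, the agent and model names, and the prices are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One LLM call as a gateway might log it (hypothetical schema)."""
    agent_id: str
    model: str
    prompt_tokens: int
    completion_tokens: int


# Illustrative prices in USD per 1,000 tokens; not real vendor pricing.
PRICE_PER_1K = {
    "large-model": {"prompt": 0.01, "completion": 0.03},
    "small-model": {"prompt": 0.001, "completion": 0.002},
}


def cost_by_agent(records):
    """Sum the estimated spend of each agent across all logged calls."""
    totals = defaultdict(float)
    for record in records:
        price = PRICE_PER_1K[record.model]
        totals[record.agent_id] += (
            record.prompt_tokens / 1000 * price["prompt"]
            + record.completion_tokens / 1000 * price["completion"]
        )
    return dict(totals)


records = [
    UsageRecord("support-agent-01", "large-model", 2000, 1000),
    UsageRecord("research-agent-07", "small-model", 4000, 1000),
    UsageRecord("support-agent-01", "large-model", 1000, 0),
]
totals = cost_by_agent(records)
```

Without the `agent_id` field, the same log could only report a single total for the whole application, which is the gap the questions above point to.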

Introducing AI agent identity

This is where AI agent identity comes into play. Imagine giving each AI agent a unique digital fingerprint, a distinct identifier, and associated credentials. This unique identity, such as that provided by WSO2 Agent ID, transforms the AI gateway from a generic traffic cop into an intelligent security and governance orchestrator.

When an AI agent makes a request, it presents its unique identity. The AI gateway intercepts this request, identifies the specific agent, and then applies policies tailored precisely to that agent's role, permissions, and operational context. This shift moves beyond generic traffic rules, enabling unprecedented levels of control and accountability.
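To make "policies tailored to that agent" concrete, here is a small Python sketch of a gateway choosing a policy from the roles asserted for an agent. It is an illustration under assumptions: the `AgentPolicy` fields, the policy table, the model names, and the limits are hypothetical, and the only name taken from this article is the example role "customer-service-agent".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Controls a gateway could apply to one class of agent (hypothetical)."""
    allowed_models: frozenset
    requests_per_minute: int
    redact_pii: bool


# Hypothetical role-to-policy table; limits and model names are illustrative.
POLICIES = {
    "customer-service-agent": AgentPolicy(frozenset({"small-model"}), 60, True),
    "research-agent": AgentPolicy(
        frozenset({"small-model", "large-model"}), 600, False
    ),
}

# Agents with no recognized role fall back to a policy that permits nothing.
DENY_ALL = AgentPolicy(frozenset(), 0, True)


def resolve_policy(claims):
    """Return the policy for the first recognized role in the agent's claims."""
    for role in claims.get("roles", []):
        if role in POLICIES:
            return POLICIES[role]
    return DENY_ALL


def is_model_allowed(claims, model):
    """Check whether the identified agent may call the requested model."""
    return model in resolve_policy(claims).allowed_models
```

Defaulting to `DENY_ALL` is a deliberate choice in this sketch: an agent whose identity cannot be mapped to a known role gets no access rather than a generic allowance.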

Amplifying AI gateway capabilities with agent identity

The ability to identify individual AI agents unlocks a cascade of benefits, significantly amplifying the capabilities of any AI gateway:

- Enhanced security:

  - Agent-specific access control: Restrict specific agents to particular LLMs or functionalities, preventing unauthorized access.

  - Fine-grained rate limiting: Apply different rate limits to high-priority vs. low-priority agents, or throttle individual agents exhibiting anomalous behavior.

  - Behavioral monitoring & anomaly detection: Easily flag unusual activity from a specific agent (e.g., an agent suddenly making requests it never made before).

  - Improved threat detection: Trace the source of a prompt-injection attack or data-exfiltration attempt directly to the responsible agent.

- Optimized resource management & cost control:

  - Usage tracking per agent: Accurately attribute LLM costs to specific agents, teams, or departments.

  - Quota management: Enforce strict usage limits for individual agents or groups, preventing budget overruns.

  - Tiered access: Allow critical agents access to premium, more powerful (and expensive) LLMs, while others use more cost-effective alternatives.

- Granular policy enforcement:

  - Contextual guardrails: Apply content filters, moderation rules, or data redaction policies based on an agent's specific purpose or the sensitivity of the data it handles. For instance, a customer service agent might have stricter redaction rules than an internal research agent.

  - Compliance & audit trails: Simplify regulatory compliance by maintaining detailed, agent-specific audit logs of all LLM interactions.

  - "Responsible AI" enforcement: Ensure agents adhere to ethical guidelines and operational boundaries set for their specific functions.

- Constrained tool calling:

  - Identity-based scoping: Even if an LLM suggests an action, the gateway blocks the execution if that specific agent ID isn't authorized for that tool.

  - Dynamic prompt redaction: The gateway can strip "dangerous" tool definitions from the system prompt before it reaches the LLM, removing the possibility of misuse at the source.

  - Zero-trust parameters: Validate the arguments of a tool call, for instance by ensuring a data-export tool only sends files to pre-approved internal IP addresses.

- Improved observability and debugging:

  - Agent-specific logging: Quickly pinpoint performance bottlenecks or errors affecting individual agents.

  - Performance monitoring: Track LLM latency and success rates for each agent, allowing targeted optimizations.
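The three constrained tool calling ideas in the list above (identity-based scoping, removing tool definitions before the LLM sees them, and validating tool arguments) can be sketched in a few lines of Python. This is only an illustration under assumptions: the agent IDs, tool names, grant table, and approved network range are hypothetical, and a real gateway would load them from its policy store.

```python
import ipaddress

# Hypothetical per-agent tool grants.
TOOL_GRANTS = {
    "research-agent-07": {"search_documents"},
    "ops-agent-02": {"search_documents", "export_data"},
}

# Pre-approved internal destinations for the export tool (illustrative range).
APPROVED_EXPORT_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]


def visible_tools(agent_id, tool_definitions):
    """Drop tool definitions the agent is not granted before calling the LLM."""
    granted = TOOL_GRANTS.get(agent_id, set())
    return [tool for tool in tool_definitions if tool["name"] in granted]


def authorize_tool_call(agent_id, tool_name, arguments):
    """Return (allowed, reason) for a tool call proposed by the LLM."""
    # Identity-based scoping: the agent must hold a grant for this tool.
    if tool_name not in TOOL_GRANTS.get(agent_id, set()):
        return False, "tool not granted to this agent"
    # Argument validation: exports may only target approved internal networks.
    if tool_name == "export_data":
        try:
            destination = ipaddress.ip_address(arguments.get("destination", ""))
        except ValueError:
            return False, "destination is not a valid IP address"
        if not any(destination in net for net in APPROVED_EXPORT_NETWORKS):
            return False, "destination is outside the approved networks"
    return True, "ok"
```

For example, `authorize_tool_call("research-agent-07", "export_data", {"destination": "10.1.2.3"})` is refused because that agent holds no grant for the export tool, while the same call from `"ops-agent-02"` is allowed. Filtering with `visible_tools` first and still checking with `authorize_tool_call` afterwards keeps the second check as a backstop if the model proposes a tool it was never shown.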

For a deeper look at how these capabilities are implemented in practice, refer to the WSO2 AI Gateway overview.

Practical implementation: WSO2 AI Gateway and WSO2 Agent ID in action

Let's look at how this can be put into practice with two complementary WSO2 products:

WSO2 Agent ID: The identity layer

WSO2 Agent ID provides the crucial identity layer for AI agents. It allows you to:

- Provision and manage unique identifiers and attributes for each AI agent: WSO2 Agent ID defines and manages unique IDs and comprehensive attributes like assigned roles (e.g., "customer-service-agent") and critical metadata for each agent.

- Issue and manage agent authentication credentials: Securely generate and manage credentials agents use to prove their identity.

- Establish verifiable trust, securely communicating identity and attributes to the AI Gateway: When an agent makes a request, its identity and assigned roles and critical attributes are securely asserted and confirmed. This deep context is immediately available for the gateway to make informed, dynamic policy decisions.

WSO2 AI Gateway: The enforcement point

The WSO2 AI Gateway is specifically designed to manage and secure AI/LLM traffic, providing a robust enforcement point. When combined with WSO2 Agent ID, its capabilities are significantly enhanced.

Here’s how they work together:

- Authentication & token: The AI agent authenticates with WSO2 Agent ID (e.g., WSO2 Identity Server/Asgardeo) to obtain a time-bound JWT access token that includes its roles and attributes.

- Request with JWT: The agent sends its request to the LLM via the WSO2 AI Gateway, presenting the JWT instead of embedded LLM keys.

- Gateway interception: The WSO2 AI Gateway intercepts the request.

- Verification & ingestion: The gateway validates the JWT, confirming the agent's identity and extracting (ingesting) its roles/attributes for policy decisions.

- Policy enforcement: The gateway enforces agent-specific policies (Routing, Rate Limiting, Prompt Guardrails/Filtering, Logging).

- Credential injection & forwarding: The gateway dynamically injects securely managed LLM API credentials (not held by the agent) and forwards the enriched request to the target LLM.

- Response guardrails: The gateway intercepts the LLM response to apply post-processing guardrails (Content Moderation, Data Masking/Redaction, Format Transformation) before sending the secured output back to the agent.
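The flow above can be sketched end to end in Python using only the standard library. This is a simplified illustration, not the WSO2 implementation: it assumes an HS256 token signed with a shared secret so that the example is self-contained, whereas a production gateway would typically validate tokens against the issuer's published keys with a maintained JWT library. The secret, the placeholder LLM key, the allowed role set, and the email-masking rule are all hypothetical.

```python
import base64
import hashlib
import hmac
import json
import re
import time

# Assumptions for this sketch: a shared signing secret and a placeholder LLM key.
SIGNING_SECRET = b"demo-secret"
LLM_API_KEY = "llm-key-held-by-gateway"
ALLOWED_ROLES = {"customer-service-agent"}


def _b64url_encode(data):
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input):
    return hmac.new(SIGNING_SECRET, signing_input, hashlib.sha256).digest()


def issue_token(agent_id, roles, ttl_seconds=300):
    """Stand-in for the identity provider: mint a time-bound signed token."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    claims = {"sub": agent_id, "roles": roles, "exp": int(time.time()) + ttl_seconds}
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = _sign(f"{header}.{payload}".encode("ascii"))
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def verify_token(token):
    """Check the signature and expiry, then return the token's claims."""
    try:
        header, payload, signature = token.split(".")
        expected = _sign(f"{header}.{payload}".encode("ascii"))
        if not hmac.compare_digest(expected, _b64url_decode(signature)):
            raise PermissionError("bad signature")
        claims = json.loads(_b64url_decode(payload))
    except ValueError as exc:  # wrong part count, bad base64, or bad JSON
        raise PermissionError("malformed token") from exc
    if claims.get("exp", 0) < time.time():
        raise PermissionError("token expired")
    return claims


def handle_request(token, prompt, call_llm):
    """Gateway steps: verify, enforce policy, inject credentials, filter output."""
    claims = verify_token(token)
    if not ALLOWED_ROLES.intersection(claims.get("roles", [])):
        raise PermissionError("agent role is not permitted to call this LLM")
    # The LLM credential lives in the gateway and never reaches the agent.
    headers = {"Authorization": f"Bearer {LLM_API_KEY}"}
    response = call_llm(prompt, headers)
    # Response guardrail: mask anything that looks like an email address.
    return re.sub(r"[\w.+-]+@[\w-]+\.[\w.-]+", "[REDACTED]", response)
```

Here `call_llm` is a caller-supplied function standing in for the upstream LLM call, which keeps the sketch runnable without a network. The agent only ever holds the short-lived token; rotating or revoking the LLM key is then a gateway-side change that no agent needs to know about.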

This seamless combination of identity management from WSO2 Agent ID and the powerful, integrated enforcement capabilities of the WSO2 AI Gateway creates a highly secure, governable, and cost-effective AI ecosystem, centralizing control over both inbound and outbound LLM interactions.

For a more practical guide, refer to the following tutorials:

→ Tutorial 1: WSO2 Agent ID with WSO2 AI Gateway

→ Tutorial 2: WSO2 Agent ID with Kong Gateway

Future implications and the road ahead

The integration of AI agent identity with AI gateways is not merely an incremental improvement; it's a foundational shift towards truly governable AI systems. As AI agents become more autonomous and interact in increasingly complex multi-agent environments, the ability to uniquely identify, authenticate, and control each agent will be paramount. This approach paves the way for advanced AI governance models, standardized agent interactions, and ultimately, more responsible and beneficial AI deployments.

Conclusion

The journey towards fully realizing the potential of AI gateways hinges on our ability to give each AI agent a unique identity. By providing this crucial context at the traffic interception point, solutions like WSO2 Agent ID and WSO2 AI Gateway transform generic traffic management into intelligent, agent-specific control. This fundamental shift enhances security, optimizes resource utilization, ensures compliance, and lays the groundwork for a more accountable and responsible AI-driven future. Organizations embracing AI must recognize that identity is not just for humans; it's the key to unlocking the true power of their AI agents.