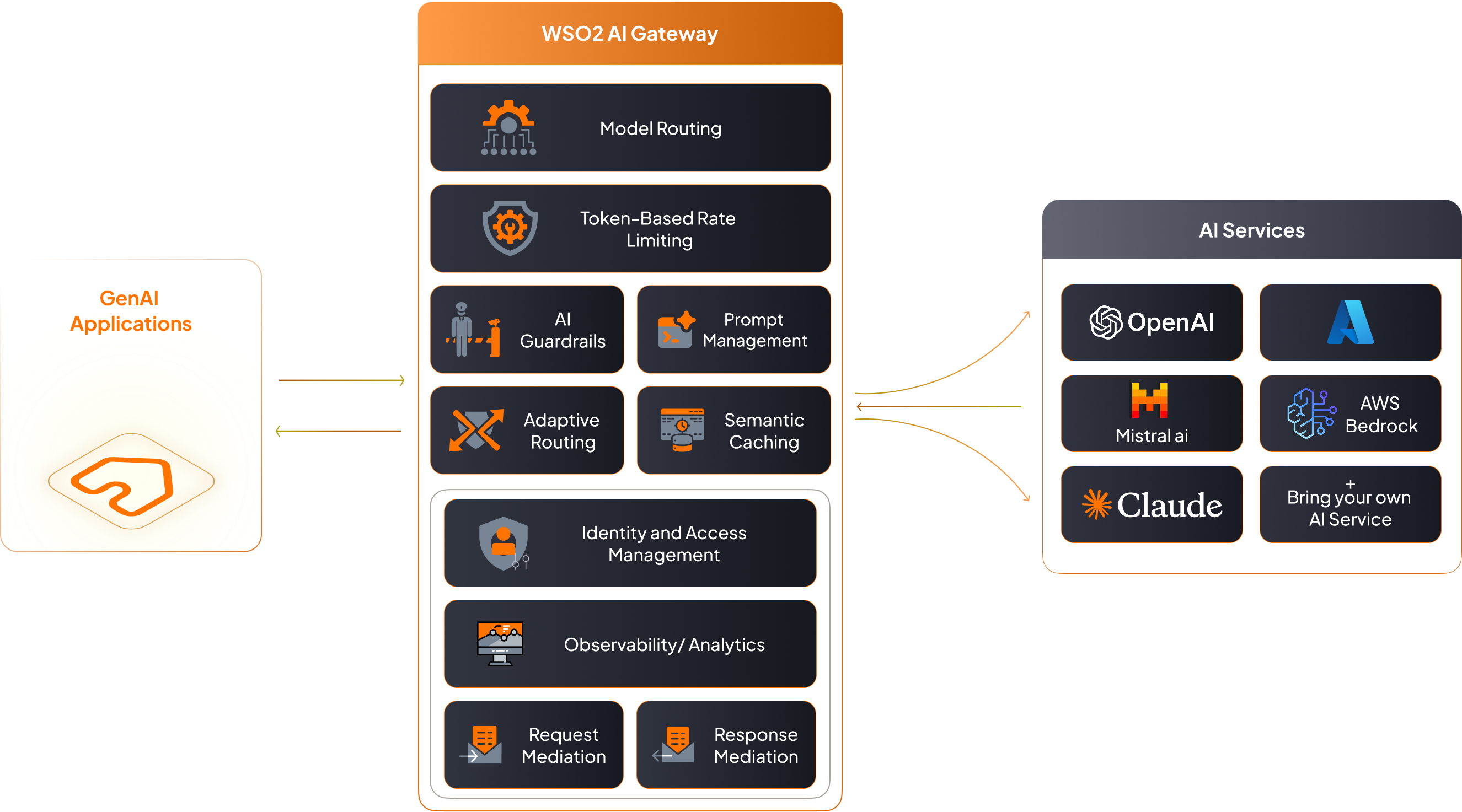

WSO2 AI Gateway: Govern Your AI APIs

WSO2 AI Gateway delivers industry-leading API management and critical AI governance with enhanced:

- AI Guardrails

- AI Observability

- AI Traffic Management

- OOTB LLM Support: includes OpenAI, Mistral AI, Azure OpenAI, and other leading providers.

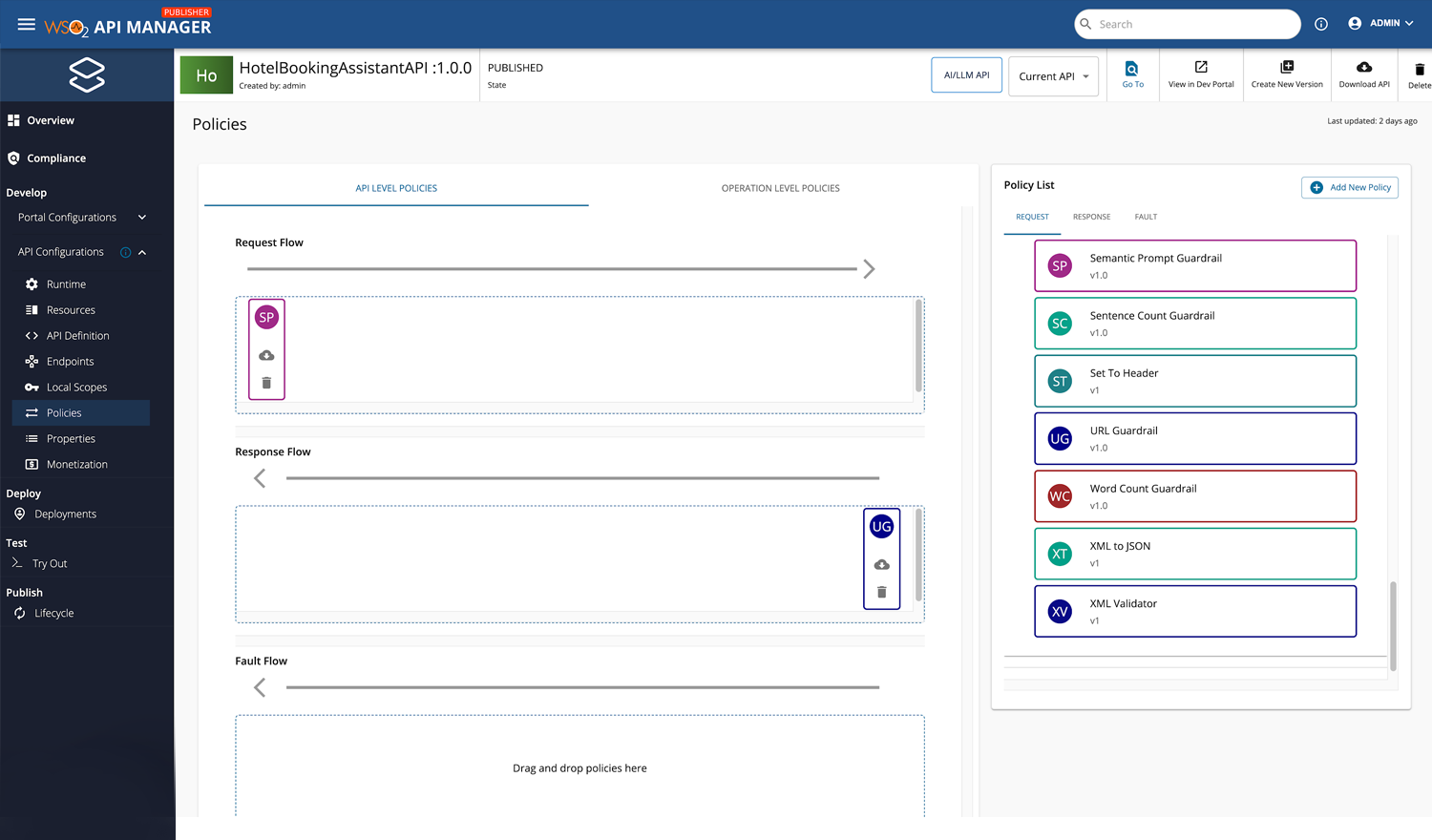

AI guardrails for safe and responsible use

Easily comply with regulations, prevent misuse, and protect your data by applying built-in and external guardrails for responsible AI use.

Out-of-the-box guardrails

Apply built-in guardrails to ensure safe and structured AI interactions: prevent harmful or inappropriate inputs with Semantic Prompt Guard, mask personally identifiable information (PII) using Prompt Guard, enforce response structure with JSON Schema and Regex Validation, control output length with word and sentence limits, and validate links with URL checks.
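To make these guardrail types concrete, here is a minimal Python sketch of four of them: PII masking, a word limit, a response structure check, and URL extraction for later validation. It is not WSO2's implementation. The function names, the email-only PII pattern, and the simple required-key check (a stand-in for full JSON Schema validation) are all assumptions chosen for illustration.

```python
import json
import re

# Illustrative patterns only (assumptions); production PII and URL
# detection needs far broader coverage than a single regex each.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
URL_RE = re.compile(r"https?://[^\s)\"'>]+")


def mask_pii(prompt: str) -> str:
    """Replace email addresses with a placeholder before the prompt is forwarded."""
    return EMAIL_RE.sub("[EMAIL]", prompt)


def within_word_limit(text: str, max_words: int) -> bool:
    """Return True if the text has at most max_words whitespace-separated words."""
    return len(text.split()) <= max_words


def has_required_keys(response_text: str, required: set) -> bool:
    """Check that the response is a JSON object containing every required key.

    This is a simplified stand-in for JSON Schema validation.
    """
    try:
        data = json.loads(response_text)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and set(required).issubset(data)


def extract_urls(text: str) -> list:
    """Collect http(s) links so each one can be checked by a URL validator."""
    return URL_RE.findall(text)
```

In a gateway, checks like `mask_pii` would typically run on the request path and checks like `has_required_keys` on the response path, with a failed check blocking or rewriting the message.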

Content safety

Detect and filter hate speech, violence, sexual content, self-harm, personal information leaks, jailbreak attempts, unsupported topics, and other harmful content in both text and images, with support provided through external providers.

Supported external providers

- Azure Content Safety

- AWS Bedrock Guardrails

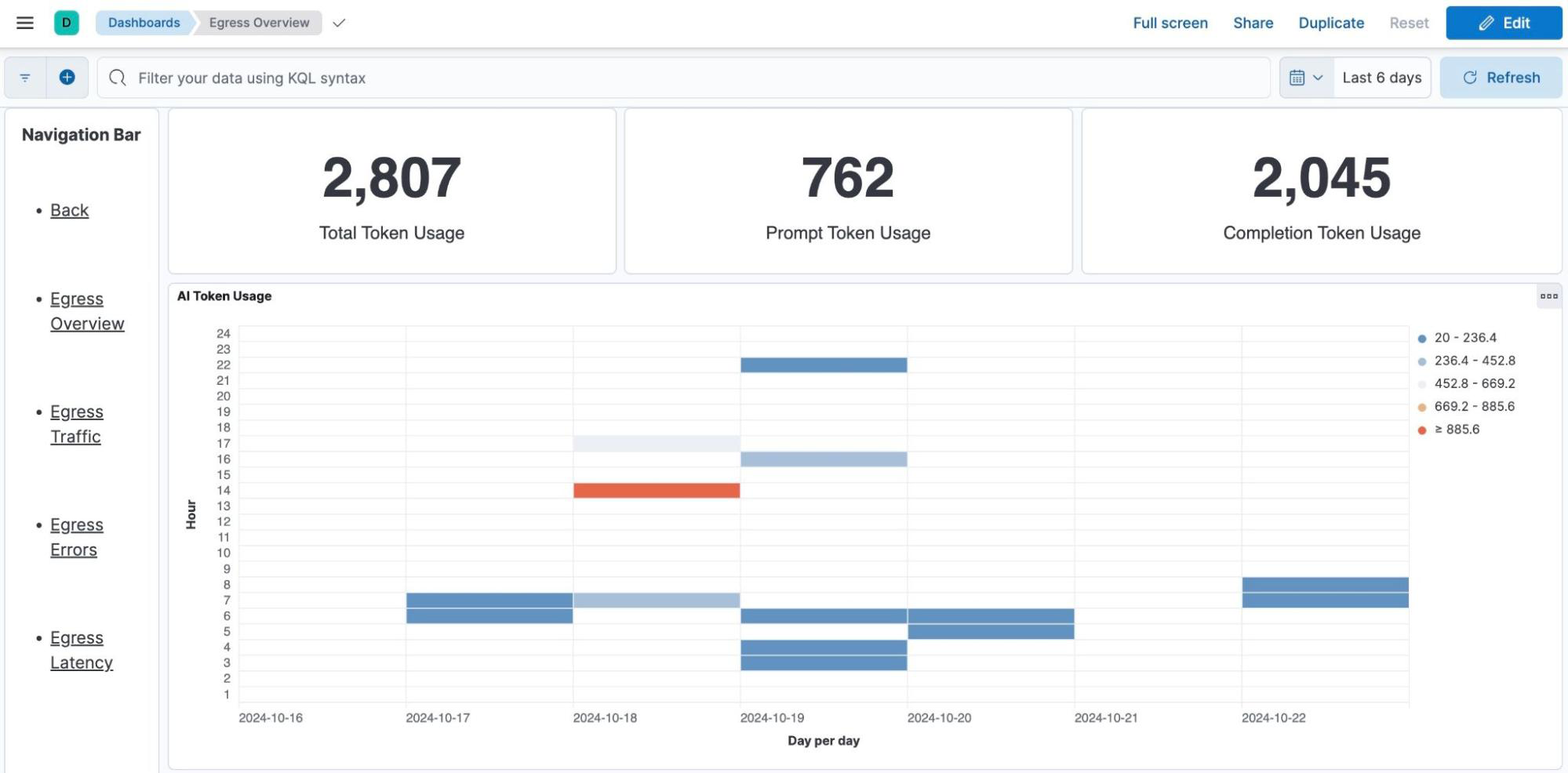

Observability and governance

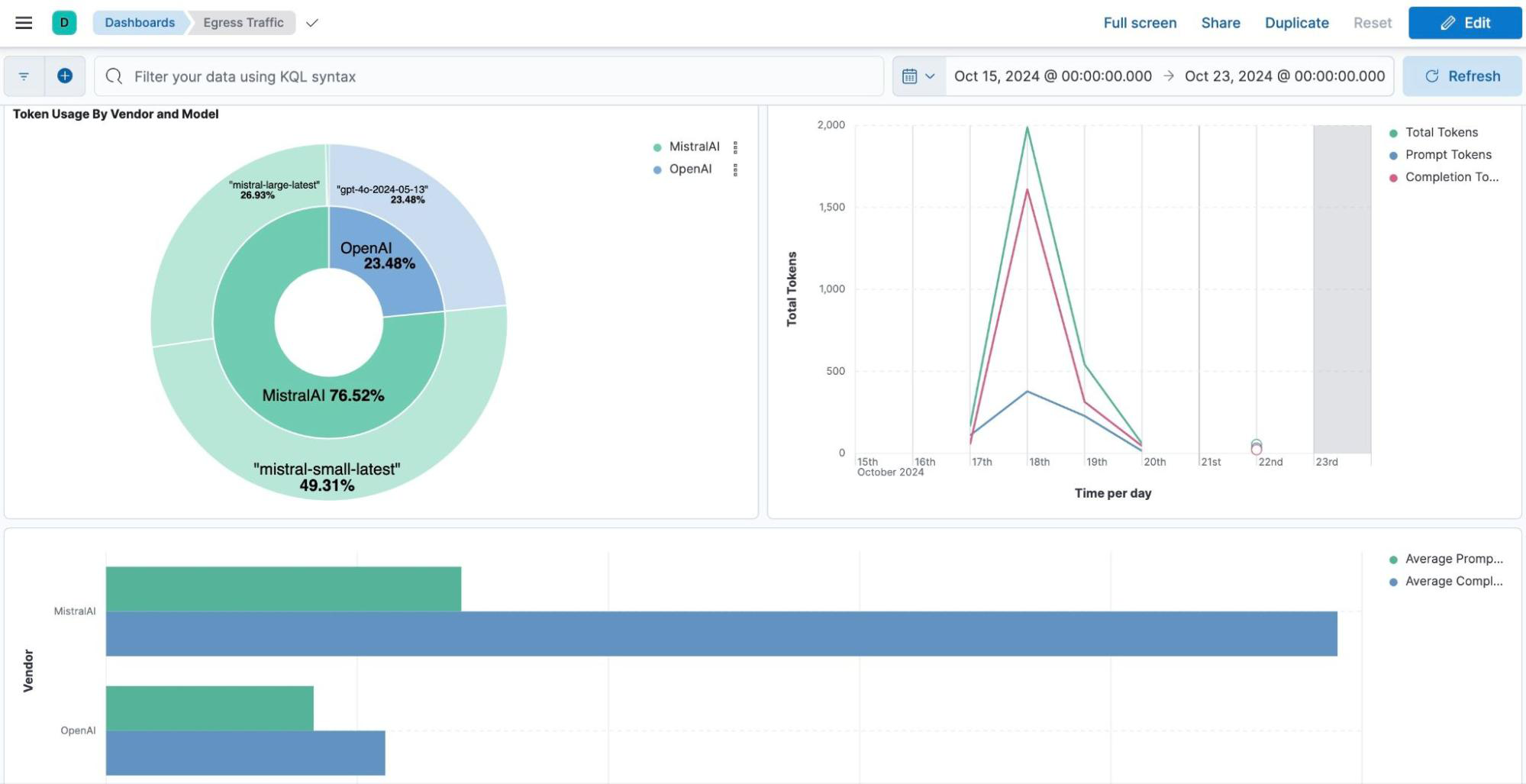

Track and summarize AI API usage for better oversight, and prioritize outbound traffic. Gain comprehensive insights into GenAI usage across your organization, including detailed visibility into how different LLM providers and services are utilized. Identifying usage patterns gives your teams the data they need to optimize resource allocation, model selection, and overall AI integration.

Usage tracking

Implement robust mechanisms to monitor and summarize AI API usage.
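As an illustration of what summarizing usage can look like, the sketch below aggregates per-call records into totals per provider and model. The record fields (`provider`, `model`, `prompt_tokens`, `completion_tokens`) and the function name are hypothetical; they are not taken from any WSO2 analytics schema.

```python
from collections import defaultdict


def summarize_usage(records):
    """Aggregate request and token counts per (provider, model) pair.

    Each record is assumed to be a dict with the keys "provider", "model",
    "prompt_tokens", and "completion_tokens" (a hypothetical layout).
    """
    totals = defaultdict(
        lambda: {"requests": 0, "prompt_tokens": 0, "completion_tokens": 0}
    )
    for record in records:
        entry = totals[(record["provider"], record["model"])]
        entry["requests"] += 1
        entry["prompt_tokens"] += record["prompt_tokens"]
        entry["completion_tokens"] += record["completion_tokens"]
    return dict(totals)
```

A summary keyed this way makes it straightforward to compare providers or models side by side on a dashboard.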

Traffic prioritization

Develop strategies for managing and prioritizing GenAI usage with better oversight of calls to external LLM services.

Granular rate limiting

Monitor backend rate limits based on requests and token count, and manage subscription-level access controls for fair usage.
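Limiting on both requests and token count can be sketched with a simple fixed-window counter, shown below. This is an assumption-laden illustration, not the gateway's actual algorithm: the class name, the fixed-window strategy, and the `now` parameter (added so the example can be exercised deterministically) are all hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WindowLimiter:
    """Fixed-window limiter that caps both request count and token count."""

    max_requests: int
    max_tokens: int
    window_seconds: float = 60.0
    _window_start: Optional[float] = field(default=None, init=False)
    _requests: int = field(default=0, init=False)
    _tokens: int = field(default=0, init=False)

    def allow(self, tokens: int, now: Optional[float] = None) -> bool:
        """Return True and record the call if it fits in the current window."""
        now = time.monotonic() if now is None else now
        # Start a fresh window on first use or once the current one has expired.
        if self._window_start is None or now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._requests = 0
            self._tokens = 0
        # Reject if either the request budget or the token budget would be exceeded.
        if self._requests + 1 > self.max_requests or self._tokens + tokens > self.max_tokens:
            return False
        self._requests += 1
        self._tokens += tokens
        return True
```

One limiter instance per backend covers backend limits; keeping a separate instance per subscription would be one way to model subscription-level fair usage.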

Control and quality of service

Gaining visibility and control over GenAI services is essential for businesses to effectively scale their AI use. As AI adoption grows, managing usage patterns, ensuring compliance, and optimizing performance become critical to meeting customer expectations and operational goals. With robust visibility, businesses can monitor AI interactions, identify opportunities for improvement, and prevent bottlenecks, enabling seamless growth without compromising service quality or efficiency.

Unified management

Create a comprehensive approach to managing AI API interactions.

Provider flexibility

Seamlessly integrate well-known and custom LLM service providers.

Prompt management

Apply prompt templates for consistent input formatting and add roles or context decorators (e.g., "Act as a tutor") to control LLM tone.
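A prompt template combined with a role decorator can be sketched as follows. The example uses Python's standard `string.Template` and assumes a chat-style message list with `role` and `content` fields, as accepted by many LLM chat APIs. The template text and the `build_messages` helper are hypothetical and do not reflect WSO2 configuration syntax.

```python
from string import Template

# Hypothetical template: $max_sentences and $text are filled in per request.
SUMMARY_TEMPLATE = Template(
    "Summarize the following text in $max_sentences sentences:\n$text"
)


def build_messages(template, decorator=None, **params):
    """Build a chat-style message list from a template and an optional decorator.

    The decorator (for example "Act as a tutor") is sent as a system message
    so it shapes tone without changing the user's input.
    """
    messages = []
    if decorator:
        messages.append({"role": "system", "content": decorator})
    messages.append({"role": "user", "content": template.substitute(**params)})
    return messages
```

For example, `build_messages(SUMMARY_TEMPLATE, "Act as a tutor", max_sentences=2, text="...")` yields a system message carrying the decorator followed by a user message with the formatted prompt.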

Cost and performance optimization

Optimizing AI usage enables businesses to achieve more with less, maximizing the value of their AI investments. By fine-tuning resource allocation, managing workloads efficiently, and leveraging scalable solutions, businesses can reduce unnecessary expenses while maintaining high performance and improving efficiency, freeing savings to reinvest in innovation and growth.

Operational visibility and cost awareness

Gain transparent insight into how LLMs are accessed and used—supporting better governance and cost optimization.

Smart resource utilization

Allocate resources efficiently by tracking usage patterns and token consumption, and by aligning API expenses with business value.

Performance insights and semantic caching

Monitor model-level performance with detailed analytics and reduce redundant calls using semantic caching for faster, more cost-effective responses.
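The idea behind semantic caching is to reuse a stored response when a new prompt is close enough in meaning to an earlier one. The sketch below shows the lookup logic with cosine similarity and a threshold. Note the loud assumption: `embed` is a toy bag-of-words stand-in so the example runs without external services, whereas a real semantic cache would use an embedding model. The class name and the 0.8 threshold are also hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding (assumption): lowercase word counts, not a real model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    """Return a cached response when a prompt is similar enough to a stored one."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold
        self._entries = []  # list of (embedding, response) pairs

    def put(self, prompt, response):
        self._entries.append((embed(prompt), response))

    def get(self, prompt):
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        # Only serve from cache when the closest match clears the threshold.
        return best_response if best_score >= self.threshold else None
```

The threshold controls the trade-off: a higher value avoids serving mismatched answers, while a lower value saves more LLM calls.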

Benefits of WSO2 AI Gateway

Use full lifecycle API management and governance to gain control over and visibility into AI services, while effectively navigating the complexity of AI APIs for everyone in your organization.

Administrators

- Efficiently manage popular LLM providers.

- Onboard custom AI providers tailored to unique business needs.

- Track detailed analytics, including token usage by vendor or model, using intuitive dashboards—optimizing for usage patterns and forecasting costs.

Developers

- Simplify API creation and deployment with predefined LLM providers, accelerating the development lifecycle.

- Enforce backend rate limits by defining token and request thresholds, safeguarding against excessive usage costs.

Product managers

- Introduce subscription rate limits to ensure equitable access for API consumers.

- Control access via subscription policies, creating structured plans that balance cost with access tiers.

Get Started with WSO2 API Manager and Bijira

Unlock the full potential of your AI APIs with enterprise-grade governance, safety, and performance tools.

Take control of your GenAI adoption—on-prem or in the cloud.