Unified Control of AI and MCP Services

A single control plane for all AI traffic, covering both LLM consumption (outbound) and MCP tool exposure (inbound). Apply guardrails, monitor usage, optimize costs, and govern agent access under one model.

Why it matters

GenAI and autonomous agents are reshaping applications, but without governance, they introduce risk:

Protect sensitive data

LLM prompts may leak sensitive information.

Deliver safe AI outputs

Model outputs can be unsafe, non-compliant, or unpredictable.

Make AI spend predictable

AI investments become expensive without token-level visibility.

Remove integration complexity

Agents require brittle, custom wrappers to call enterprise APIs.

Unify AI traffic control

AI traffic operates in silos, without centralized oversight.

WSO2 AI Gateway unifies governance for all AI interactions (LLM calls, AI API usage, and agent access through MCP), ensuring safety, consistency, and operational control.

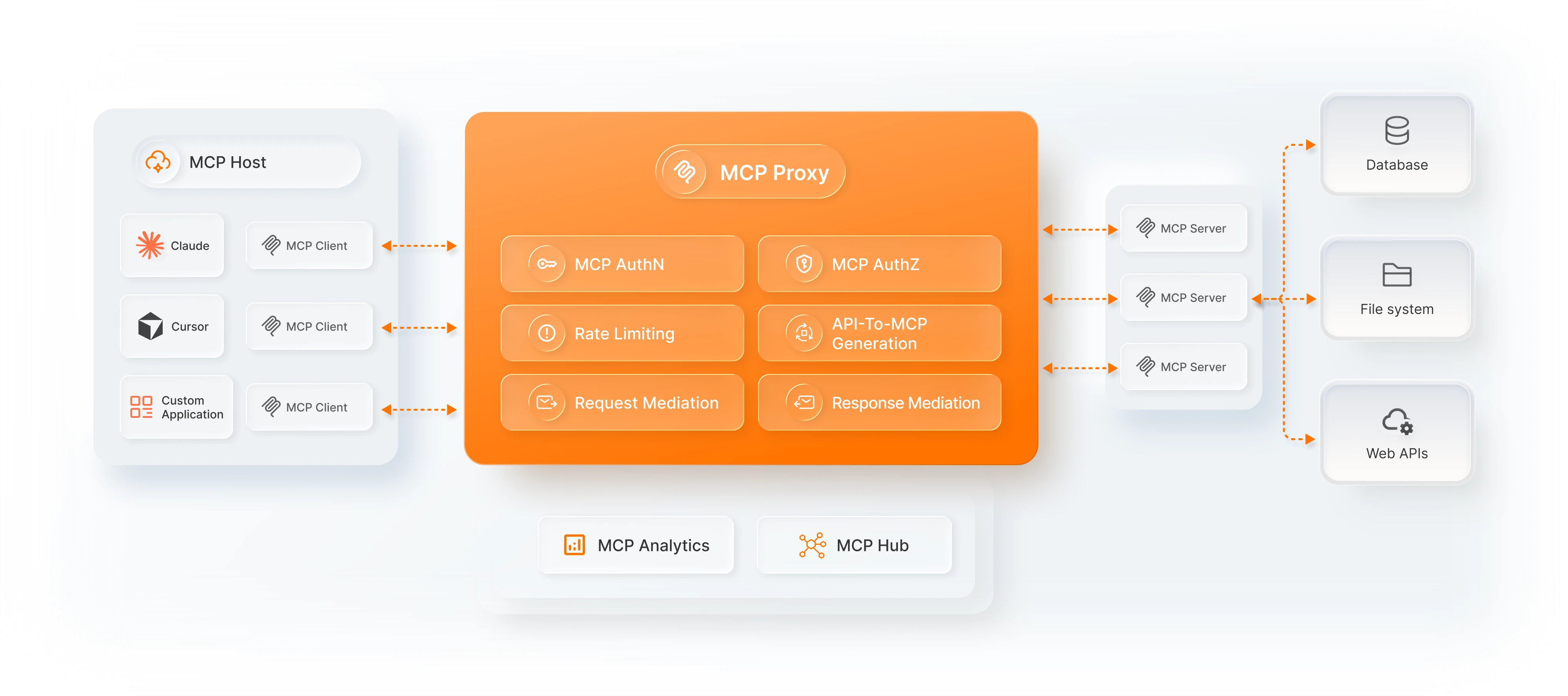

MCP governance and control

Enable AI agents to safely access internal systems using the Model Context Protocol (MCP).

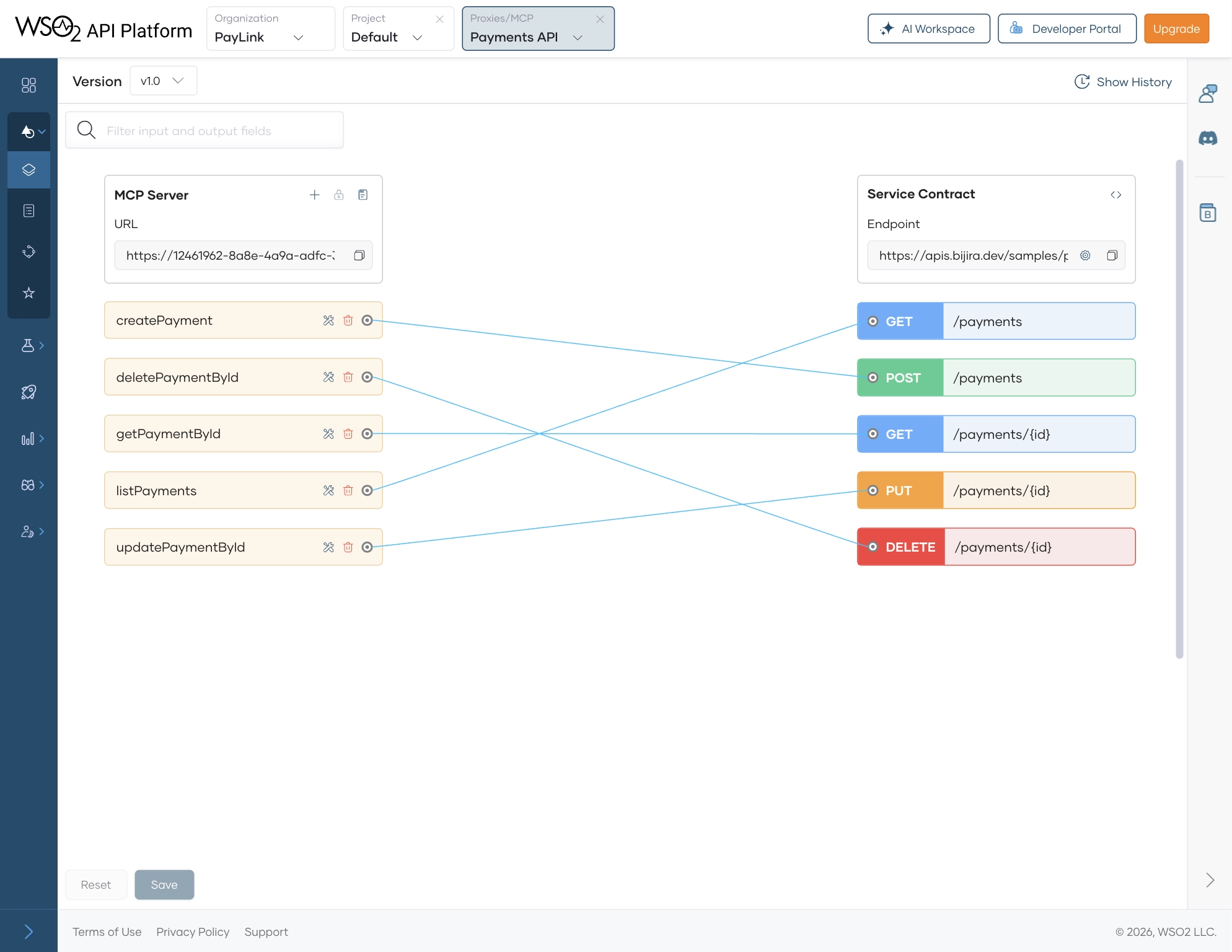

Convert any REST API into an MCP-compatible server in minutes. This bridges your APIs into agent workflows without sacrificing governance.

- No wrappers or custom integration code

- Expose backend services or existing API proxies as MCP tools

- Make enterprise capabilities discoverable and agent-ready
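To make the REST-to-MCP idea concrete, here is a minimal sketch of how one REST operation could be described as an MCP tool. It is not WSO2's implementation. The function name `openapi_operation_to_mcp_tool` and the `getOrder` operation are hypothetical. The only assumption about MCP itself is that a tool is advertised with a `name`, a `description`, and a JSON Schema `inputSchema`.

```python
from typing import Any


def openapi_operation_to_mcp_tool(path: str, method: str, operation: dict[str, Any]) -> dict[str, Any]:
    """Map one OpenAPI-style operation to an MCP-style tool descriptor."""
    properties: dict[str, Any] = {}
    required: list[str] = []
    for param in operation.get("parameters", []):
        # Each REST parameter becomes one property of the tool's input schema.
        properties[param["name"]] = {
            **param.get("schema", {"type": "string"}),
            "description": param.get("description", ""),
        }
        if param.get("required", False):
            required.append(param["name"])
    # Fall back to a name derived from method and path when no operationId is given.
    fallback_name = f"{method.lower()}_{path.strip('/').replace('/', '_')}"
    return {
        "name": operation.get("operationId", fallback_name),
        "description": operation.get("summary", ""),
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    }


# Hypothetical operation, used only for illustration.
get_order = {
    "operationId": "getOrder",
    "summary": "Fetch an order by ID",
    "parameters": [
        {
            "name": "orderId",
            "in": "path",
            "required": True,
            "schema": {"type": "string"},
            "description": "Order identifier",
        },
    ],
}
tool = openapi_operation_to_mcp_tool("/orders/{orderId}", "GET", get_order)
```

An agent that discovers `tool` sees a typed, documented capability. The gateway remains the place where the underlying REST call is authenticated and rate limited.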

Bring all MCP servers — whether you create them or consume them — under one governance layer. Govern all agent-accessible capabilities from a single platform.

- Proxy external MCP servers through WSO2

- Apply authentication and rate limits

- Monitor tool-level and server-level usage

- Maintain centralized visibility

Apply the same enterprise protections used for APIs to all agent interactions. Ensure every agent call is safe, compliant, and controlled.

- Authentication and access control

- Throttling and quotas

- AI guardrails and schema validation

- Detailed logging and anomaly monitoring

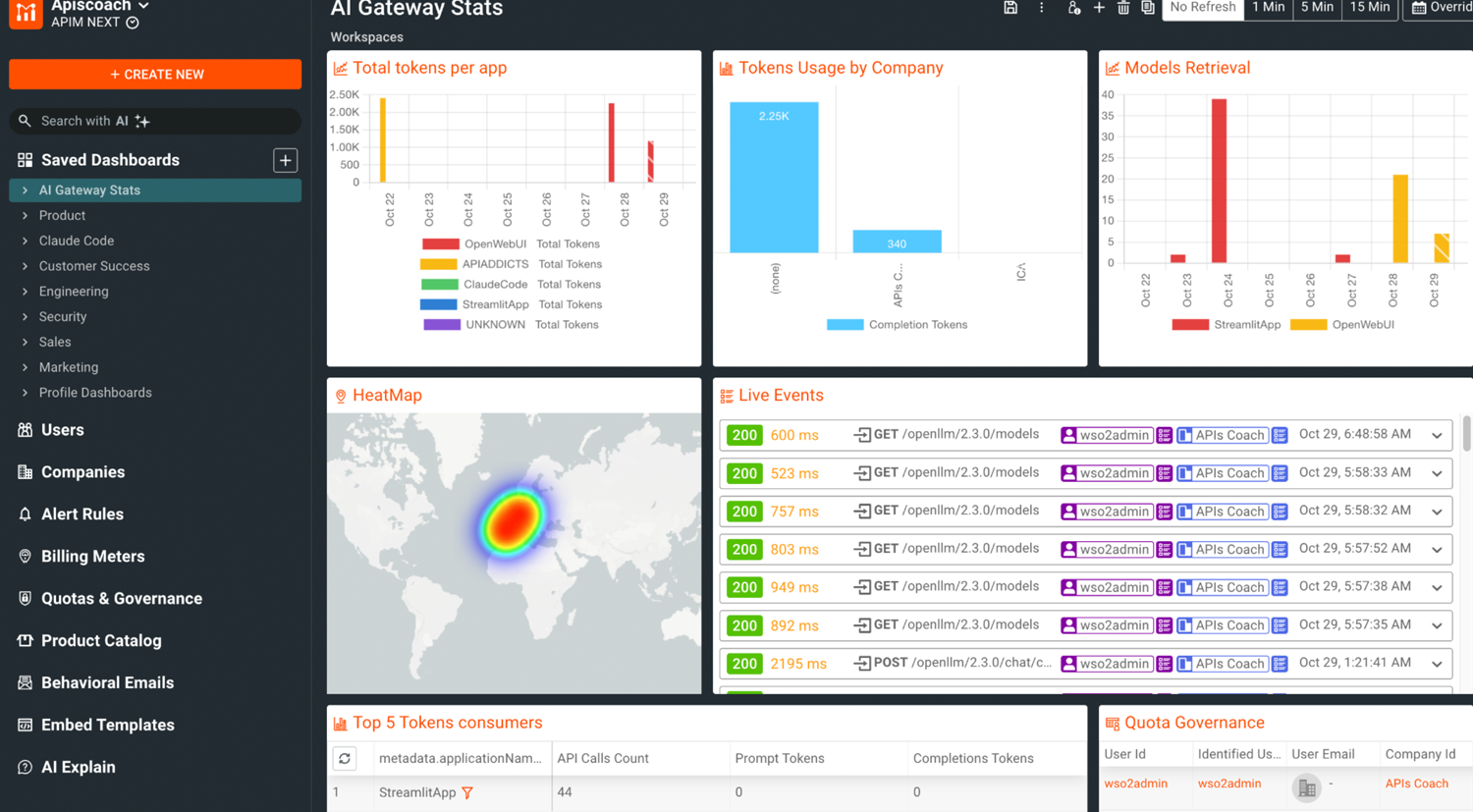

Track and analyze all AI traffic: LLM usage, AI API calls, and agent interactions. Visibility across the full AI ecosystem — in one place.

- Visualize tool-level and API-level usage

- Detect anomalous agent patterns

- Understand how agents consume enterprise data

- Drive optimization with Moesif-powered analytics

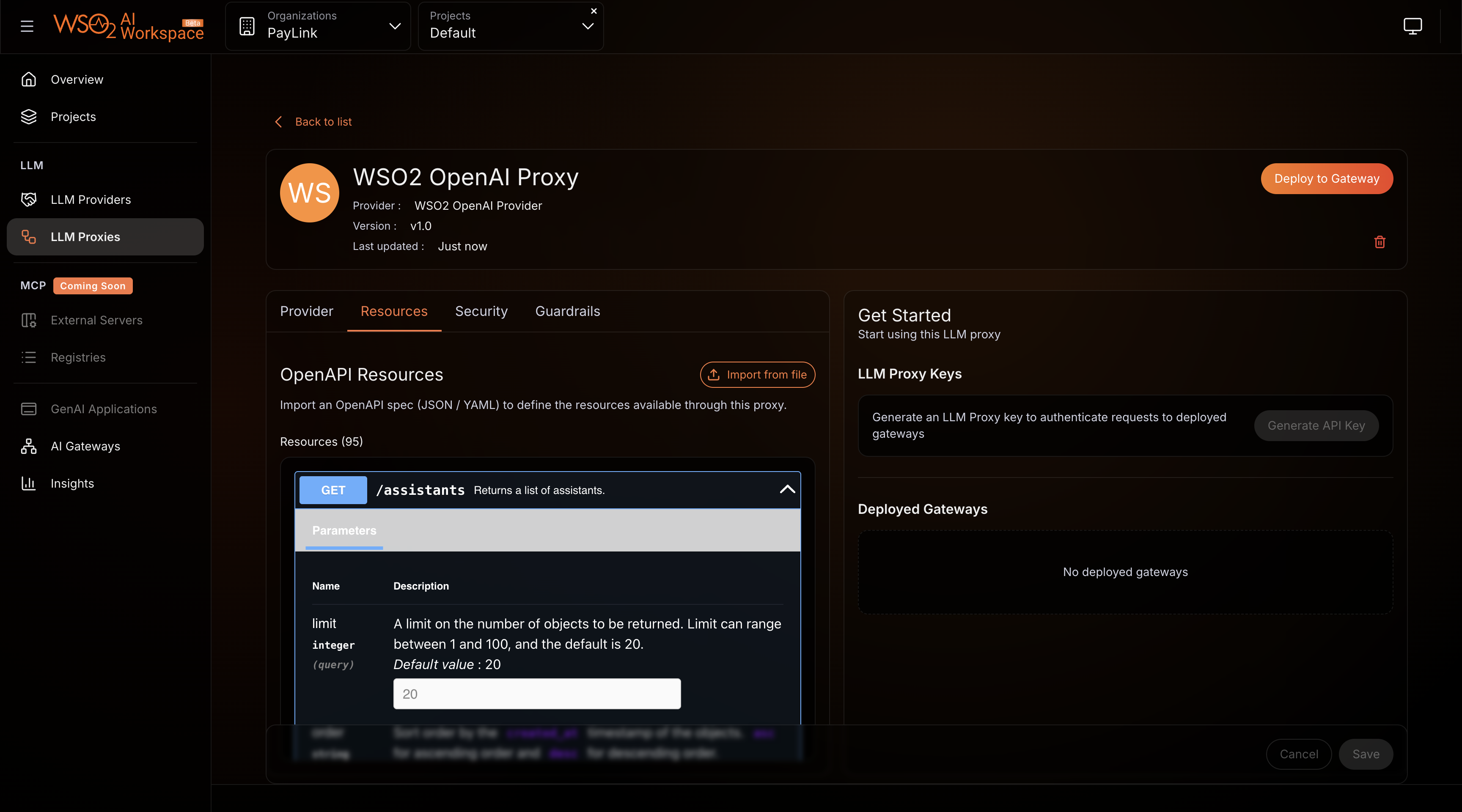

LLM and AI API governance

Secure, observe, and optimize all interactions with third-party and custom AI models.

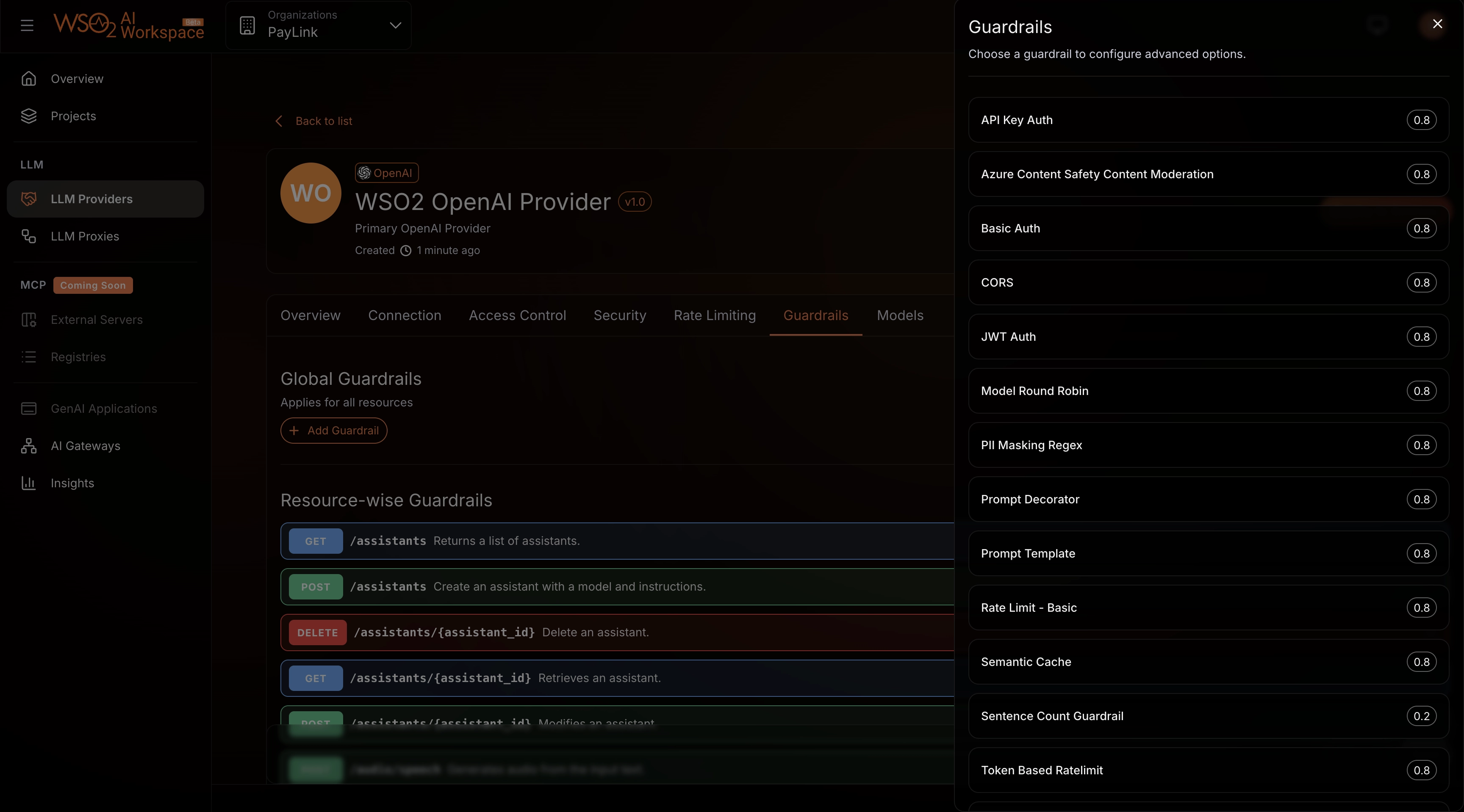

AI guardrails and safety

Protect your organization from unsafe prompts and responses with built-in and external guardrails. Ensure safe, structured, and compliant AI interactions across teams and use cases.

- Semantic prompt validation

- PII masking and redaction

- JSON Schema and regex-based output enforcement

- Output-length, role, and tone controls

- URL and content validation

- Integration with external content safety providers such as Azure Content Safety and AWS Bedrock Guardrails
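Two of the guardrails listed above, PII masking and regex-based output enforcement, can be sketched in a few lines. This is an illustrative sketch only, not the gateway's guardrail engine. The patterns, placeholder labels, and function names are assumptions, and production PII detection typically needs far broader coverage than two regular expressions.

```python
import re

# Illustrative patterns only; real PII detection covers many more formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mask_pii(prompt: str) -> str:
    """Replace email addresses and US SSN-shaped numbers before the prompt leaves the gateway."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return SSN.sub("[SSN]", prompt)


def response_allowed(response: str, max_chars: int, banned: re.Pattern) -> bool:
    """Accept a model response only if it is short enough and matches no banned pattern."""
    return len(response) <= max_chars and banned.search(response) is None


masked = mask_pii("Contact jane.doe@example.com, SSN 123-45-6789.")
no_links = re.compile(r"https?://")
ok = response_allowed("Your order has shipped.", max_chars=200, banned=no_links)
```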

LLM observability and usage governance

Gain deep visibility into how LLMs and AI APIs are used across your organization. Turn AI usage from opaque and risky into transparent and governable.

- Track token consumption, cost, and provider usage

- Monitor model performance and latency

- Identify heavy call patterns and optimization opportunities

- Prioritize outbound AI traffic based on business impact

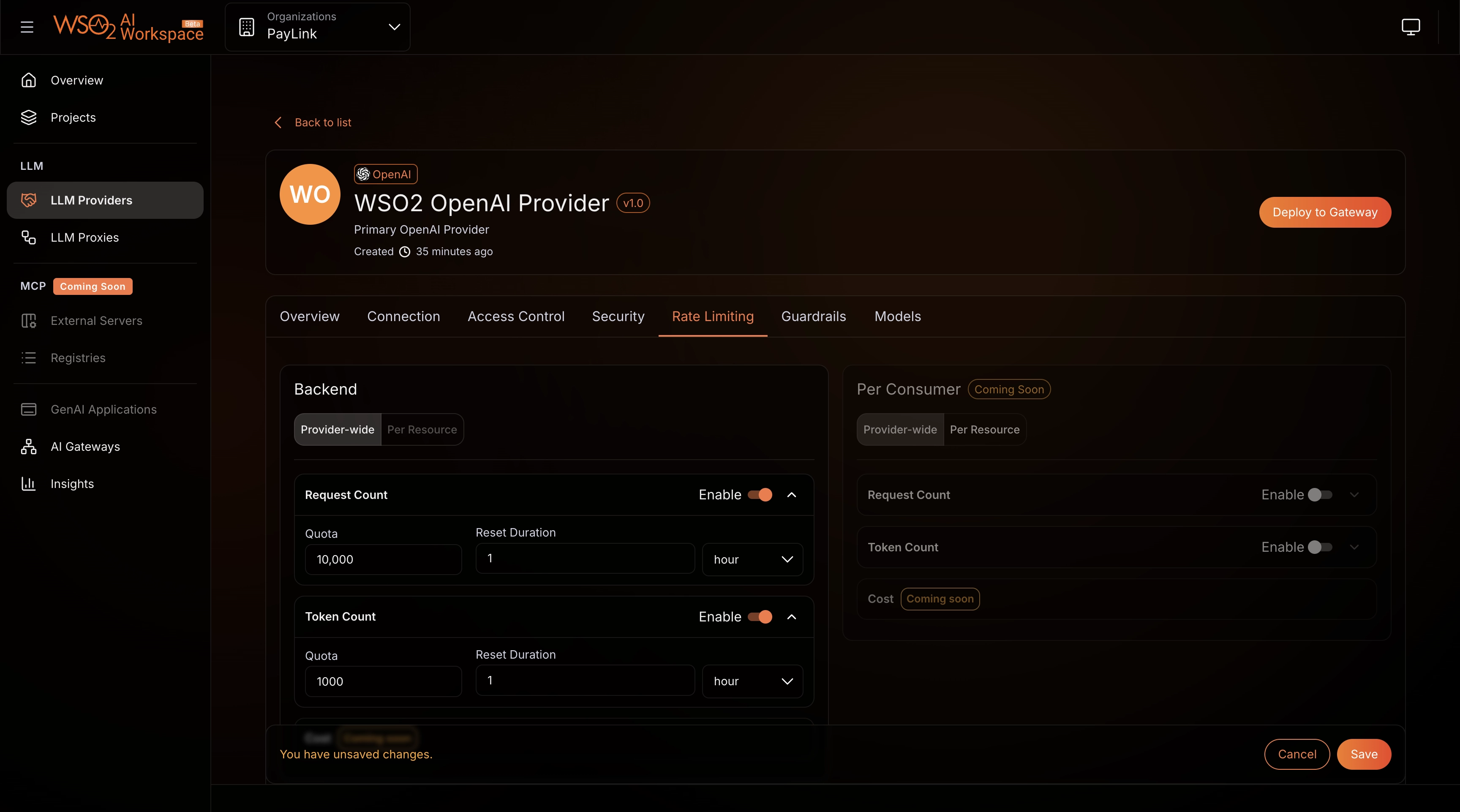

- Enforce token-level and subscription-level rate limits
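A token-level rate limit differs from a request-level one in that the quota is counted in LLM tokens consumed. The sketch below shows one simple way to do this with a fixed time window per subscriber. The class `TokenQuota` and its parameters are hypothetical and do not reflect how WSO2 AI Gateway implements its limits.

```python
import time


class TokenQuota:
    """Fixed-window quota counted in LLM tokens rather than requests."""

    def __init__(self, tokens_per_window: int, window_seconds: float, clock=time.monotonic):
        self.limit = tokens_per_window
        self.window = window_seconds
        self.clock = clock  # injectable so the behavior can be tested without sleeping
        self.usage = {}  # subscriber -> (window_start, tokens_used)

    def try_consume(self, subscriber: str, tokens: int) -> bool:
        """Record the usage and return True, or return False if it would exceed the quota."""
        now = self.clock()
        start, used = self.usage.get(subscriber, (now, 0))
        if now - start >= self.window:
            # The previous window has expired; start a fresh one.
            start, used = now, 0
        if used + tokens > self.limit:
            self.usage[subscriber] = (start, used)
            return False
        self.usage[subscriber] = (start, used + tokens)
        return True
```

In practice the token count would come from the provider's usage metadata on each response, so a limiter like this usually runs after the call completes and blocks the next one.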

Prompt management and quality of service

Standardize how teams interact with LLMs. Deliver predictable AI experiences with enterprise governance.

- Apply prompt templates for consistent format

- Set instructions and roles like “Act as a tutor”

- Enforce unified policies across all providers and services

- Ensure predictable, repeatable outputs across teams
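As a rough illustration of prompt templates and role instructions, the sketch below fixes the system instruction and the wording of the user prompt, so that teams only supply the variable parts. The template text, the `build_messages` helper, and the role/content message layout are assumptions chosen for illustration; they are not a WSO2 API.

```python
from string import Template

# Hypothetical template; only the $-placeholders vary between calls.
TUTOR_TEMPLATE = Template(
    "Explain $topic to a $level student in at most $max_sentences sentences."
)


def build_messages(system_role: str, template: Template, **values: str) -> list[dict[str, str]]:
    """Combine a fixed system instruction with a filled-in prompt template."""
    return [
        {"role": "system", "content": system_role},
        # substitute() raises KeyError if a placeholder is missing, so gaps fail fast.
        {"role": "user", "content": template.substitute(**values)},
    ]


messages = build_messages(
    "Act as a tutor.",
    TUTOR_TEMPLATE,
    topic="recursion",
    level="first-year",
    max_sentences="3",
)
```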

Cost and performance optimization

Prevent runaway LLM costs and improve performance. Optimize value from every AI interaction.

- Monitor usage patterns and forecast spend

- Allocate tokens intelligently to teams and workloads

- Use semantic caching to reduce repetitive calls

- Improve latency and avoid rate-limit bottlenecks
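Semantic caching returns a stored response when a new prompt is close enough in meaning to one already answered, rather than requiring an exact string match. The toy sketch below shows the mechanism. The `embed` function is a bag-of-words stand-in for a real embedding model, and the class name and the 0.8 threshold are arbitrary assumptions.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries = []  # list of (vector, response) pairs

    def get(self, prompt: str):
        """Return the cached response of the most similar prompt, or None on a miss."""
        vec = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(vec, e[0]), default=None)
        if best is not None and cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


cache = SemanticCache(threshold=0.8)
cache.put("What is the refund policy?", "Refunds are accepted within 30 days.")
hit = cache.get("what is the refund policy")
miss = cache.get("How do I reset my password?")
```

A cache hit skips the provider call entirely, which is where both the cost and latency savings come from. The threshold trades hit rate against the risk of returning an answer to a different question.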

How it fits into the platform

The WSO2 AI Gateway works as part of WSO2’s larger API and integration ecosystem, alongside:

Universal Gateway

Kubernetes Gateway

Immutable Gateway

Event Gateway

All are governed through the same control plane, on-premises or in the cloud.

Your APIs, LLMs, AI services, and agent access all share one governance model, one analytics view, one lifecycle.