One Workspace for Everything AI

A dedicated portal for managing AI gateways, LLM providers, MCP servers, and guardrails, without the constraints of traditional API management. Two purpose-built views give admins global governance controls and AI developers app-specific configurations.

Why it matters

AI services are different from traditional APIs, so they need a management layer built specifically to govern them. LLM provider credentials, proxy configurations, guardrails, and cost controls don't belong buried inside a general API management console. WSO2’s AI Workspace strips away the API noise and gives you a focused interface for everything AI.

Two views for two roles

Admins govern AI usage globally, controlling which LLM endpoints are exposed, setting cost caps, and enforcing guardrails across the organization. AI developers configure app-specific controls for agents, RAG, and GenAI applications.

LLM provider connections

Connect OpenAI, Anthropic, Azure OpenAI, Google Gemini, Mistral AI, and more. Manage credentials for all providers from one place to fight back against credential sprawl and the cost and security issues that come with it.

MCP server management

Register, discover, and govern MCP servers from a single place. Control access to MCP tools, resources, and prompts, with tooling for authentication, rate limits, and usage insights.

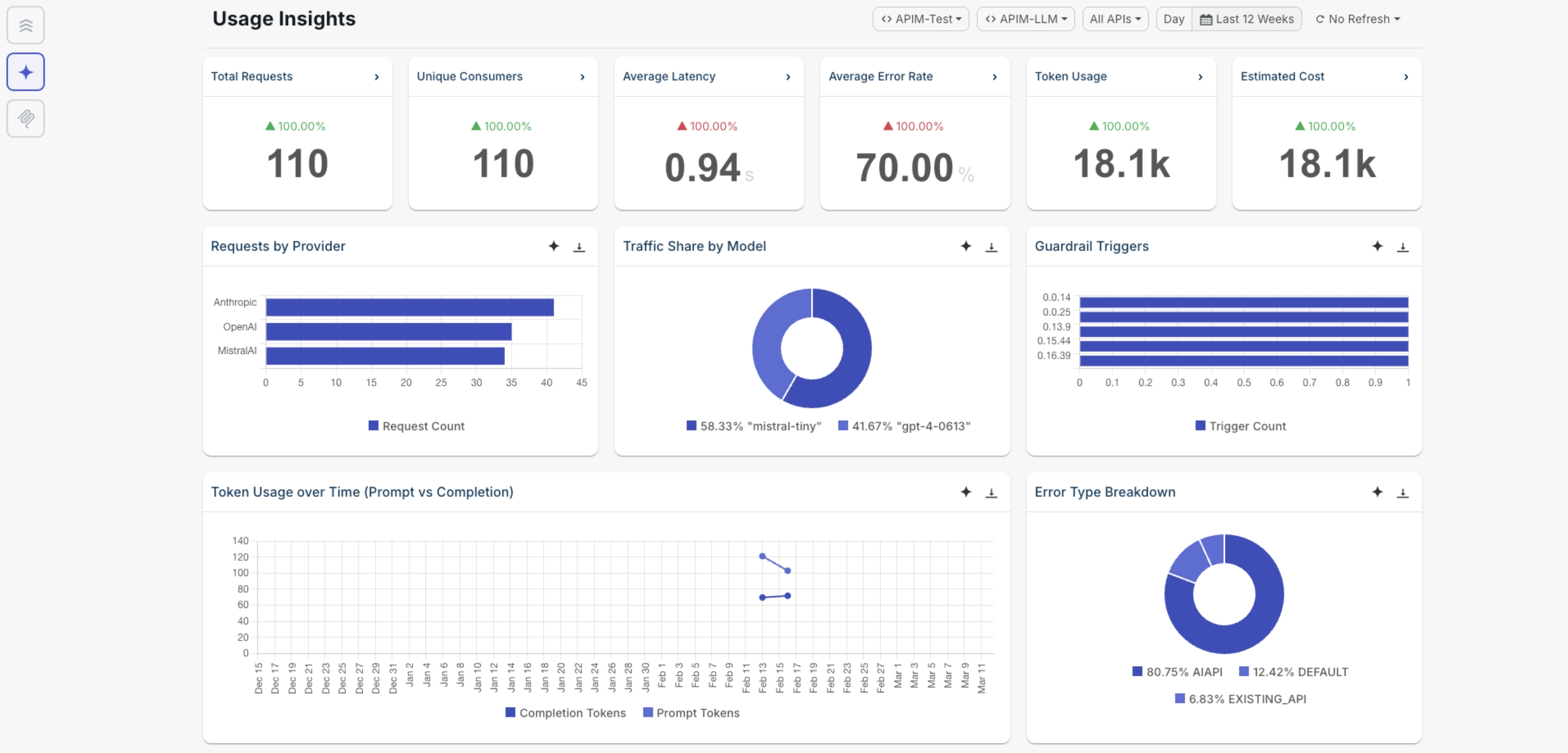

AI insights built in

Track token usage, estimated costs, requests by provider, traffic by model, guardrail triggers, and error breakdowns from a single dashboard. AI metrics are different from API metrics, and our tooling is built to match.

WSO2 AI Workspace gives AI teams a focused interface to manage every aspect of their AI infrastructure without navigating the full API management console.

Key capabilities

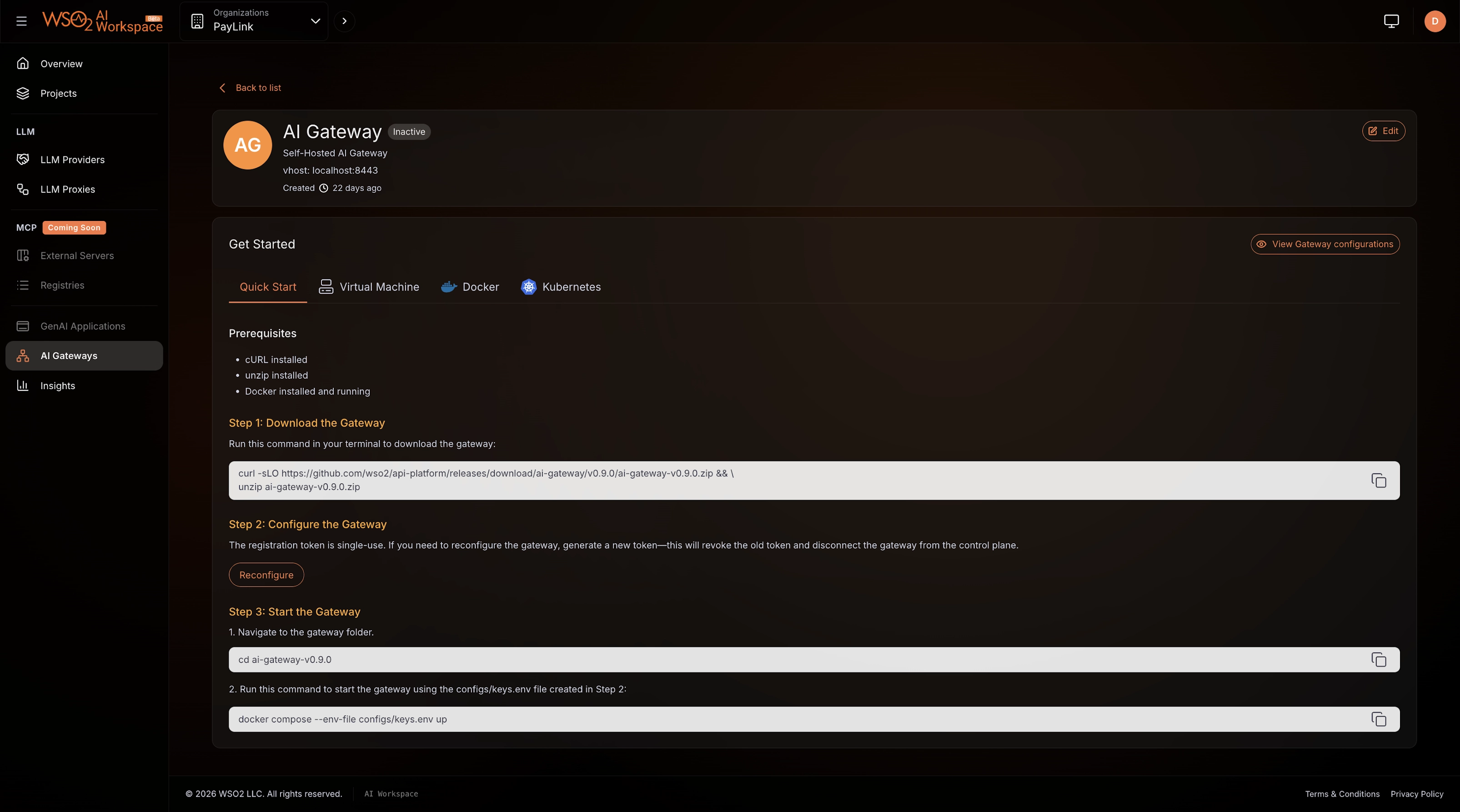

Deploy and manage AI gateways

Set up AI gateway runtimes that process and route requests between your applications and LLM providers or MCP servers. Deploy on your own infrastructure and connect back to the AI Workspace for centralized governance.

- Deploy via Quick Start, Virtual Machine, Docker, or Kubernetes

- Based on Envoy Proxy with Go-based policies and guardrails

- Python-based policies and guardrails coming April 2026

- Route traffic to all your LLM providers and MCP servers from a single gateway

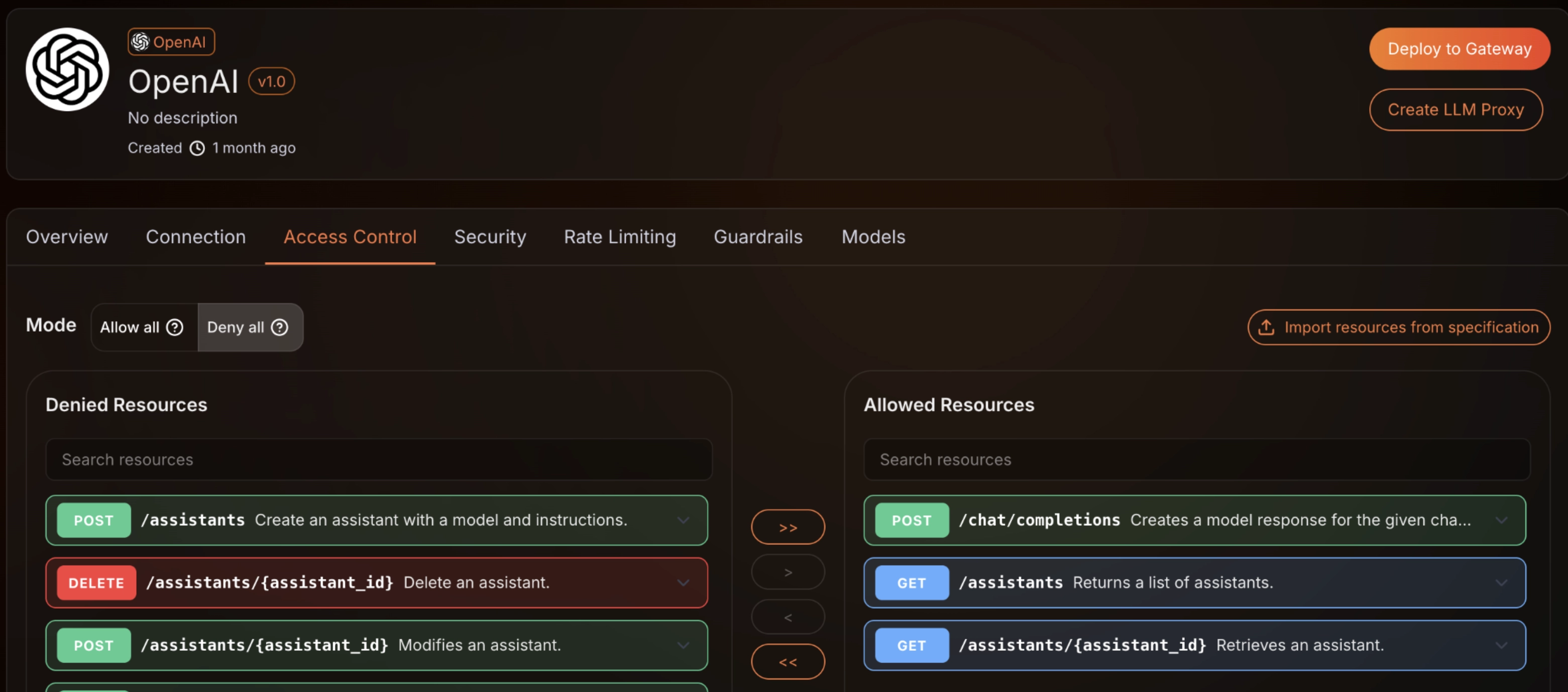

Control access to LLMs

Connect LLM providers, create managed proxy endpoints, and control exactly which models and operations each application can use. Each proxy gets its own API keys, rate limits, and access rules. Set allow or deny at the resource level so teams only reach the endpoints they need.

- OpenAI, Anthropic, Azure OpenAI, Google Gemini, Mistral AI (AWS Bedrock coming soon)

- Per-provider configuration: connection, security, rate limiting, guardrails, and model selection

- Allow/deny mode with granular resource-level control per provider

- Backend and per-consumer enforcement for fair usage distribution
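
To make the allow/deny model concrete, here is a minimal sketch of resource-level access control. It is an illustration only, not the AI Workspace API: the `AccessPolicy` class, its fields, and the example paths are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    """Hypothetical resource-level access policy for one LLM proxy."""
    # "allow": only listed resources pass; "deny": listed resources are blocked.
    mode: str
    # Each resource is an (HTTP method, path) pair.
    resources: set[tuple[str, str]] = field(default_factory=set)

    def permits(self, method: str, path: str) -> bool:
        listed = (method.upper(), path) in self.resources
        return listed if self.mode == "allow" else not listed


# Allow mode: this application may only call chat completions.
chat_only = AccessPolicy(mode="allow", resources={("POST", "/chat/completions")})

# Deny mode: everything is reachable except fine-tuning jobs.
no_fine_tuning = AccessPolicy(mode="deny", resources={("POST", "/fine_tuning/jobs")})
```

Allow mode suits applications that need a narrow, known set of operations. Deny mode suits teams that need broad access with a few exclusions.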

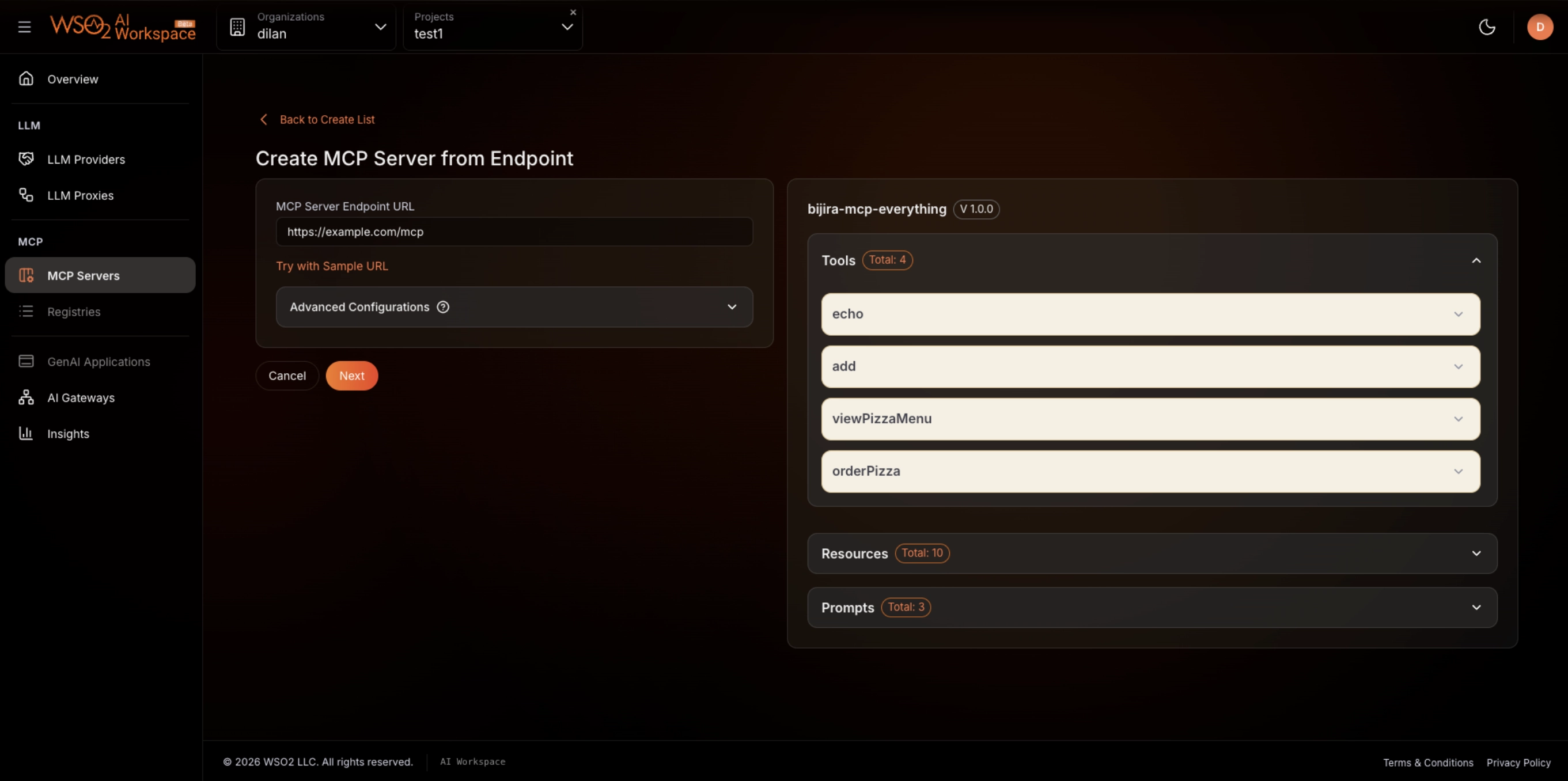

Govern MCP servers and tools

Register MCP servers by endpoint URL and manage access to their tools, resources, and prompts. Enforce authentication, authorization, and rate limits on MCP traffic. Give AI agents and developers a governed path to discover and consume your organization's capabilities.

- Create MCP servers from endpoint URLs with auto-discovered tools, resources, and prompts

- Enforce authN/authZ and rate limits on MCP tool access

- Centralized registry for AI agents and developers to discover available MCP servers

- Insights into MCP usage patterns and consumption

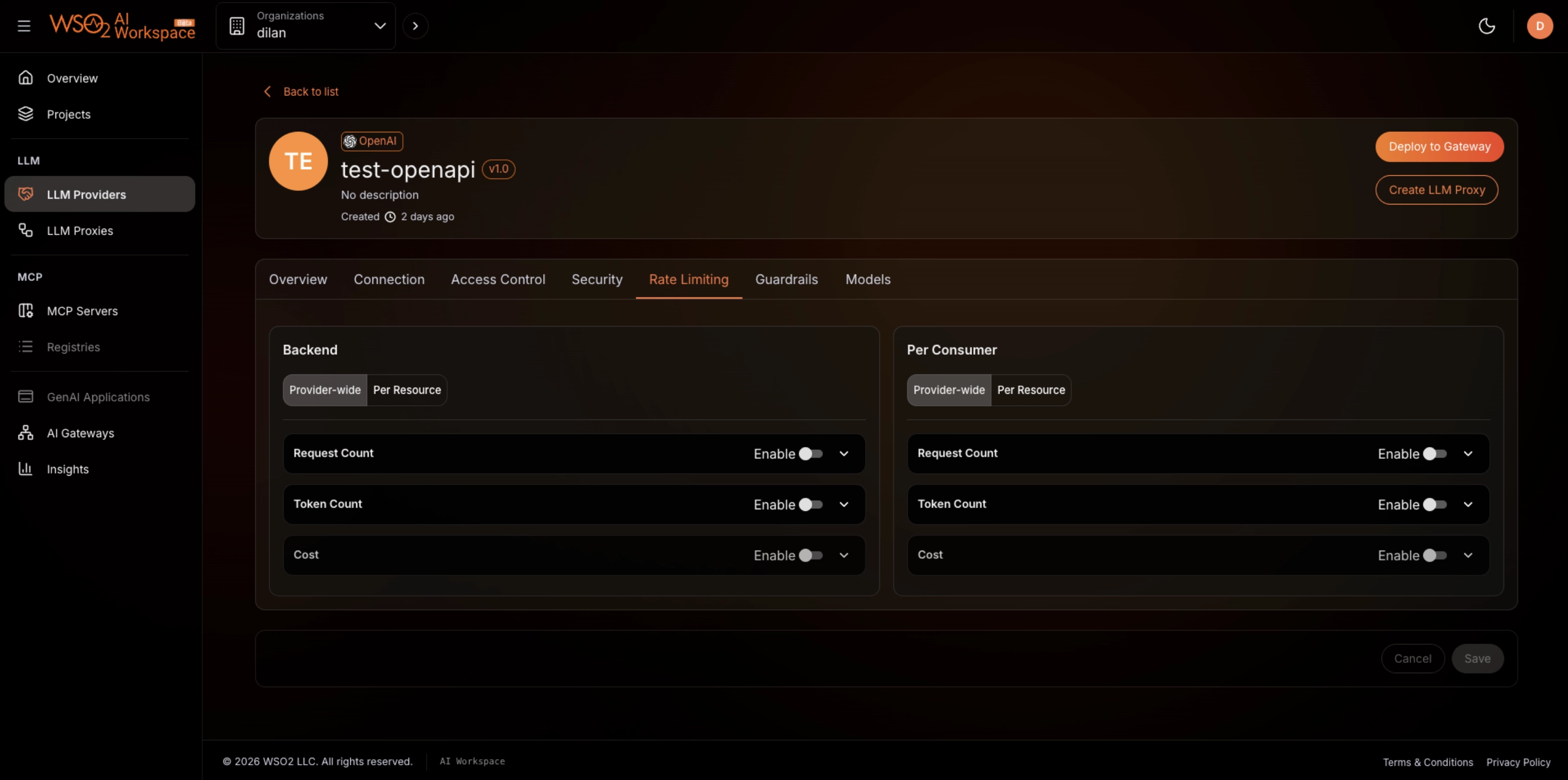

Cap costs and enforce quotas

Set limits on request count, token count, and cost at both the backend and per-consumer level. Prevent runaway LLM spend, enforce fair usage distribution, and stop abuse before it hits your budget.

- Request count, token count, and cost caps per provider

- Backend-wide and per-consumer quota enforcement

- Provider-wide or per-resource granularity

- Visibility into quota consumption patterns via AI Insights
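
The two enforcement levels can be sketched as follows. This is a simplified illustration under stated assumptions, not how the gateway implements quotas: `Limits` and `QuotaTracker` are hypothetical names, and the sketch assumes token count and cost are known when the request is checked, whereas a real gateway typically learns them from the provider's response.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Limits:
    """Hypothetical caps for one quota window."""
    max_requests: int
    max_tokens: int
    max_cost_usd: float


class QuotaTracker:
    """Tracks usage against a backend-wide limit and a per-consumer limit."""

    def __init__(self, backend: Limits, per_consumer: Limits):
        self.backend = backend
        self.per_consumer = per_consumer
        # Usage is stored as [requests, tokens, cost].
        self.backend_used = [0, 0, 0.0]
        self.consumer_used = defaultdict(lambda: [0, 0, 0.0])

    @staticmethod
    def _fits(used, limits: Limits, tokens: int, cost: float) -> bool:
        return (used[0] + 1 <= limits.max_requests
                and used[1] + tokens <= limits.max_tokens
                and used[2] + cost <= limits.max_cost_usd)

    def try_consume(self, consumer: str, tokens: int, cost: float) -> bool:
        """Record the request only if both the backend and consumer caps allow it."""
        mine = self.consumer_used[consumer]
        if not (self._fits(self.backend_used, self.backend, tokens, cost)
                and self._fits(mine, self.per_consumer, tokens, cost)):
            return False
        for used in (self.backend_used, mine):
            used[0] += 1
            used[1] += tokens
            used[2] += cost
        return True
```

Checking both levels before recording anything means one heavy consumer hits its own cap without using up the backend budget shared by everyone else.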

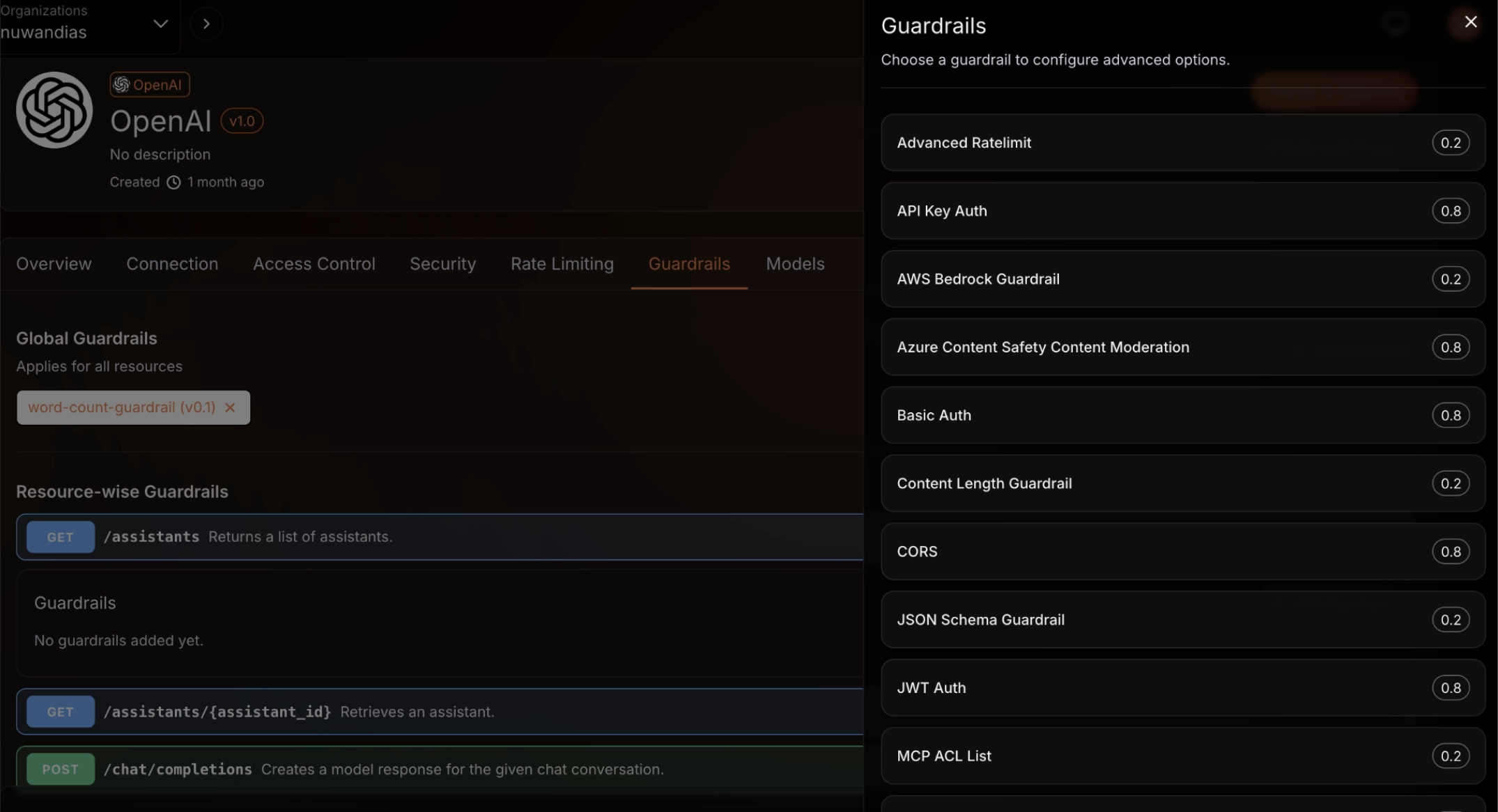

Enforce guardrails across LLMs and MCP

Apply guardrails globally or per individual endpoint across both LLM proxies and MCP servers. Choose from built-in guardrails and third-party integrations for compliance and governance.

- Built-in guardrails, such as content length validation, JSON schema validation, advanced rate limiting, and CORS

- Third-party guardrail support, including AWS Bedrock Guardrails and Azure Content Safety Content Moderation

- Authentication guardrails to enforce API Key Auth, Basic Auth, and JWT Auth

- MCP ACL List for controlling tool-level access

- Global guardrails and resource-wise guardrails for layered enforcement
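
Layered enforcement can be pictured as a chain of checks, with global guardrails running before resource-level ones. The sketch below is illustrative only and does not reflect the gateway's policy engine. All function names are hypothetical, and `require_json_keys` is a deliberately simplified stand-in for a full JSON schema guardrail.

```python
import json
from typing import Callable, Optional

# A guardrail inspects a payload and returns a violation message, or None if it passes.
Guardrail = Callable[[str], Optional[str]]


def max_length(limit: int) -> Guardrail:
    """Content length validation: reject payloads longer than `limit` characters."""
    def check(payload: str) -> Optional[str]:
        return f"payload exceeds {limit} characters" if len(payload) > limit else None
    return check


def require_json_keys(*keys: str) -> Guardrail:
    """Simplified schema check: payload must be a JSON object containing `keys`."""
    def check(payload: str) -> Optional[str]:
        try:
            data = json.loads(payload)
        except json.JSONDecodeError:
            return "payload is not valid JSON"
        if not isinstance(data, dict):
            return "payload is not a JSON object"
        missing = [k for k in keys if k not in data]
        return f"missing keys: {missing}" if missing else None
    return check


def enforce(payload: str,
            global_rails: list[Guardrail],
            resource_rails: list[Guardrail]) -> list[str]:
    """Run global guardrails first, then resource-level ones; collect every violation."""
    results = (rail(payload) for rail in [*global_rails, *resource_rails])
    return [msg for msg in results if msg is not None]
```

An empty list from `enforce` means the payload passed every layer. A non-empty list tells the caller which guardrails were triggered.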

Track usage and costs with AI insights

Understand usage patterns, quota consumption, and LLM costs from a single dashboard. Track total requests, unique consumers, average latency, error rates, token usage, and estimated cost across all providers.

- Requests by provider, traffic share by model, and guardrail trigger analytics

- Token usage over time with prompt vs. completion breakdown

- Error type breakdown and average error rate monitoring

- Estimated cost tracking across LLM endpoints
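
As a rough illustration of how such metrics can be derived from request logs, the sketch below aggregates a few of them. It is not the AI Insights implementation: the `RequestLog` shape is hypothetical, and the prices in `PRICES` are made-up placeholders, not real provider pricing.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class RequestLog:
    """Hypothetical per-request record emitted by a gateway."""
    provider: str
    model: str
    prompt_tokens: int
    completion_tokens: int
    ok: bool


# Placeholder prices in USD per 1,000 tokens: (prompt, completion).
PRICES = {"model-a": (1.0, 2.0), "model-b": (0.5, 1.0)}


def summarize(logs: list[RequestLog]) -> dict:
    """Aggregate requests by provider, token breakdown, estimated cost, and error rate."""
    requests_by_provider = Counter(log.provider for log in logs)
    prompt = sum(log.prompt_tokens for log in logs)
    completion = sum(log.completion_tokens for log in logs)
    cost = sum(
        log.prompt_tokens / 1000 * PRICES[log.model][0]
        + log.completion_tokens / 1000 * PRICES[log.model][1]
        for log in logs
    )
    errors = sum(1 for log in logs if not log.ok)
    return {
        "requests_by_provider": dict(requests_by_provider),
        "prompt_tokens": prompt,
        "completion_tokens": completion,
        "estimated_cost_usd": round(cost, 4),
        "error_rate": errors / len(logs) if logs else 0.0,
    }
```

Prompt and completion tokens are priced separately because providers commonly charge different rates for each, which is why the breakdown matters for cost estimates.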

Benefits at a glance

Focused AI management

A dedicated interface for AI teams, separate from general API management.

Two views, two roles

Admins govern globally. AI developers configure per-app controls.

LLM and MCP in one place

Manage LLM providers, proxies, and MCP servers from a single workspace.

Granular access control

Allow or deny specific endpoints and tools per application or team across LLMs and MCP.

Cost visibility and control

Token, request, and cost caps, backed by usage insights, prevent budget surprises.

Deploy anywhere

Supports three deployment models: SaaS, self-hosted, and hybrid.

WSO2 AI Workspace is your control surface for AI services. Manage LLM providers, configure proxy endpoints, govern MCP servers, apply guardrails, and monitor AI traffic from a single, focused interface.