Ingress NGINX Retirement: Switching to WSO2 Kubernetes Gateway

- Md Abu Sakib

- Consultant, Engineering, WSO2

The most widely deployed Kubernetes Ingress controller, Ingress NGINX, is going to be retired after March 2026. Only a handful of maintainers have been sustaining the project for years. A project of this stature and scope cannot reliably meet the security, scale, and governance requirements of modern applications with so few maintainers. The retirement also reflects the larger shift in the Kubernetes ecosystem toward the modern replacement for Ingress: the Gateway API.

In this post, we will discuss the state of affairs leading to the retirement, and how WSO2 Kubernetes Gateway provides a future-proof alternative, through a standards-first implementation with enterprise-grade API management and AI-aware capabilities.

What is Ingress NGINX?

Ingress NGINX is an open source Kubernetes Ingress controller and the most popular implementation of the Ingress API. It runs as a set of pods in a cluster and acts as an entrypoint for HTTP and HTTPS traffic coming from outside the Kubernetes environment.

Upon creating or updating an Ingress resource, the controller:

- Reconciles the configuration.

- Generates an appropriate NGINX configuration file.

- Applies it to the NGINX instance to route incoming requests to backend services.

It implements several core Ingress API behaviors, like host-based routing, TLS termination, and load balancing.

It also exposes a large set of extended capabilities through annotations to allow URL rewrites, authentication integrations, rate limiting, and so on. Ingress NGINX directly maps these extensions onto NGINX directives; this makes the controller both flexible and tightly coupled to NGINX as its data plane.
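As a rough illustration of the above, an Ingress resource that combines core behaviors (host-based routing, TLS termination) with annotation-based extensions might look like the following sketch. The resource name, host, Secret, and Service are hypothetical placeholders; the two annotations are examples of the controller-specific extensions described above.

```yaml
# Illustrative sketch only: names, host, and Secret are placeholders.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shop
  annotations:
    # Controller-specific extensions, passed as untyped strings
    nginx.ingress.kubernetes.io/rewrite-target: /
    nginx.ingress.kubernetes.io/limit-rps: "10"
spec:
  ingressClassName: nginx
  tls:
    - hosts:
        - shop.example.com
      secretName: shop-tls        # TLS termination
  rules:
    - host: shop.example.com      # host-based routing
      http:
        paths:
          - path: /api
            pathType: Prefix
            backend:
              service:
                name: shop-api
                port:
                  number: 8080
```

Everything under spec belongs to the core Ingress API, while the annotations are only meaningful to the Ingress NGINX controller.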

The reconciliation loop in Ingress NGINX observes changes to Ingress, Service, and Secret resources and regenerates the NGINX configuration accordingly. Thus, the controller adapts to cluster changes without manual effort.

What is the Kubernetes Gateway API?

The Gateway API, as a set of Kubernetes Custom Resource Definitions (CRDs), models L4 and L7 traffic routing in a structured, extensible way. It formally specifies how traffic enters, moves through, and gets exposed from Kubernetes clusters.

The Gateway API, unlike the single-resource Ingress API, organizes traffic management into multiple purpose-built resources. Each resource describes a different layer of responsibility in the networking stack:

- GatewayClass defines the type of load balancer or proxy an implementation supports.

- Gateway, an instantiation of GatewayClass, represents a network endpoint where traffic is received.

- Route resources like HTTPRoute or TCPRoute attach to a Gateway to describe how incoming traffic should be matched and forwarded to backend services.
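The three resource kinds above can be sketched together as follows. This is a minimal example under stated assumptions: the controllerName, resource names, namespace, hostname, and backend Service are hypothetical, and a real implementation documents its own controllerName value.

```yaml
# Illustrative sketch only: all names and the controllerName are placeholders.
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: example-class
spec:
  controllerName: example.com/gateway-controller
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: prod-gateway
  namespace: infra
spec:
  gatewayClassName: example-class   # instantiates the GatewayClass
  listeners:
    - name: http
      protocol: HTTP
      port: 80
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: shop-route
  namespace: infra
spec:
  parentRefs:
    - name: prod-gateway            # attaches the route to the Gateway
  hostnames:
    - shop.example.com
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: /api
      backendRefs:
        - name: shop-api
          port: 8080
```

The route lives in the same namespace as the Gateway here, which keeps the sketch within the default attachment rules.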

The Gateway API also introduces a standard pattern for attaching policies to Gateways or Routes. You can configure how traffic is processed, authenticated, and more, while maintaining separation from the core routing logic. Consequently, implementations can extend functionality without altering core resource definitions.

The reasons behind Ingress NGINX retirement

Let’s summarize the prime concerns behind sunsetting the Ingress controller. In addition to technical challenges, maintenance and sustainability concerns also contributed to the retirement decision.

Flexibility becoming technical debt

Annotations markedly contributed to the early success of Ingress NGINX. Kubernetes annotations extend standard Ingress resources beyond the core Ingress specification, for example, to customize details of traffic management and security.

As production deployments turned more complex, Ingress controllers had to interpret dozens of vendor-specific annotations per resource. This resulted in inconsistent behavior across versions, clusters, and even different maintainers’ usage patterns. These annotations are freeform strings with no strong typing or schema enforcement, so they cannot be validated ahead of time; the controller has to interpret all of them during reconciliation. Consequently, configurations that appeared valid failed in obscure and inconsistent ways.

Security exposure through unbounded configuration

To accommodate advanced use cases, snippets allow arbitrary NGINX configuration at the resource level. While convenient and flexible, snippets created a security model the project could not sustainably regulate. Every snippet is a potential entrypoint for misconfiguration or exploitation; high severity vulnerabilities emerged as a direct repercussion. The “IngressNightmare” vulnerabilities exposed how difficult it had become to sandbox Ingress NGINX’s behavior within a safe abstraction.
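For context, a snippet is typically supplied as an annotation whose value is raw NGINX configuration. The fragment below is a sketch of that pattern using the configuration-snippet annotation; the directive shown is only an example, and whether snippet annotations are accepted at all depends on the controller version and its settings.

```yaml
# Illustrative fragment: the annotation value is injected into the
# generated NGINX configuration as-is, with no schema to validate it.
metadata:
  annotations:
    nginx.ingress.kubernetes.io/configuration-snippet: |
      more_set_headers "Request-Id: $req_id";
```

Because the value is free-form NGINX configuration, anyone who can create or edit the Ingress can influence the shared proxy configuration.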

Maintenance shortages and burnout

The project has relied on one or two maintainers working largely in their spare time. They have been responsible for triaging hundreds of issues, reviewing patches, managing security disclosures, and keeping up with upstream Kubernetes and NGINX changes. The contributor base never grew in proportion to the project’s adoption.

Gateway API as the modern successor to Ingress for Kubernetes networking

The Ingress API is frozen, with the community concentrating all efforts and new features on the Gateway API. The Gateway API brings structure, adaptability, and governance through a model that aligns with Kubernetes principles and supports the requirements of modern applications.

A strongly-typed model

Routing intent, backend references, and policy attachments are all strongly typed in the Gateway API. Kubernetes can validate these fields directly, unlike Ingress annotations. As a result, configurations in the Gateway API are less error-prone, easier to understand, and more portable across implementations.

For example, Kubernetes validates neither the status code nor the URL format in this Ingress:

    metadata:
      annotations:
        nginx.ingress.kubernetes.io/permanent-redirect: "https://www.example.com"
        nginx.ingress.kubernetes.io/permanent-redirect-code: "301"

Kubernetes accepts the resource even if an annotation contains a typo, and the redirect then fails silently.

In the Gateway API, the status code must be an integer and the scheme a string, with supported values for each:

    spec:
      rules:
        - filters:
            - type: RequestRedirect
              requestRedirect:
                scheme: "https"
                hostname: "www.example.com"
                statusCode: 301

Explicit separation of responsibilities

Modern Kubernetes footprints involve varying responsibilities spread across different stakeholders. However, Ingress conflates infrastructure, security, and application concerns into a single resource. So in multi-team clusters, you may inadvertently overwrite another team's routing rule, or embed security-sensitive configuration alongside app-level paths.

The Gateway API provides a role-oriented architecture:

- Infrastructure teams define Gateways and organizational boundaries.

- Application teams create Routes that attach to those Gateways within controlled scopes.

By decomposing responsibilities in a way that parallels real-world operational patterns, the Gateway API provides a safer, more predictable workflow in multi-tenant clusters.
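A minimal sketch of this split, assuming an infra namespace owned by the infrastructure team and a finance namespace owned by an application team (both hypothetical, as are the resource names, the label, and the certificate Secret), could look like this:

```yaml
# Owned by the infrastructure team (illustrative names throughout)
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway
  namespace: infra
spec:
  gatewayClassName: example-class
  listeners:
    - name: https
      protocol: HTTPS
      port: 443
      tls:
        mode: Terminate
        certificateRefs:
          - name: wildcard-tls
      allowedRoutes:
        namespaces:
          from: Selector           # only labeled namespaces may attach routes
          selector:
            matchLabels:
              gateway-access: "true"
---
# Owned by an application team
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: billing-route
  namespace: finance               # must carry the gateway-access label
spec:
  parentRefs:
    - name: shared-gateway
      namespace: infra
  rules:
    - backendRefs:
        - name: billing
          port: 8080
```

The infrastructure team controls listeners, certificates, and which namespaces may attach, while the application team only describes its own routing.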

Rich status model

Ingress provides minimal status feedback, which makes it hard to infer controller behavior and debug a resource. For example:

    status:
      loadBalancer:
        ingress:
          - ip: 203.0.113.1

It gives no indication of, for example, a missing backend.

The Gateway API explicitly writes success and failure conditions to the resources:

    status:
      parents:
        - parentRef:
            name: prod-gateway
          conditions:
            - type: ResolvedRefs
              status: "False"
              reason: BackendNotFound
              message: "Service 'billing-v2' not found in namespace 'finance'."

Modern routing semantics

Ingress’s intentionally narrow scope does not leave much room for advanced traffic management without annotations or vendor extensions. The Gateway API natively supports features like multiple listeners per Gateway resource, traffic splitting, cross-namespace routing, and more expressive matching rules. These abilities map to real-world architectures instead of forcing controllers to patch around a minimalist API like Ingress.
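As a sketch of traffic splitting and richer matching in a single HTTPRoute, the example below sends requests that carry a particular header to a new backend version and splits the remaining traffic by weight. The route, Gateway, Service names, and the header are hypothetical placeholders.

```yaml
# Illustrative sketch only: names and the header are placeholders.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: checkout-canary
spec:
  parentRefs:
    - name: prod-gateway
  rules:
    # Requests that opt in through a header always reach the new version
    - matches:
        - path:
            type: PathPrefix
            value: /checkout
          headers:
            - name: x-canary
              value: "true"
      backendRefs:
        - name: checkout-v2
          port: 8080
    # All other requests are split by weight between the two versions
    - matches:
        - path:
            type: PathPrefix
            value: /checkout
      backendRefs:
        - name: checkout-v1
          port: 8080
          weight: 90
        - name: checkout-v2
          port: 8080
          weight: 10
```

Both the header match and the weights are typed fields of the route, so no controller-specific annotations are involved.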

A sustainable, extensible foundation for the ecosystem

Ingress’s shortcomings left controllers responsible for resolving gaps the API couldn’t model. This led to convoluted codebases, divergent behaviors, and mounting technical debt. The Gateway API makes the API itself extensible and forward-compatible at the specification level; the spec evolves through community consensus instead of relying on annotations or ad-hoc behaviors. This reduces long-term maintenance burdens and paves the way for a healthier, more sustainable foundation for both open-source and vendor implementations.

Why switch to WSO2 Kubernetes Gateway?

WSO2 Kubernetes Gateway builds on the Gateway API’s approach to modern L7 traffic operations, runs at the edge, and extends beyond a gateway into full lifecycle API management. So if you are moving away from an Ingress controller, WSO2 Kubernetes Gateway offers a way to align ingress, governance, and AI traffic under a single, coherent platform that is fully open source.

Built on the Gateway API with the Envoy Proxy Data Plane

WSO2 Kubernetes Gateway provides extensive support for the Kubernetes Gateway API specification. It employs Envoy Proxy as the data plane; its xDS-based configuration allows configuration changes in near-real time, without the full reloads that characterize NGINX-based controllers. So you have a separation between a declarative Kubernetes control plane and a dynamically configurable Envoy Proxy data plane; you can adapt routing, TLS, and policies at runtime without interrupting in-flight connections.

WSO2 Kubernetes Gateway exposes routing logic, backends, and policies through CRDs. The control plane translates these resources through the xDS protocol into Envoy listeners, routes, and filters. Tying directly into the specification work the community is already standardizing, WSO2 Kubernetes Gateway avoids yet another proprietary configuration layer for teams to learn.

API management

WSO2 Kubernetes Gateway treats each route as an API entrypoint with lifecycle, consumers, and policies. It integrates with the broader WSO2 API platform; so the same gateway that terminates TLS and routes traffic also performs the following for specific APIs and applications:

- Authentication

- Token validation

- Subscription to APIs

- Rate limiting

- Emitting analytics events

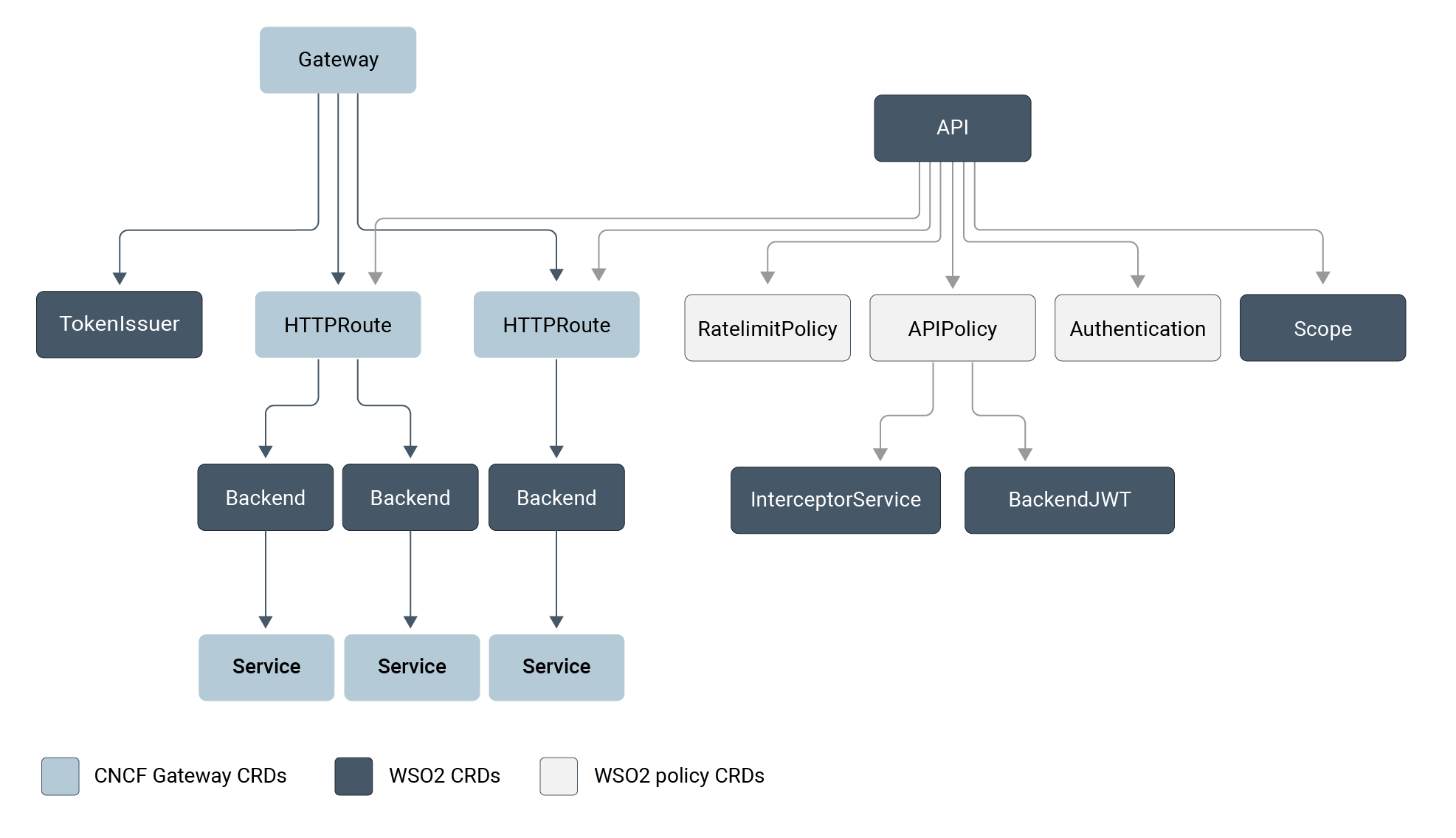

The CRDs within WSO2 Kubernetes Gateway, in addition to the Gateway API’s routing objects, describe APIs, backends, rate limits, and subscriptions declaratively.

Figure 1: Custom Resource Definitions (CRDs) in WSO2 Kubernetes Gateway ensure compatibility with Kubernetes environments and provide a standardized means to extend and configure Gateway API objects.

Platform teams can define shared policies for authentication or throttling that apply across many APIs. Product or domain teams can still own the details of their routes. The resultant Ingress layer doubles as an API gateway, doing away with the topology where traffic flows from an Ingress controller into a separate API gateway for enforcement.

Some of the major gateway-specific features include:

- Deploying APIs using Kubernetes Custom Resources (CRs)

- Developing and deploying APIs using a set of REST APIs and tools

- Weighted routing for traffic distribution across multiple backends

Security and governance as first-class configuration

WSO2 Kubernetes Gateway assumes that every external entrypoint will comply with security and governance requirements. So it provides built-in mechanisms for authentication, authorization, and traffic control as part of the gateway configuration. Some typical patterns include:

- OAuth2 and JWT validation

- API keys

- Mutual TLS to backends

- CORS handling

You can configure each through Kubernetes resources instead of per-application code.

Governance is not limited to security checks. You can attach policies at different levels, including resource and API levels; this way, global invariants coexist with service-specific rules. For example, the following defines an operation-level rate limit policy that applies to GET requests on the /uuid resource:

    name: "Sample API"
    basePath: "/sample-api"
    version: "0.1.0"
    type: "REST"
    defaultVersion: false
    endpointConfigurations:
      production:
        - endpoint: "https://dev-tools.wso2.com/gs/helpers/v1.0"
    operations:
      - target: "/uuid"
        verb: "GET"
        secured: true
        scopes: []
        rateLimit:
          requestsPerUnit: 10
          unit: "Minute"

Egress management for LLM and AI APIs

WSO2 Kubernetes Gateway treats LLM and AI traffic as a first-class use case. Its egress API management capabilities have been designed specifically for applications that call external AI providers like OpenAI, Azure AI, and Mistral, as well as custom AI providers. The gateway centralizes concerns like credentials, rate limits, and provider-specific quirks so that each application does not have to handle them.

In practical terms, this means you can perform the following without modifying individual services:

- Declare outbound AI endpoints

- Attach backend-level rate limits

- Get analytics and observability into AI usage

This integration is rooted in the same policy and analytics stack used for inbound APIs. So platform teams get a unified view of both sides of AI-heavy architectures:

- The APIs they expose

- The AI services those APIs consume

Such control and visibility give organizations experimenting with agentic workloads or multi-provider LLM strategies a firm handle on their operations.

Observability and analytics through the control plane

WSO2 Kubernetes Gateway’s analytics and observability stack gives you insight into your API products. You can capture detailed analytics through Choreo, ELK, and Moesif. For observability and monitoring, you can configure logs, distributed tracing, and Prometheus metrics. Because the observability and analytics data model is API-aware, the insights are actionable: for example, you can see which teams consume the most tokens from a specific provider.

This complements the Gateway API status reporting we have already discussed. Gateway API statuses tell you whether a configuration has been accepted and programmed; analytics illustrate how that configuration behaves under real traffic. In tandem, you get a feedback loop where you can see both whether a route is valid and whether it is delivering the expected performance and usage patterns.

Operational fit for enterprise Kubernetes platforms and SREs

WSO2 Kubernetes Gateway has been engineered for clusters running multiple teams, environments, and regions, keeping high-level platform requirements adjacent to day-to-day SRE operations.

WSO2 Kubernetes Gateway's Envoy-based data plane allows for dynamic, zero-downtime updates. Combined with the way the Gateway API separates resources, WSO2 Kubernetes Gateway contains the impact of developer changes and prevents a single misconfiguration from destabilizing the entire platform. SREs gain deep observability and native traffic splitting capabilities (for canary and blue-green deployment strategies) without complex third-party add-ons.

The data plane scales horizontally with standard Kubernetes primitives. You can deploy and run WSO2 Kubernetes Gateway on any Kubernetes-based infrastructure, from on-premises to self-managed, across different clusters and environments, satisfying isolation, compliance, and multi-region requirements. Since the platform is open source with enterprise support, you can choose between community adoption or enterprise subscriptions that include SLAs, long-term support, and more.

Wrapping up

The Gateway API solves the structural problems of the Ingress era; modern gateways implementing the Gateway API offer benefits that materially improve developer experience, operational safety, and resilience in your infrastructure. WSO2 Kubernetes Gateway makes those advantages readily available in a production-ready platform for routing, governance, and AI egress.