What is an AI Gateway? Key Benefits and Examples

- Derric Gilling

- Vice President & General Manager - API Platform BU, WSO2

- Matt Tanner

- Senior Director, Product Marketing - API Platform, WSO2

Applications and systems using AI have exploded in popularity, with every company looking to integrate AI anywhere they can. This move toward AI-assisted and AI-powered products appears to be the future. Early adoption is great, but gaps form quickly at scale.

For example, in 2023 OWASP began to publish the OWASP Top 10 for LLM Applications (updated again in 2025), which outlined ten common security flaws found in LLM-based applications. Remediating these flaws can be outside the scope of typical application developers integrating LLMs. To manage the security, governance, and operational hurdles arising from AI proliferation, the industry has adopted a core architectural pattern: the AI gateway.

Rather than leaving developers and teams to fend for themselves with homegrown solutions, AI gateways provide out of the box what teams need to build LLM-based applications and AI endpoints effectively. For most organizations using AI within their products or selling AI-based services, an AI gateway can be a massive help.

In this blog, we will look at the basics of AI gateways, how you can get started using one, best practices, and much more.

Introduction to AI gateways

AI gateways operate similarly to API gateways. In fact, many of the best solutions, like the WSO2 AI Gateway, are built on top of API gateway technology and extended to meet the needs of this newer technology.

Many of the ways that API gateways secure and manage traditional APIs also apply to AI APIs. However, AI APIs are more dynamic, going beyond the well-defined, predictable request and response of a typical API. This introduces unique concerns:

- How do you control the cost of a request in terms of tokens (and the resulting internal cost)?

- How do you keep an LLM from responding with sensitive data that a user should not have access to?

- How do you stop a malicious prompt designed to divulge an LLM’s system prompt?

These are the types of challenges that AI gateways aim to solve.

Defining an AI gateway

AI gateways are middleware platforms that integrate, deploy, and manage AI models and services in enterprises. They provide a single place for teams to seamlessly integrate multiple AI models and services.

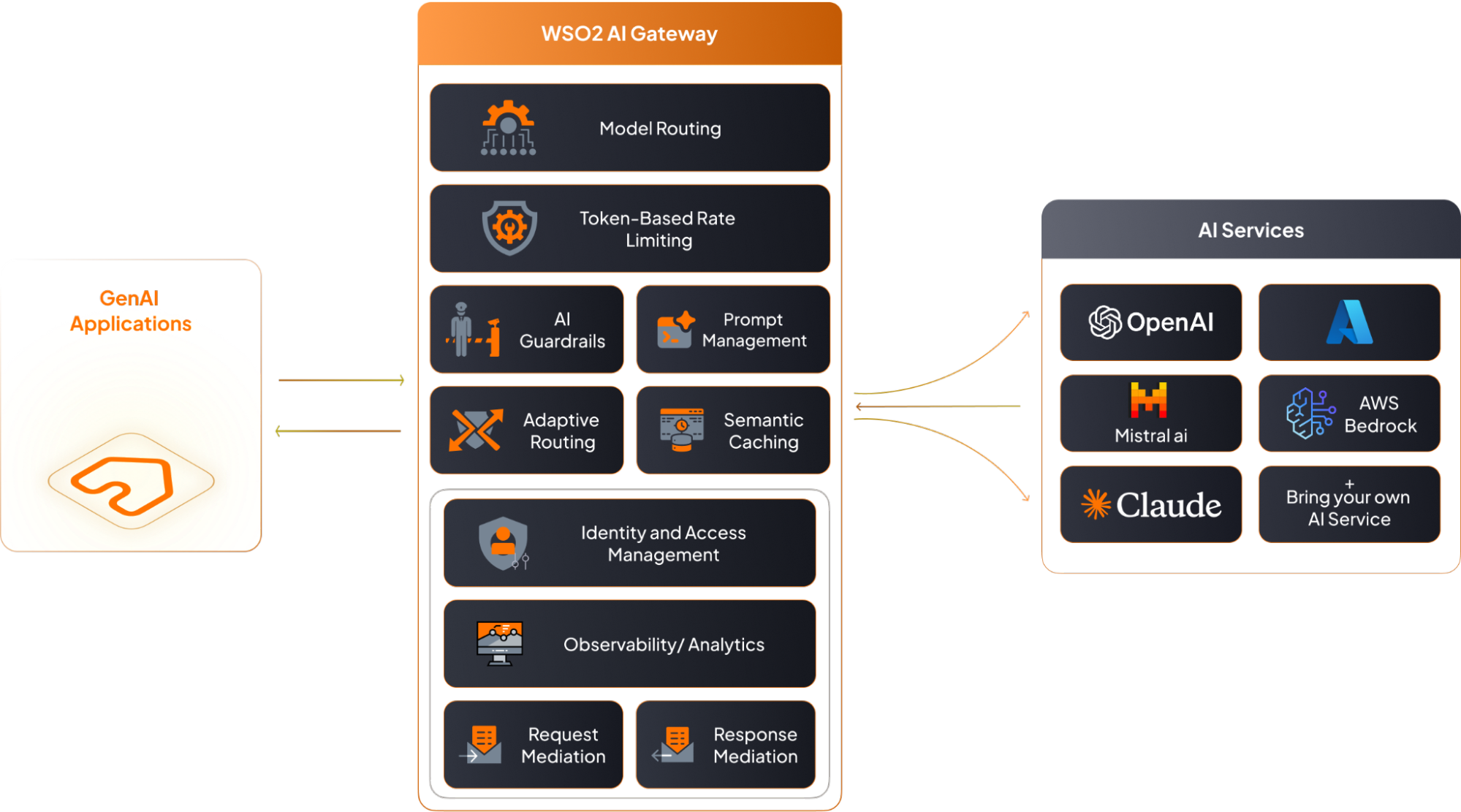

Figure 1: AI architecture that includes WSO2’s AI Gateway

By centralizing AI model interactions, an AI gateway supports:

- Enforcing governance and security policies across AI systems.

- Securing data flow between backend services and end-user applications.

- Creating a unified control plane that automates resource management and cost control.

AI gateway vs. API gateway

It is important to distinguish AI gateways from API gateways. As mentioned above, much of the core functionality is needed by both platforms, and many AI gateways are AI-specific functionality layered onto existing API gateway technology. While both technologies are essential, they differ in their primary function and core focus:

| Gateway Type | Primary Function | Core Focus |
| --- | --- | --- |
| API Gateway | Single entry point for client requests, managing routing, rate limiting, authentication, and load balancing for standard backend services. | Traditional RESTful API management and security. |
| AI Gateway | Extends API management capabilities to specialize in deploying, integrating, and orchestrating AI models and services, focusing on AI deployments and resource automation. | Operationalizing and integrating AI models, ensuring accuracy, performance, and enterprise-grade governance for AI. |

Practical applications of AI gateways

AI gateways are used across industries to streamline AI adoption and integration for AI deployments and workloads. Imagine a developer creating a chatbot backed by an LLM. Directly connecting the UI to a backend AI service (like an OpenAI chat endpoint) can create security and cost risks.

Instead, the AI gateway sits between the application and the model (which you can see clearly in Figure 1 above).

- The GenAI application connects to the AI gateway.

- The AI gateway acts as the intermediary, accessing the underlying LLM for the user.

- The gateway enforces configured policies and optimizations, such as cost control, prompt guardrails, and semantic caching.

This enables organizations to build AI-powered applications with enhanced security and compliance, managing access and request routing via a single entry point. The result is an AI system focused on optimal performance, security, and request throttling.
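The request flow described above can be sketched as a small Python gateway that runs a chain of policies before forwarding a prompt to the model. This is a minimal illustration under stated assumptions, not how the WSO2 AI Gateway is implemented: the `GatewayRequest` shape, the keyword-based guardrail, the roughly-4-characters-per-token estimate, and the `call_llm` callable are all hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class GatewayRequest:
    """A chat request as the gateway sees it (hypothetical shape)."""
    app_id: str
    prompt: str
    metadata: Dict[str, int] = field(default_factory=dict)


class PolicyViolation(Exception):
    """Raised when a policy rejects a request before it reaches the LLM."""


# A policy inspects (and may annotate) a request, or raises PolicyViolation.
Policy = Callable[[GatewayRequest], GatewayRequest]


def prompt_guardrail(req: GatewayRequest) -> GatewayRequest:
    # Naive keyword check for illustration; real guardrails use trained classifiers.
    if "ignore previous instructions" in req.prompt.lower():
        raise PolicyViolation("prompt rejected by guardrail")
    return req


def token_budget(max_tokens: int) -> Policy:
    def policy(req: GatewayRequest) -> GatewayRequest:
        # Rough heuristic (assumption): about 4 characters per token in English text.
        estimated = max(1, len(req.prompt) // 4)
        if estimated > max_tokens:
            raise PolicyViolation(f"~{estimated} tokens exceeds budget of {max_tokens}")
        req.metadata["estimated_tokens"] = estimated
        return req
    return policy


class AIGateway:
    def __init__(self, policies: List[Policy], call_llm: Callable[[str], str]):
        self.policies = policies
        # Upstream provider call; provider credentials would live here, not in the client app.
        self.call_llm = call_llm
        self.cache: Dict[str, str] = {}

    def handle(self, req: GatewayRequest) -> str:
        for policy in self.policies:
            req = policy(req)  # any policy can reject the request by raising
        # Exact-match cache for brevity; a semantic cache would also match similar prompts.
        if req.prompt in self.cache:
            return self.cache[req.prompt]
        response = self.call_llm(req.prompt)
        self.cache[req.prompt] = response
        return response


# Usage with a stub in place of a real LLM provider.
gateway = AIGateway([prompt_guardrail, token_budget(500)], lambda p: f"(model reply to: {p})")
print(gateway.handle(GatewayRequest("chatbot", "What is an AI gateway?")))
```

Because the application only ever talks to `handle`, policies can be added or changed centrally without touching application code.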

Benefits of an AI gateway

The largest pain points in deploying production-ready AI revolve around security, compliance, and cost management. AI gateways specifically expedite rolling out AI at enterprise scale in these three areas.

Security and compliance

Security and compliance for LLMs and AI interactions are complex. With the Model Context Protocol (MCP), retrieval-augmented generation (RAG), and the ability of AI platforms to connect to outside data sources for context (some with direct access to sensitive internal information), security becomes very nuanced. AI gateways provide centralized access controls and automated security policies for AI data traffic, helping ensure regulatory compliance and a consistent security posture. Specific benefits that AI gateways provide include:

- Access control: They secure AI backends by managing API keys and monitoring network activity, restricting access and enforcing strong encryption protocols to protect sensitive data.

- Guardrails: AI gateways detect and block malicious activity via AI guardrails. This includes anomaly detection and deep packet inspection to provide enhanced security.

- Data scrubbing: They help maintain regulatory compliance by scrubbing sensitive data such as personally identifiable information (PII) and filtering content before AI processing, with support for data masking and virtual keys.
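A minimal sketch of the data-scrubbing step, assuming simple regular expressions are enough to catch a few PII formats (email addresses, US-style phone numbers, and US Social Security numbers). The patterns and `[LABEL]` placeholders are illustrative assumptions; production gateways typically rely on far more robust PII detection.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; real PII detection needs much broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_pii(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace each PII match with a typed placeholder and count what was masked."""
    counts: Dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


masked, found = mask_pii("Email jane.doe@example.com or call 555-123-4567 about SSN 123-45-6789.")
print(masked)  # Email [EMAIL] or call [PHONE] about SSN [SSN].
print(found)   # {'EMAIL': 1, 'PHONE': 1, 'SSN': 1}
```

In a gateway, a function like this could run on prompts before they are forwarded and on responses before they are returned, with the counts feeding audit logs.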

Cost management and optimization

AI gateways help organizations optimize AI costs by managing token usage and API access. Without an AI gateway, this is particularly hard, since AI APIs do not fit neatly into the traditional model of a fixed cost per API call and request-based rate limiting.
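To make token-based cost tracking concrete, here is a sketch that prices each request from its reported input and output token counts and totals spend per provider and model, similar in spirit to the spend view in Figure 2. The provider and model names and the per-million-token prices are hypothetical; real prices differ by provider and change often.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical prices in USD per 1M tokens as (input, output); not real provider pricing.
PRICES_PER_MILLION: Dict[str, Tuple[float, float]] = {
    "model-small": (0.50, 1.50),
    "model-large": (5.00, 15.00),
}


@dataclass
class UsageRecord:
    provider: str
    model: str
    input_tokens: int
    output_tokens: int


def request_cost(rec: UsageRecord) -> float:
    """Cost = (input_tokens * input_price + output_tokens * output_price) / 1,000,000."""
    in_price, out_price = PRICES_PER_MILLION[rec.model]
    return (rec.input_tokens * in_price + rec.output_tokens * out_price) / 1_000_000


def spend_by_model(records: List[UsageRecord]) -> Dict[Tuple[str, str], float]:
    """Total spend per (provider, model), regardless of how many calls were made."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for rec in records:
        totals[(rec.provider, rec.model)] += request_cost(rec)
    return dict(totals)


records = [
    UsageRecord("provider-a", "model-large", input_tokens=1_000, output_tokens=500),
    UsageRecord("provider-b", "model-small", input_tokens=200, output_tokens=100),
    UsageRecord("provider-b", "model-small", input_tokens=200, output_tokens=100),
]
for (provider, model), cost in spend_by_model(records).items():
    print(f"{provider}/{model}: ${cost:.6f}")
```

With these assumed prices, the single `model-large` call costs (1,000 × 5 + 500 × 15) / 1,000,000 = $0.0125, while the two `model-small` calls together cost $0.0005. Counting calls alone would suggest the opposite.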

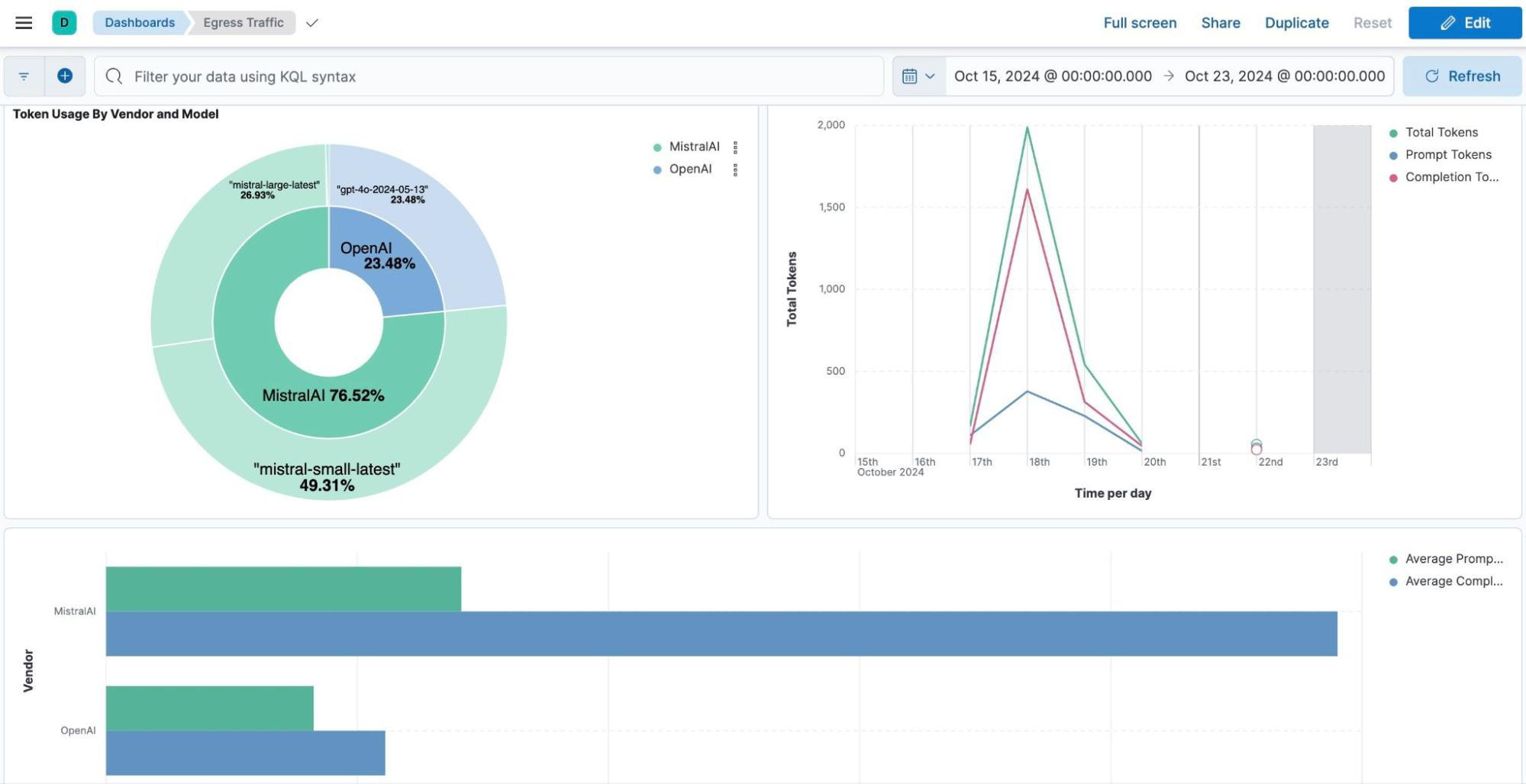

Figure 2: WSO2 dashboard showing AI provider and model spend

Adding an AI gateway enables organizations to monitor usage and detect anomalies, optimizing AI performance and security. WSO2’s rate limiting capabilities, for example, allow you to limit consumption based on token counts rather than just request volume. This matters because token usage reflects actual AI consumption far better than call counts: a single request with a long prompt and response can use more tokens than many short ones. AI gateways also provide detailed logs and metrics reporting, which can help organizations optimize costs more accurately.
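As a sketch of limiting by tokens rather than requests, the limiter below is a classic token bucket whose units are LLM tokens, tracked per consumer. It is a generic illustration, not WSO2’s rate-limiting implementation; the capacity, refill rate, consumer names, and the injectable `clock` parameter are assumptions made for the example.

```python
import time
from typing import Callable, Dict, Tuple


class TokenRateLimiter:
    """Token bucket per consumer, where the bucket holds LLM tokens, not request slots."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = float(capacity)
        self.refill_per_second = refill_per_second
        self.clock = clock
        # consumer -> (available LLM tokens, timestamp of last update)
        self.buckets: Dict[str, Tuple[float, float]] = {}

    def try_consume(self, consumer: str, llm_tokens: int) -> bool:
        """Deduct llm_tokens from the consumer's budget if enough remain."""
        now = self.clock()
        available, last = self.buckets.get(consumer, (self.capacity, now))
        # Refill in proportion to elapsed time, never beyond capacity.
        available = min(self.capacity, available + (now - last) * self.refill_per_second)
        if llm_tokens > available:
            self.buckets[consumer] = (available, now)
            return False
        self.buckets[consumer] = (available - llm_tokens, now)
        return True


# 10,000 tokens per consumer, refilling at about 167 tokens/second (10,000 per minute).
limiter = TokenRateLimiter(capacity=10_000, refill_per_second=10_000 / 60)
print(limiter.try_consume("team-a", 8_000))  # True: within budget
print(limiter.try_consume("team-a", 3_000))  # False: only about 2,000 tokens left
```

Under a request-based limit, these two calls would count the same as two one-word prompts. A production gateway might also reconcile the charge against the actual token usage the provider reports once the response arrives; that step is omitted here.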

Business benefits of AI gateways

Although AI gateways offer plenty of technical benefits, implementing one also delivers measurable strategic value for business leaders supporting AI adoption and innovation. For the business, AI gateways address three main areas of concern:

- Risk management: AI gateways help manage many of the risks of AI usage and adoption, allowing teams to unlock AI-driven innovation while making sure that enhanced security and compliance are in place.

- Governance: They support the compliance and governance that are critical to enterprise AI adoption. Most AI gateways provide the role-based access control and security enforcement required at the governance level.

- Acceleration: AI gateways enable organizations to accelerate AI adoption with proper observability, security, and governance. Developers can worry less about the details, since the AI gateway can handle many of the concerns, and businesses can focus on growth and optimization of AI-enabled products and services.

Advanced capabilities of AI gateways

Enterprise rollouts of AI require more resilience and capability than a basic AI-backed chat interface. For more advanced use cases, AI gateways provide features like semantic caching, model failover, prompt management, and data masking to optimize AI performance and security. Three of the most widely used advanced capabilities are:

- Semantic caching: Unlike standard caching, semantic caching reduces latency and cost by serving cached responses to semantically similar questions, rather than requiring exact text matches.

- Prompt management: You can manage system prompts and templates outside of application code and use prompt decorators to modify requests on the fly.

- Multi-model routing: They offer customizable load balancing and multi-model routing, allowing you to distribute traffic across models or set up failover logic to ensure high availability if a provider goes down.
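The sketch below combines two of these capabilities: a semantic cache lookup followed by failover across providers in priority order. The `embed` function is a deliberately crude stand-in (a bag-of-words vector compared with cosine similarity) that only captures word overlap; a real semantic cache would use an embedding model. The 0.8 similarity threshold and the provider names are assumptions.

```python
import math
from collections import Counter
from typing import Callable, List, Optional, Tuple

Provider = Tuple[str, Callable[[str], str]]


def embed(text: str) -> Counter:
    # Crude stand-in for an embedding model (assumption): a bag-of-words vector.
    return Counter(text.lower().replace("?", "").split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Returns a cached response when a new prompt is similar enough to a past one."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries: List[Tuple[Counter, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


def route_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # e.g. a timeout or an error response from the provider
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def cached_completion(prompt: str, cache: SemanticCache, providers: List[Provider]) -> str:
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    _, response = route_with_failover(prompt, providers)
    cache.put(prompt, response)
    return response


def primary(prompt: str) -> str:
    raise TimeoutError("primary provider timed out")  # simulate an outage


def secondary(prompt: str) -> str:
    return "An AI gateway sits between apps and LLM providers."


cache = SemanticCache(threshold=0.8)
providers = [("primary", primary), ("secondary", secondary)]
print(cached_completion("What is an AI gateway?", cache, providers))          # served by secondary
print(cached_completion("what is an AI gateway exactly?", cache, providers))  # cache hit
```

The second prompt is not an exact match, but it shares enough words with the first that the cache answers without calling any provider.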

Conclusion

If you're using AI within your applications, an AI gateway is essential. For enterprise AI adoption, it provides enhanced security, compliance, and governance specific to AI deployments and workloads. Just as you wouldn't run APIs without proper API management in place, you shouldn't run AI workloads without an AI gateway.

With platforms like WSO2's AI Gateway, AI in the enterprise doesn't need to be complex to scale, secure, and manage. Get started today by checking out our AI gateway docs or chatting with our team about how an AI gateway can help with your AI efforts.