What is an API Gateway? Fundamentals, Benefits, and Future Trends

- Matt Tanner

- Senior Director, Product Marketing - API Platform, WSO2

If you're working with APIs, chances are that the term "API gateway" has popped up in a conversation or web search at least once. Although API gateways are not new, many developers who are new to creating APIs (or deploying them into a production environment) haven't heard of them or don't fully understand what the technology does. In the simplest terms, an API gateway is a single entry point where API traffic comes in and is then proxied to your actual APIs. The gateway handles the nuances around securing and scaling APIs.

In this article, we will look at all of the details regarding API gateways, including how API gateways work, how they fit into your current architecture, key features, and much more. Let's begin by elaborating further on exactly what an API gateway is.

What is an API gateway?

An API gateway is a core piece of creating production-ready APIs. In a typical setup, you would have a front-end, which could be a web app, mobile app, desktop app, etc., and this front-end would call your APIs directly for basic CRUD (create, read, update, and delete) functionality as well as more advanced operations, like processing a checkout transaction.

Now, when we work in development, especially in the early phases, we may call our API directly from the front-end. But when we start to transition toward making it production-ready, we need to think about other things, such as:

- "How do I make sure that my API is secure and can only be accessed by authorized users?"

- "How can I prevent users from abusing the API by sending too many API requests too quickly?"

- "How do I make sure that my APIs can handle traffic at scale?"

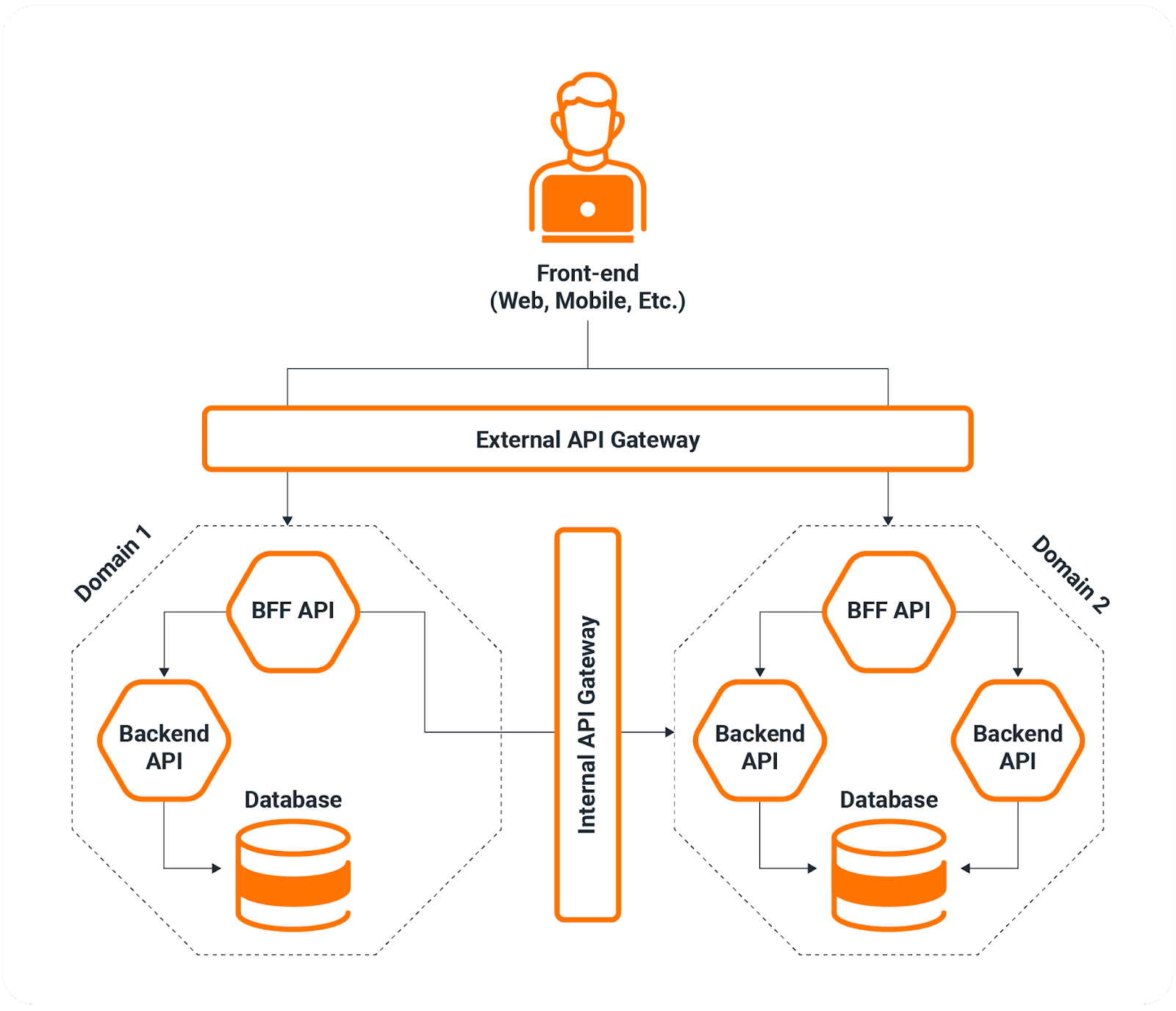

Of course, these are three out of hundreds, even thousands, of questions you may ask as you make the move to production. The answer to all of the above questions and more is an API gateway. The API gateway acts as a single point of entry from your front-end to all of your backend services, handling everything to do with security and scalability for the APIs. In the diagram below, you can see a simple breakdown of where the API gateway fits into the equation:

Figure 1: API gateway architecture overview

As you can see, traffic is routed through the gateway. The gateway then applies security mechanisms such as rate limiting and quotas, creates and manages API keys for authentication and authorization, and can also help with traffic management and load balancing to ensure that APIs remain performant.

How do API gateways work?

We already know that API gateways act as the single entry point for our API services, so let's dive deeper into the technical mechanics of how they actually operate.

At a technical level, an API gateway functions as a reverse proxy that sits between clients and your backend services. When a request comes in, the gateway intercepts it and performs a series of operations before routing it to the appropriate backend service. This process typically happens in milliseconds, but involves several critical steps.

Request processing and routing

When a client makes an API call, the gateway first validates the incoming request. This includes checking the request format, verifying the API endpoint exists, and ensuring the request method (GET, POST, PUT, DELETE, etc.) is allowed. The gateway then uses routing rules to determine which backend service should handle the request. These routing rules can be based on various factors like URL path, HTTP headers, query parameters, or even the content of the request body.

For example, a request to /api/v1/users might be routed to your user management microservice, while /api/v1/orders gets routed to your order processing service. This intelligent routing allows you to maintain a clean, consistent API structure for your clients even if your backend is composed of dozens of different APIs and microservices.
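To make the routing step concrete, here is a minimal sketch of prefix-based routing in Python. The routing table, the backend hostnames, and the `resolve_backend` helper are all hypothetical names chosen for illustration; real gateways usually express the same idea as declarative route configuration rather than code.

```python
from typing import Dict, Optional

# Hypothetical routing table: path prefix -> backend base URL.
ROUTES: Dict[str, str] = {
    "/api/v1/users": "http://user-service.internal:8080",
    "/api/v1/orders": "http://order-service.internal:8080",
}


def resolve_backend(path: str, routes: Dict[str, str] = ROUTES) -> Optional[str]:
    """Return the backend for the longest matching path prefix, or None."""
    matches = [
        prefix
        for prefix in routes
        # Match the prefix itself or anything below it, but not "/api/v1/usersfoo".
        if path == prefix or path.startswith(prefix + "/")
    ]
    if not matches:
        return None  # a real gateway would typically answer 404 here
    return routes[max(matches, key=len)]
```

With this table, `resolve_backend("/api/v1/users/42")` resolves to the user service, while an unknown path such as `/health` resolves to `None`. Picking the longest matching prefix keeps the behavior predictable if a more specific route is added later.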

Security implementation

Security is where API gateways do much of the heavy lifting for developers and security teams. Rather than implementing authentication and authorization logic in every single microservice, the gateway handles this access control centrally. This is far more maintainable and secure than duplicating security code across multiple services.

Most gateways support multiple authentication methods, including API keys, OAuth 2.0, JSON Web Tokens (JWTs), and mutual TLS. When a request arrives, the gateway validates the credentials before the request ever reaches your backend services. If the credentials are invalid or missing, the request is rejected immediately, protecting your backend from unauthorized access.

But authentication, which establishes who you are, is only part of the security story. The gateway also enforces authorization policies, which determine what you're allowed to do. For instance, a basic user might only have read access to certain endpoints, while an admin user has full CRUD permissions. The gateway checks these permissions before routing API requests to the appropriate service.
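The two checks can be sketched in a few lines of Python. This is a simplified illustration that assumes API-key authentication and a role-to-method permission table; the keys, roles, and the `check_access` function are hypothetical, and a production gateway would load credentials and policies from a secure store rather than hard-coding them.

```python
from typing import Dict, Optional, Set, Tuple

# Hypothetical credential store: API key -> role.
API_KEYS: Dict[str, str] = {"key-basic-123": "basic", "key-admin-456": "admin"}

# Hypothetical policy: role -> HTTP methods that role may use.
PERMISSIONS: Dict[str, Set[str]] = {
    "basic": {"GET"},
    "admin": {"GET", "POST", "PUT", "DELETE"},
}


def check_access(api_key: Optional[str], method: str) -> Tuple[int, str]:
    """Return (status_code, reason) for a request before it is routed."""
    role = API_KEYS.get(api_key) if api_key else None
    if role is None:
        # Authentication failed: we do not know who the caller is.
        return 401, "missing or invalid API key"
    if method.upper() not in PERMISSIONS.get(role, set()):
        # Authorization failed: we know the caller, but the action is not allowed.
        return 403, "role not allowed to use this method"
    return 200, "ok"
```

Here a basic key can issue a `GET` but receives a 403 for a `DELETE`, and a request with no key is rejected with a 401 before any backend is contacted.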

Traffic management and rate limiting

One of the most critical functions of an API gateway is protecting your backend services from being overwhelmed by too much traffic, whether that traffic is legitimate or malicious. This is accomplished through rate limiting, quotas, and throttling policies.

Rate limiting controls how many API requests a client can make within a specific time window. For example, you might allow 100 requests per minute from a single API key. If a client exceeds this limit, the gateway returns a 429 (Too Many Requests) response without involving your backend services. This prevents a single client from monopolizing your API resources and helps protect against denial-of-service attacks. Quotas work in a similar way but generally look at usage over a longer time window, such as allowing a user 1,000 requests per month.
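As a rough illustration, the 100-requests-per-minute example can be implemented with a fixed-window counter. This is only one of several common approaches (sliding windows and token buckets are others), and the class name and in-memory dictionary are assumptions made for the sketch; a multi-instance gateway would normally keep these counters in a shared store.

```python
import time
from typing import Dict, Optional, Tuple


class FixedWindowRateLimiter:
    """Allow at most `limit` requests per client in each `window_seconds` window."""

    def __init__(self, limit: int = 100, window_seconds: int = 60) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        # client id -> (window index, requests seen in that window)
        self._counters: Dict[str, Tuple[int, int]] = {}

    def check(self, client_id: str, now: Optional[float] = None) -> int:
        """Return 200 if the request may proceed, 429 if the limit is exceeded."""
        now = time.time() if now is None else now
        window = int(now // self.window_seconds)
        seen_window, count = self._counters.get(client_id, (window, 0))
        if seen_window != window:
            count = 0  # a new window has started, so the counter resets
        if count >= self.limit:
            return 429  # Too Many Requests; the backend is never called
        self._counters[client_id] = (window, count + 1)
        return 200
```

A quota can reuse the same structure with a much longer window, for example `FixedWindowRateLimiter(limit=1000, window_seconds=30 * 24 * 3600)` as an approximation of 1,000 requests per month.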

Throttling is related but distinct: it controls the rate at which API requests are processed rather than simply counting them. If your backend can only handle 1,000 requests per second across all clients, the gateway can queue or reject additional API requests to prevent overload. These mechanisms create the foundation for most of the traffic management that the gateway performs.
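One common way to implement this kind of throttling is a token bucket, sketched below. The `TokenBucket` class is a hypothetical, single-process illustration that rejects excess requests; a gateway could just as well queue them, and the specific algorithm varies by product.

```python
import time
from typing import Optional


class TokenBucket:
    """Admit requests at a steady `rate_per_second`, with bursts up to `capacity`."""

    def __init__(
        self, rate_per_second: float, capacity: float, now: Optional[float] = None
    ) -> None:
        self.rate = rate_per_second
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.updated = time.monotonic() if now is None else now

    def try_acquire(self, now: Optional[float] = None) -> bool:
        """Consume one token if available; return False if the request must wait."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill in proportion to the time elapsed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

For the example above, a single shared `TokenBucket(rate_per_second=1000, capacity=1000)` in front of the backend would cap sustained throughput at roughly 1,000 requests per second regardless of which client sends them.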

Load balancing and failover

API gateways also play a crucial role in distributing traffic across multiple instances of your backend services. If you're running three instances of your user service for redundancy and scalability, the gateway can distribute incoming client requests across all three using various load balancing algorithms like round-robin, least connections, or weighted distribution.

Additionally, the gateway monitors the health of your backend services. If one instance becomes unresponsive or starts returning errors, the gateway can automatically stop routing requests to that instance and redirect them to healthy ones. This failover capability is essential for maintaining high availability of API services.
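Round-robin distribution combined with health-based failover can be sketched as follows. The `RoundRobinBalancer` class and its instance names are hypothetical; in practice the health state would be updated by periodic health checks or by observing failed responses, which this sketch leaves out.

```python
from typing import List, Set


class RoundRobinBalancer:
    """Cycle through backend instances, skipping any marked unhealthy."""

    def __init__(self, instances: List[str]) -> None:
        if not instances:
            raise ValueError("at least one instance is required")
        self.instances = list(instances)
        self.unhealthy: Set[str] = set()
        self._next = 0

    def mark_unhealthy(self, instance: str) -> None:
        self.unhealthy.add(instance)

    def mark_healthy(self, instance: str) -> None:
        self.unhealthy.discard(instance)

    def pick(self) -> str:
        """Return the next healthy instance in rotation."""
        # Try each instance at most once per call so we never loop forever.
        for _ in range(len(self.instances)):
            candidate = self.instances[self._next]
            self._next = (self._next + 1) % len(self.instances)
            if candidate not in self.unhealthy:
                return candidate
        raise RuntimeError("no healthy instances available")
```

With three instances, requests rotate across all of them; once one is marked unhealthy, it is skipped until `mark_healthy` is called for it again.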

Response processing

The gateway's job doesn't end once it forwards a request to your backend. It also processes the response before sending it back to the client. This might include transforming the response format, adding or removing headers, caching responses to improve performance, or aggregating responses from multiple backend services into a single response for the client.
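Two of these response-side steps, header removal and caching, are illustrated in the sketch below. It assumes a simple time-to-live (TTL) cache keyed by path and a hypothetical set of internal headers to strip; real gateways also take the HTTP method, query string, and cache-control headers into account.

```python
import time
from typing import Callable, Dict, Optional, Tuple

Response = Tuple[int, Dict[str, str], str]  # (status, headers, body)

# Hypothetical headers that should not leak to external clients.
INTERNAL_HEADERS = {"x-internal-trace", "server"}


class ResponseCache:
    """Cache successful responses per path for `ttl_seconds`."""

    def __init__(self, ttl_seconds: float = 30.0) -> None:
        self.ttl = ttl_seconds
        self._store: Dict[str, Tuple[float, Response]] = {}

    def fetch(
        self,
        path: str,
        call_backend: Callable[[str], Response],
        now: Optional[float] = None,
    ) -> Response:
        now = time.monotonic() if now is None else now
        cached = self._store.get(path)
        if cached is not None and now - cached[0] < self.ttl:
            return cached[1]  # cache hit: the backend is not called
        status, headers, body = call_backend(path)
        # Strip internal headers before the response leaves the gateway.
        cleaned = {k: v for k, v in headers.items() if k.lower() not in INTERNAL_HEADERS}
        response = (status, cleaned, body)
        if status == 200:
            self._store[path] = (now, response)  # only cache successful responses
        return response
```

Repeated calls to `fetch` for the same path within the TTL return the stored response, so the backend sees only the first request in each window.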

The architecture of API gateways

Now that we've looked closely at how the gateways work, we also want to look a bit deeper at how they fit into the architecture of a modern API service. Overall, an API gateway is just one component within an API platform or API management setup, and understanding how these pieces fit together is crucial for implementing a resilient and scalable API solution.

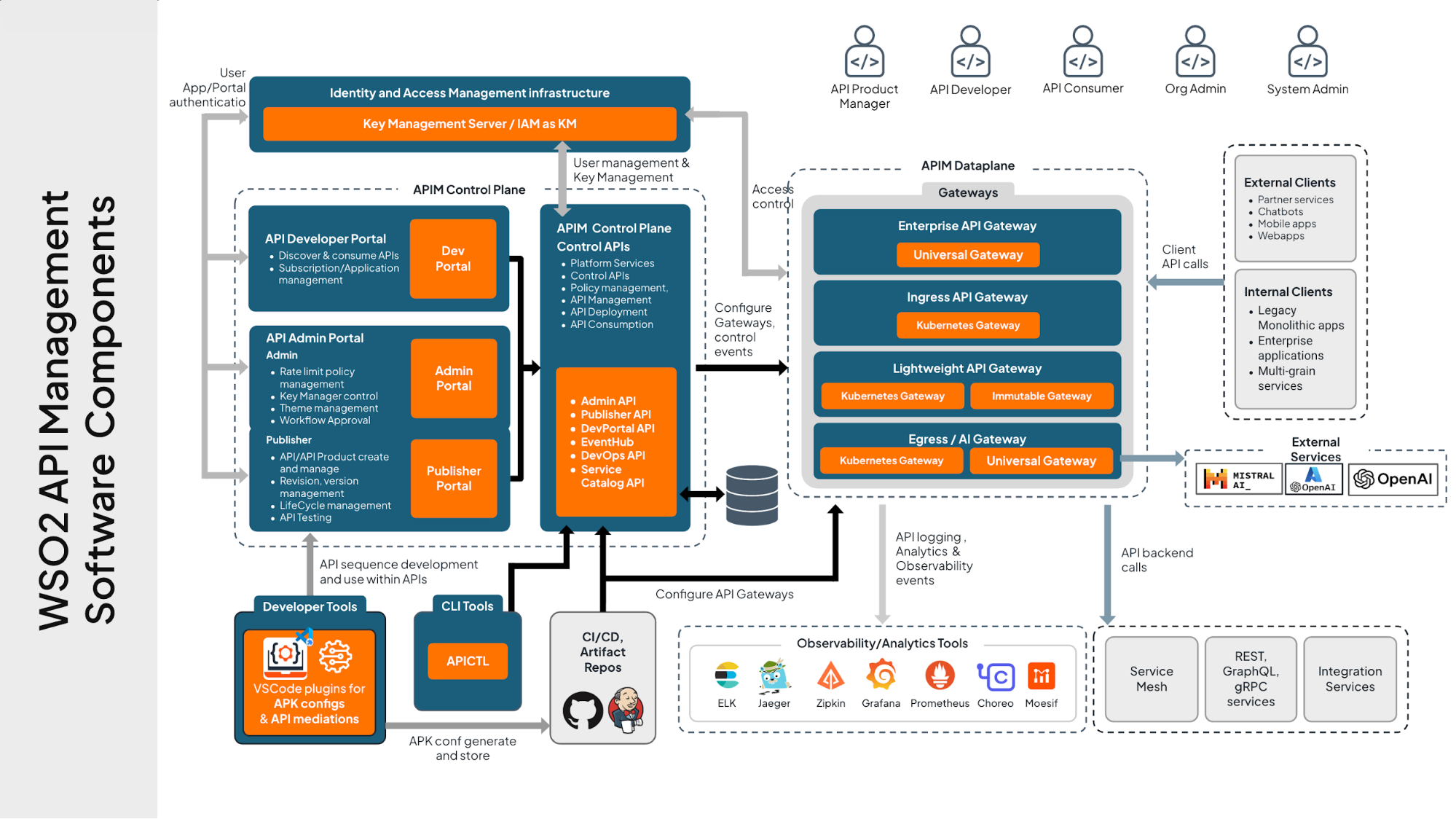

Figure 2: WSO2 API management detailed architecture

The data plane

The API gateway itself is what we usually refer to as the data plane. This is where the actual runtime traffic flows and where most of the real-time work happens. The data plane handles request processing, policy enforcement, traffic routing, security validation, and all the other operations we discussed earlier. It's optimized for speed and throughput because it sits directly in the path of every API call.

In production environments, you'll typically run multiple instances of the data plane for high availability and load distribution. Modern API gateways like WSO2 API Gateway are designed to scale horizontally, meaning you can add more gateway instances as your traffic grows.

The control plane

The control plane is where you configure and manage the data plane. This is typically a separate component that provides administrative interfaces for defining API policies, configuring routes, setting up authentication mechanisms, and monitoring API traffic. The control plane includes management APIs, admin portals, and developer portals that allow different stakeholders to interact with the platform.

Here's what typically lives in the control plane:

Configuration management: Where you define your APIs, set up routing rules, configure security policies, and establish rate limits. Changes made here are pushed to the data plane instances, often without requiring downtime.

Developer portal: Where API consumers can discover available APIs, read documentation, obtain credentials, and monitor their usage. This is crucial for API adoption.

Admin portal: Provides tools for monitoring API traffic, analyzing usage patterns, managing user access, and responding to issues.

Analytics and monitoring: Collects metrics and logs from the data plane, giving you insights into API performance, error rates, latency, and usage patterns.

In enterprise scenarios, API gateway architectures often span multiple regions or even hybrid cloud and on-premises environments. The control plane typically runs in a primary location and manages data plane instances deployed across different regions. This allows you to serve API traffic from locations closest to your users while maintaining centralized control.
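The relationship between the two planes can be pictured as a control plane publishing versioned configuration to every data plane instance. The sketch below is purely illustrative: the `GatewayConfig`, `DataPlaneInstance`, and `ControlPlane` classes are hypothetical and do not reflect how any particular product, including WSO2's, synchronizes configuration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class GatewayConfig:
    version: int
    routes: Dict[str, str]
    rate_limit_per_minute: int


@dataclass
class DataPlaneInstance:
    region: str
    config: GatewayConfig

    def apply(self, new_config: GatewayConfig) -> bool:
        """Swap in a newer config; ignore stale or duplicate pushes."""
        if new_config.version <= self.config.version:
            return False
        # Replacing the reference means no restart is needed in this sketch.
        self.config = new_config
        return True


@dataclass
class ControlPlane:
    current: GatewayConfig
    instances: List[DataPlaneInstance] = field(default_factory=list)

    def publish(self, routes: Dict[str, str], rate_limit_per_minute: int) -> GatewayConfig:
        """Create the next config version and push it to every instance."""
        self.current = GatewayConfig(
            self.current.version + 1, dict(routes), rate_limit_per_minute
        )
        for instance in self.instances:
            instance.apply(self.current)
        return self.current
```

Versioning the configuration lets each instance reject out-of-order pushes, which matters when instances in different regions receive updates at different times.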

API gateway benefits

Implementing an API gateway delivers benefits that impact many parts of the software development lifecycle (SDLC) and operations. Some areas where the biggest benefits are seen include your team's development velocity, operational costs, and security posture. Here are some of the most talked-about benefits of using an API gateway:

Simplified architecture: Instead of every backend service implementing its own security, rate limiting, and monitoring, the gateway centralizes these concerns. This is especially valuable in microservice architectures where you might have dozens of services. When you need to add a new authentication method, you configure it once rather than updating every service.

Enhanced security: By centralizing authentication and authorization, you create a single enforcement point that's easier to audit and secure. The gateway provides a security buffer between the public internet and your internal services, validating and sanitizing client requests before they reach your backend.

Improved performance: By using a gateway to implement response caching, teams can eliminate a large share of backend requests for frequently accessed data, in some cases as much as 80-90%. SSL/TLS termination at the gateway offloads expensive cryptographic operations from your backend services. Load balancing with automatic failover helps your APIs remain responsive under heavy load.

Better developer experience: A developer portal provides a single place to discover APIs, read documentation, and obtain credentials. The gateway's consistent interface makes APIs easier to consume regardless of the underlying technology stack.

Faster time to market: By providing ready-made solutions for authentication, rate limiting, and monitoring, an API gateway accelerates API development. New APIs can be deployed behind the gateway in minutes rather than days, and developers can focus on functionality instead of the nuances of scalability and security.

Cost optimization: While a gateway requires infrastructure investment, it reduces overall costs by cutting development time on infrastructure code. The visibility into API usage helps identify optimization opportunities and prevents over-provisioning.

As gateways continue to evolve, the list of benefits continues to grow. The benefits of an API gateway help both the technical side and the business side of an API-based business. With the latest wave of technology, especially AI, API gateways are becoming even more critical.

API gateway future trends

The API gateway landscape has continually evolved. SOAP, REST, gRPC, and even GraphQL have forced API gateways to adapt to and support the latest technology. Within the past few years, this has been further accelerated by the proliferation of AI APIs and the critical data and systems that they interact with. Here are the key trends shaping the future of API gateways and API management as a whole:

AI-native API management: As AI becomes central to applications, gateways are evolving to support AI-specific workloads (usually in the form of an AI gateway). This includes token counting for LLM APIs, semantic caching of similar queries, and specialized rate limiting based on computational cost rather than just request count. We're also seeing gateways incorporate AI capabilities themselves, using machine learning to detect anomalous traffic patterns and automatically optimize routing.

GraphQL and API federation: GraphQL adoption is still growing (albeit more slowly than a few years ago), and modern gateways are adding comprehensive support including query validation and the ability to federate multiple GraphQL services behind a unified schema. This lets different teams own different parts of the API while presenting a coherent interface to consumers.

Service mesh convergence: In Kubernetes environments, the line between API gateways and service meshes continues to blur. The future likely involves tighter integration of these technologies for end-to-end observability and policy enforcement, with most gateway companies offering some type of support.

AI gateway vs. API gateway

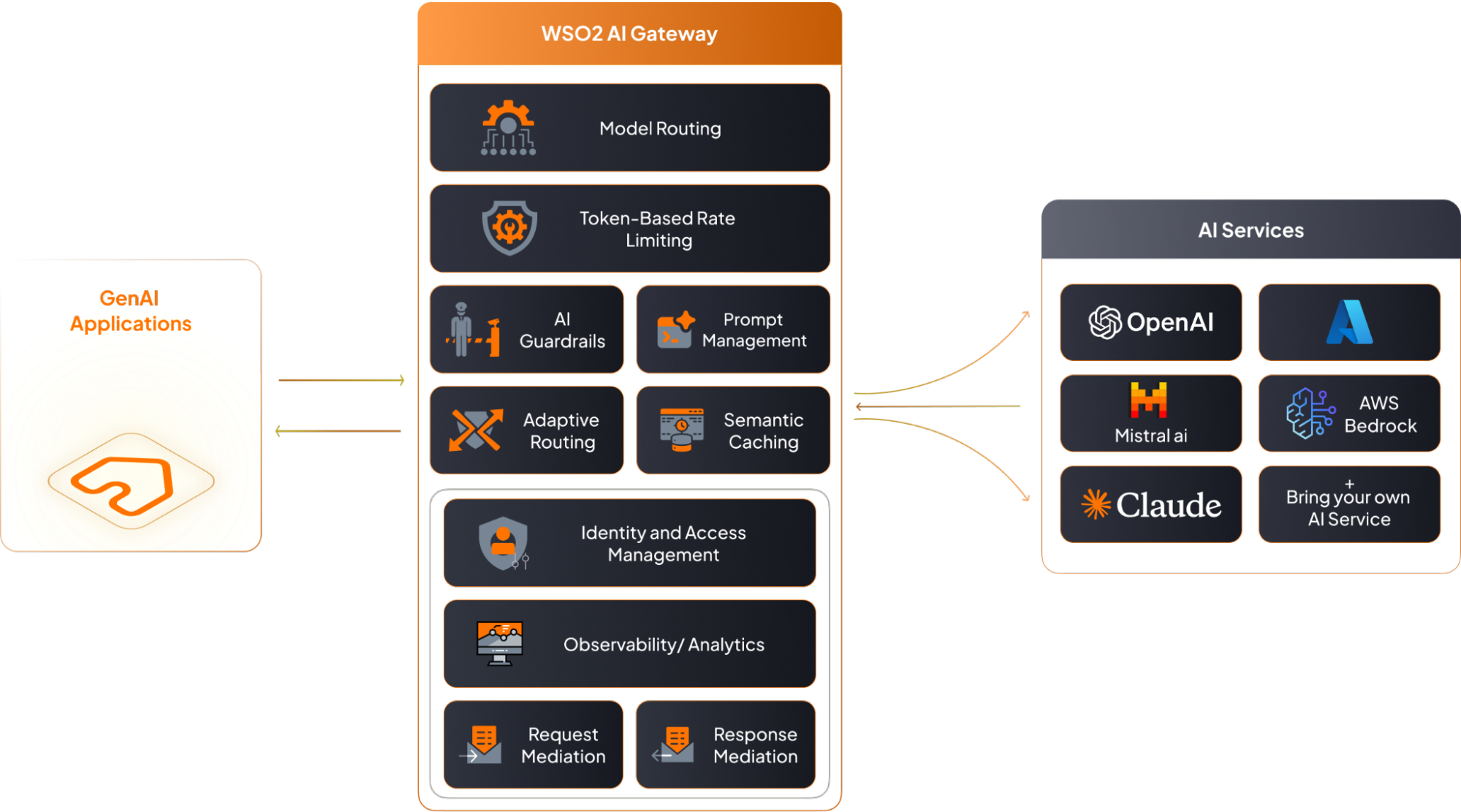

As more AI APIs pop up, traditional API gateway users are quickly seeing that more particular tech is needed to manage these APIs. This is where many have turned to AI gateways to handle this particular use case, and it's worth clarifying how they relate to traditional API gateways. An AI gateway is essentially a specialized API gateway optimized for managing AI and machine learning model APIs, particularly large language models like GPT-4, Claude, or Gemini.

Figure 3: WSO2 AI Gateway architecture

AI gateways share core functionality with traditional gateways, such as routing, authentication, and rate limiting, but they add specialized features like token-based rate limiting (since LLM providers typically charge per token, not per API call), prompt injection detection, semantic caching, and model routing with automatic fallbacks.
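Two of these features, token-based limiting and model fallback, are sketched below under simplifying assumptions. The `TokenBudget` class, the word-count token estimate, and the `complete_with_fallback` helper are hypothetical; a real AI gateway would use the provider's tokenizer or the usage figures the provider reports, and would reset budgets per time window.

```python
from typing import Callable, Dict, List, Optional


class TokenBudget:
    """Track LLM tokens spent per client instead of counting requests."""

    def __init__(self, tokens_per_window: int) -> None:
        self.limit = tokens_per_window
        self.used: Dict[str, int] = {}

    def try_spend(self, client_id: str, tokens: int) -> bool:
        """Record the spend and return True, or return False if it exceeds the budget."""
        used = self.used.get(client_id, 0)
        if used + tokens > self.limit:
            return False
        self.used[client_id] = used + tokens
        return True


def estimate_tokens(prompt: str) -> int:
    """Crude placeholder: roughly one token per whitespace-separated word."""
    return max(1, len(prompt.split()))


def complete_with_fallback(prompt: str, models: List[Callable[[str], str]]) -> str:
    """Try each model in order and return the first successful completion."""
    last_error: Optional[Exception] = None
    for call_model in models:
        try:
            return call_model(prompt)
        except Exception as error:  # any provider failure triggers the fallback
            last_error = error
    raise RuntimeError("all models failed") from last_error
```

The key difference from request-based limiting is that one long prompt can consume as much budget as many short ones, which tracks cost more closely when a provider bills per token.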

This raises the question: if you're developing or consuming AI APIs, should you use an AI gateway or a traditional API gateway? The answer, as with most technical decisions, depends on your use case. Most applications use a mix of traditional APIs and AI APIs. Many organizations find success using an enterprise API gateway that has added AI-specific capabilities. Modern platforms like WSO2 API Manager offer both a traditional API gateway and an AI gateway within a single platform, helping teams avoid the operational complexity of managing separate gateways.

Conclusion

API gateways have evolved from simple reverse proxies into sophisticated platforms essential for modern API-first architectures. They provide the security, scalability, and developer experience features that turn internal services into production-ready APIs.

Whether you're building a startup application or managing enterprise-scale platforms, implementing an API gateway delivers immediate benefits: centralized security enforcement, protection against traffic spikes, detailed analytics, and a better experience for API consumers. The alternative—implementing these capabilities in each service—leads to duplicated effort and higher maintenance costs.

As APIs continue to be the foundation of modern software systems, the API gateway remains a critical component for delivering reliable, secure, and performant experiences. The investment pays dividends throughout the API lifecycle, from initial development through scaling to millions of requests per day.

API gateway FAQs

What's the difference between an API gateway and a load balancer?

While both distribute traffic, they serve different purposes. A load balancer focuses purely on distributing requests across multiple instances of the same service. An API gateway provides much broader capabilities including authentication, rate limiting, protocol translation, and request transformation. Think of a load balancer as focused on traffic distribution, while an API gateway handles security, management, and transformation.

Do I need an API gateway for a microservices architecture?

While not technically required, it's highly recommended. Without one, clients need to know about every microservice endpoint, authentication must be implemented in every service, and cross-cutting concerns get duplicated across your codebase. An API gateway provides a unified entry point that simplifies client integration and lets you evolve your backend without breaking existing clients.

How does an API gateway impact API latency?

An API gateway adds a small amount of latency, typically 1-10 milliseconds depending on the policies being applied. However, this is usually negligible compared to backend processing time, and gateways can actually reduce overall latency through response caching and efficient routing. The operational benefits far outweigh the minimal latency impact.
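A quick back-of-the-envelope calculation shows why caching can offset the gateway's overhead. The function below is a simplified model that assumes cache hits are served entirely by the gateway; the numbers used with it are made up for illustration, not measurements.

```python
def expected_latency_ms(
    gateway_ms: float, backend_ms: float, cache_hit_ratio: float = 0.0
) -> float:
    """Average latency when cache hits skip the backend entirely."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    # Every request pays the gateway cost; only cache misses pay the backend cost.
    return gateway_ms + (1.0 - cache_hit_ratio) * backend_ms
```

Assuming 5 ms of gateway overhead and a 100 ms backend, an uncached request averages 105 ms through the gateway versus 100 ms direct. With half of the requests served from cache, the average drops to 55 ms.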

What's the difference between an API gateway and API management?

An API gateway is the runtime component that handles actual API traffic. API management is the broader platform that includes the gateway plus administrative tools, developer portals, analytics, and lifecycle management. Think of the gateway as the data plane (where requests flow) and API management as the complete solution including the control plane (where you configure everything).

Can I use multiple API gateways together?

Yes, this is common in large organizations. You might use an external-facing gateway for public APIs and an internal gateway for service-to-service communication, or deploy gateways in multiple regions for better performance. The key is ensuring consistent policies and having proper monitoring across all gateways.

Are API gateways only for REST APIs?

No, modern gateways support multiple protocols including GraphQL, gRPC, WebSocket, SOAP, and custom protocols. Many also provide protocol translation, allowing clients using one protocol to interact with backend services using a different protocol.

What happens if the API gateway fails?

Production deployments should run multiple gateway instances for high availability. If one instance fails, traffic automatically routes to healthy instances. Most enterprise deployments run gateways across multiple availability zones or regions. Gateways are generally designed to be stateless, so any instance can handle any request with little or no coordination with other instances.