Adaptive Scaling of Microgateways on Kubernetes

As businesses rely increasingly on Kubernetes, the need to scale services according to business demand becomes more important. Traditional methods such as scaling on CPU and memory usage remain important, but expressing business metrics in terms of CPU and memory isn’t always straightforward. In this light, auto-scaling based on custom metrics in Kubernetes can be immensely helpful.

With support for custom metrics, services can be scaled dynamically based on the request count or the error count of a particular service. This helps services respond smoothly to sudden bursts and traffic variations, ensuring business continuity while allowing resources to be allocated optimally among different services.
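As a rough illustration of scaling on request count, the Kubernetes Horizontal Pod Autoscaler documentation describes the replica calculation as ceil(currentReplicas × currentMetricValue / desiredMetricValue). The Python sketch below applies that rule to an average per-pod request rate. The function name, the sample numbers, and the simplified tolerance check are assumptions made for illustration; this is not the Microgateway's own logic.

```python
import math


def desired_replicas(current_replicas: int,
                     current_value: float,
                     target_value: float,
                     tolerance: float = 0.1) -> int:
    """Estimate the replica count from a per-pod metric (hypothetical helper).

    current_value: observed average of the metric per pod (e.g. requests/s).
    target_value:  desired average of the metric per pod.
    tolerance:     ratio band around 1.0 in which no scaling happens
                   (0.1 mirrors the documented Kubernetes default).
    """
    if target_value <= 0:
        raise ValueError("target_value must be positive")

    ratio = current_value / target_value

    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas

    # Scale proportionally, rounding up, and never drop below one replica.
    return max(1, math.ceil(current_replicas * ratio))


if __name__ == "__main__":
    # 4 pods each seeing 150 requests/s against a target of 100 requests/s.
    print(desired_replicas(4, 150, 100))
    # Traffic falls to 50 requests/s per pod.
    print(desired_replicas(4, 50, 100))
```

With these sample numbers, a burst that pushes each of four pods to 150 requests per second against a target of 100 yields six replicas, and a drop to 50 requests per second yields two. A real autoscaler also applies minimum and maximum replica bounds and stabilization windows, which this sketch leaves out.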

With its new release, the WSO2 Microgateway supports scaling based on custom metrics, enabling enterprises to scale the runtimes based on request count, error rate, requests in the pipeline and more.

During the webinar we will cover:

- The importance of selecting business-related metrics

- Custom metric support in WSO2 Microgateway, explained in detail

- A demo of auto-scaling WSO2 Microgateway based on request count

Recommended for:

- DevOps Engineers

- Full-stack Developers

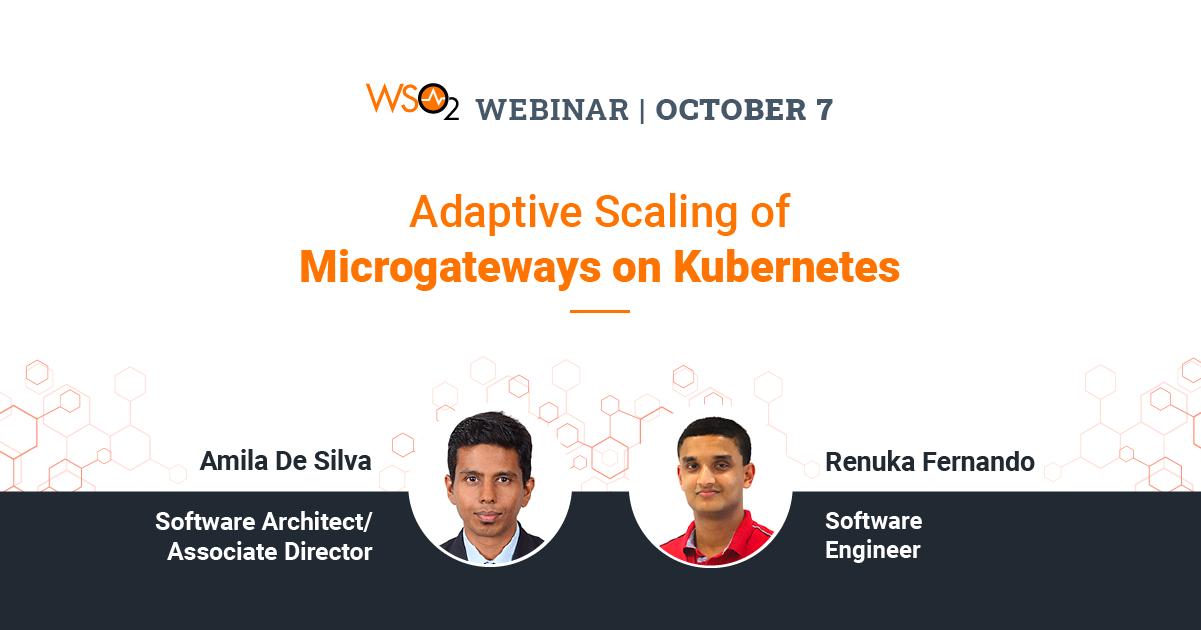

Presented by

Amila De Silva

Software Architect/Associate Director

Amila De Silva is a Software Architect/Associate Director in the WSO2 API Manager team. He is also a member of the architecture team that oversees research and development teams and drives innovation for WSO2 products. He has been a technical consultant specializing in enterprise integration technologies and middleware solutions. Amila has also designed solutions for telcos.

Renuka Fernando

Software Engineer

Renuka Fernando is a Software Engineer in the WSO2 API Manager team. Renuka has experience working with cloud technologies such as Docker and Kubernetes. His main focus is the research and development of the WSO2 K8s API Operator.