Building AI Agents and GenAI Applications for the Enterprise

Build and scale AI agents and GenAI apps with the WSO2 Integration Platform. Its low-code and pro-code development experience and wide array of connectors let you integrate GenAI models, MCP servers, APIs, data, enterprise systems, and events without adding complexity or lock-in.

Why AI agents fail without integration

AI agents and GenAI applications don’t fail because of models; they fail because they can’t reliably access real-time data, invoke business systems, enforce policies, or operate safely at enterprise scale.

Point-to-point integrations, fragmented APIs, and unmanaged prompts create brittle workflows, security risks, and stalled innovation.

From experimental AI to production-ready workflows

Trusted context is essential

Agents require governed access to APIs, events, and enterprise data.

Orchestration is critical

Real outcomes depend on coordinated workflows, not isolated prompts.

Guardrails are mandatory

Security, governance, and observability are required in production.

Scalability is non-negotiable

Successful pilots must evolve into resilient, reusable, cost-controlled systems.

Flexibility drives success

Teams must connect agents to the models, tools, and knowledge bases that best fit their strategy.

Without an integration platform purpose-built for AI, GenAI remains stuck in proofs of concept instead of delivering business value.

Real-world AI agent and GenAI scenarios

AI-driven enterprise assistants

Agents that retrieve data, invoke APIs, access MCP tools, and trigger workflows across CRM, ERP, and other line-of-business systems.

Autonomous business flows

Agents that reason, decide, and act, executing multi-step processes with human-in-the-loop controls.

GenAI-powered applications

Customer-facing apps that combine LLMs, APIs, events, and data services to deliver personalized experiences.

Operational intelligence

Agents that monitor events, detect anomalies, and take action in real time across distributed systems.

A unified architecture for AI-native integration

Using WSO2 to build AI agents and GenAI apps

Build AI agents as governed, production-ready systems

AI agents must be orchestrated and governed within enterprise workflows. WSO2 enables teams to build agents that reason, access systems, and execute multi-step processes through structured integrations.

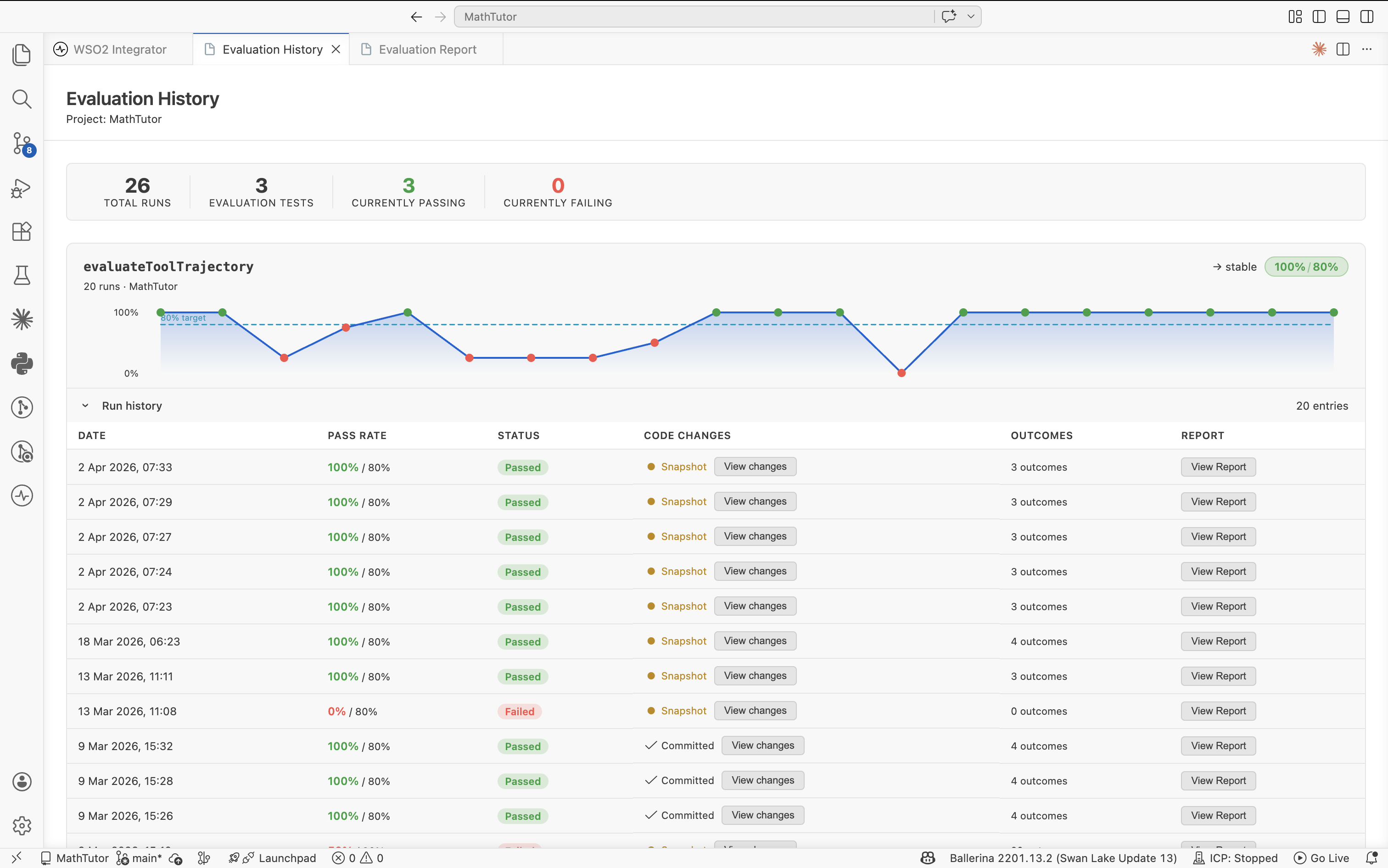

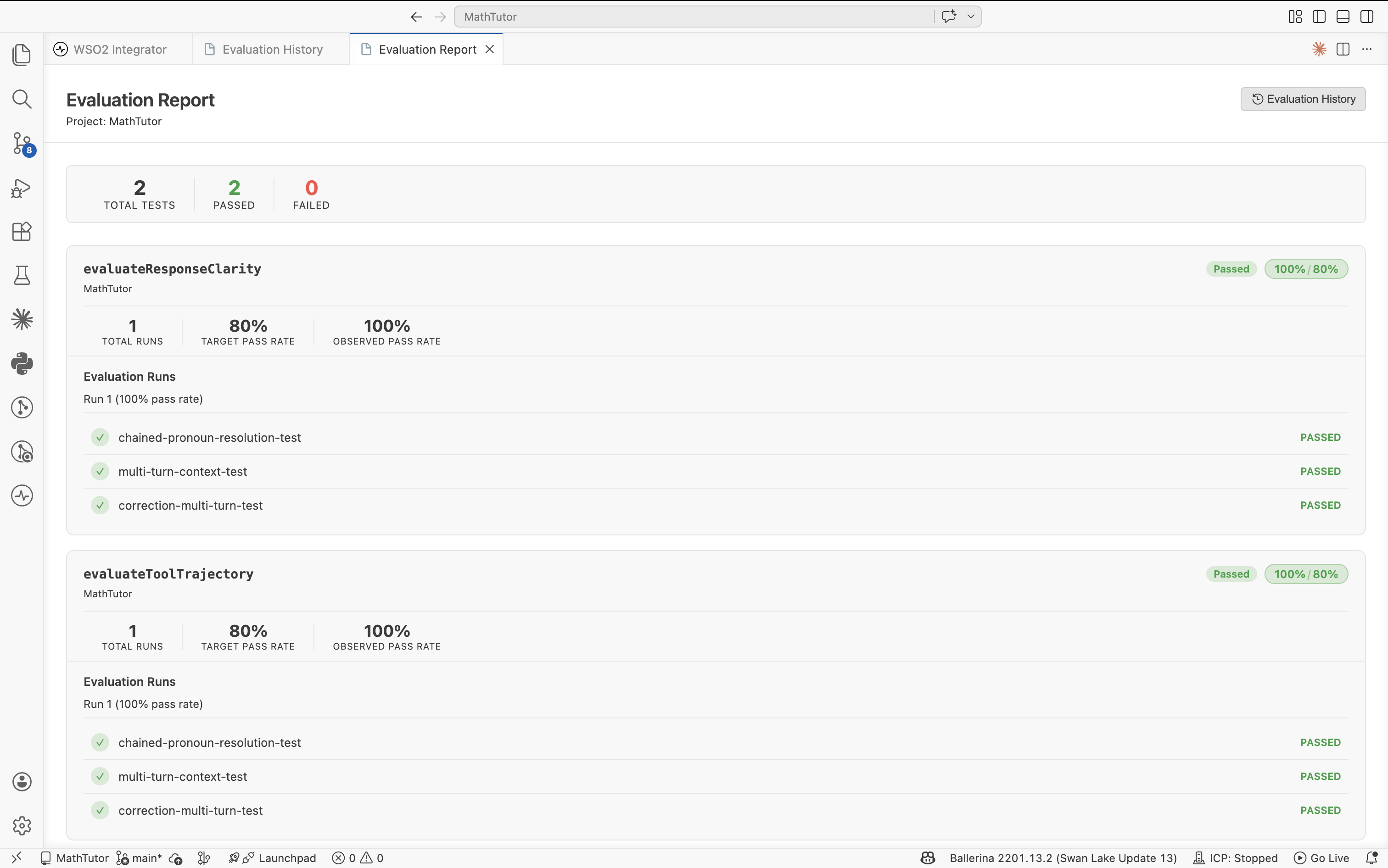

With native agent orchestration, tool creation from APIs and connectors, and real-time execution visibility, teams can move from experimental agents to secure, scalable, production-grade systems.
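To make "tool creation from APIs" and "execution visibility" concrete, here is a minimal sketch in plain Python. It is not the WSO2 or Ballerina API: `Tool`, `ToolRegistry`, and `get_customer` are hypothetical names chosen for illustration, and the CRM call is a stand-in function. The idea it shows is that an API operation is wrapped with a name, a description, and required parameters, and that every invocation is recorded so the chain of tool calls can be inspected later.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Tool:
    """A capability exposed to an agent (hypothetical shape, for illustration)."""
    name: str
    description: str
    required_params: Tuple[str, ...]
    handler: Callable[..., Any]


class ToolRegistry:
    """Holds registered tools and records each invocation in order."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}
        self.trace: List[dict] = []

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def invoke(self, name: str, **args: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            raise KeyError(f"unknown tool: {name}")
        # Reject calls that omit a required parameter before touching the API.
        missing = [p for p in tool.required_params if p not in args]
        if missing:
            raise ValueError(f"missing parameters for {name}: {missing}")
        result = tool.handler(**args)
        self.trace.append({"tool": name, "args": args, "result": result})
        return result


def get_customer(customer_id: str) -> dict:
    """Stand-in for a real CRM API call (assumption: returns a small record)."""
    return {"id": customer_id, "tier": "gold"}


registry = ToolRegistry()
registry.register(
    Tool("get_customer", "Look up a customer record by ID.", ("customer_id",), get_customer)
)
```

An agent loop would call `registry.invoke("get_customer", customer_id="c-42")` and the `trace` list would then hold one entry describing that call, which is the raw material a visualization of tool invocation chains could be built from.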

Create GenAI applications that combine models, data, and workflows

GenAI applications depend on seamless integration with enterprise data, APIs, and events. WSO2 enables teams to build apps that combine LLMs with RAG pipelines, structured data, and business logic for context-aware experiences.

Support for multiple model providers, vector databases, and reusable integration services helps ensure that GenAI apps are fully integrated and enterprise-ready, and that they deliver measurable value.

Built to orchestrate real AI systems—not experiments

AI agents are not lightweight API consumers—they are reasoning systems that require context, coordination, and controlled execution. The WSO2 Integration Platform is purpose-built to orchestrate intelligent, multi-step workflows that unify AI models, enterprise APIs, event streams, files, and data into production-ready systems.

Unlike fragmented AI stacks, WSO2 provides native support for event-driven architectures, distributed runtimes, and cloud-native deployments—ensuring agents operate reliably at scale. Built-in agent execution visualization exposes reasoning steps, tool invocation chains, and decision loops in real time, giving teams the visibility required to debug, validate, and optimize agent behavior before it impacts production.

Integration as the control plane for AI

AI agents and GenAI applications must operate through structured, governed interfaces—not ad hoc integrations. WSO2 treats integration as the control plane for AI, enabling teams to design, expose, and orchestrate services that agents can reliably consume.

Whether interacting with SaaS platforms, internal systems, event streams, or data services, integrations are defined as durable, reusable contracts. This approach eliminates brittle point-to-point logic, enforces consistency, and enables scalable orchestration across distributed environments—turning integration into a strategic layer for AI execution.
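One way to picture an integration defined as a durable, reusable contract is the sketch below, written in plain Python rather than in any WSO2 tooling. All names (`OrderLookup`, `ErpOrderLookup`, `CachedOrderLookup`, `answer_status_question`) are hypothetical. The agent-facing function depends only on the contract, so the backing system can be swapped or wrapped without touching agent logic.

```python
from typing import Dict, Protocol


class OrderLookup(Protocol):
    """The contract: anything that can report an order's status."""

    def order_status(self, order_id: str) -> str: ...


class ErpOrderLookup:
    """Stand-in for an ERP-backed implementation (an in-memory dict here)."""

    def __init__(self, orders: Dict[str, str]) -> None:
        self._orders = orders

    def order_status(self, order_id: str) -> str:
        return self._orders.get(order_id, "unknown")


class CachedOrderLookup:
    """Wraps any OrderLookup and caches results; satisfies the same contract."""

    def __init__(self, inner: OrderLookup) -> None:
        self._inner = inner
        self._cache: Dict[str, str] = {}
        self.misses = 0  # number of calls that reached the wrapped system

    def order_status(self, order_id: str) -> str:
        if order_id not in self._cache:
            self.misses += 1
            self._cache[order_id] = self._inner.order_status(order_id)
        return self._cache[order_id]


def answer_status_question(lookup: OrderLookup, order_id: str) -> str:
    """Agent-side logic: written once against the contract, not a backend."""
    return f"Order {order_id} is {lookup.order_status(order_id)}."
```

Because `answer_status_question` accepts any object that satisfies `OrderLookup`, the same call works against the direct implementation or the cached wrapper, which is the opposite of point-to-point logic tied to one system.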

One platform for rapid experimentation and deep control

Agentic systems demand both speed and precision. WSO2 unifies low-code and pro-code development into a single experience powered by the Ballerina programming language, enabling teams to move fast without sacrificing control.

Business users can visually compose workflows, while developers extend, customize, and optimize logic at the code level, all within the same platform. AI-assisted development accelerates delivery with natural language code generation, transformation recommendations, and intelligent testing support. This eliminates the trade-off between experimentation and engineering rigor.

Built-in AI governance, not bolted-on guardrails

Production AI systems require governance at their core—not as an afterthought. WSO2 embeds security, identity, and policy enforcement directly into the integration layer, treating AI agents as first-class, governed entities.

With capabilities such as agent identity integration, AI gateway-enforced guardrails, and full-spectrum observability across metrics, logs, traces, and execution flows, teams gain complete visibility and control over agent behavior. From development through production, workflows remain secure, compliant, and auditable across cloud, hybrid, and on-prem environments.
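As a rough illustration of gateway-enforced guardrails, the sketch below shows three checks a policy layer might apply before an agent's request reaches a model or tool: a per-agent tool allowlist, redaction of email addresses, and an audit record for every decision. It is plain Python under stated assumptions, not WSO2's gateway API; the `Guardrail` class, the agent ID, and the email pattern are all hypothetical and deliberately simplistic.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

# Simplified email pattern, good enough for a sketch but not for production use.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Guardrail:
    """Policy checks applied to agent traffic (hypothetical shape)."""

    allowed_tools: Dict[str, Set[str]]  # agent identity -> tools it may call
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def redact(self, text: str) -> str:
        """Mask email addresses before text leaves the governed boundary."""
        return EMAIL.sub("[REDACTED_EMAIL]", text)

    def authorize(self, agent_id: str, tool: str) -> bool:
        """Allow the call only if the tool is on this agent's allowlist."""
        allowed = tool in self.allowed_tools.get(agent_id, set())
        # Record every decision, allowed or denied, so it can be audited.
        self.audit_log.append((agent_id, tool, "allow" if allowed else "deny"))
        return allowed
```

Keying the allowlist by agent identity is what lets each agent be treated as its own governed entity: an unknown agent has an empty allowlist and is denied by default.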

Deep connectivity across AI, data, and enterprise systems

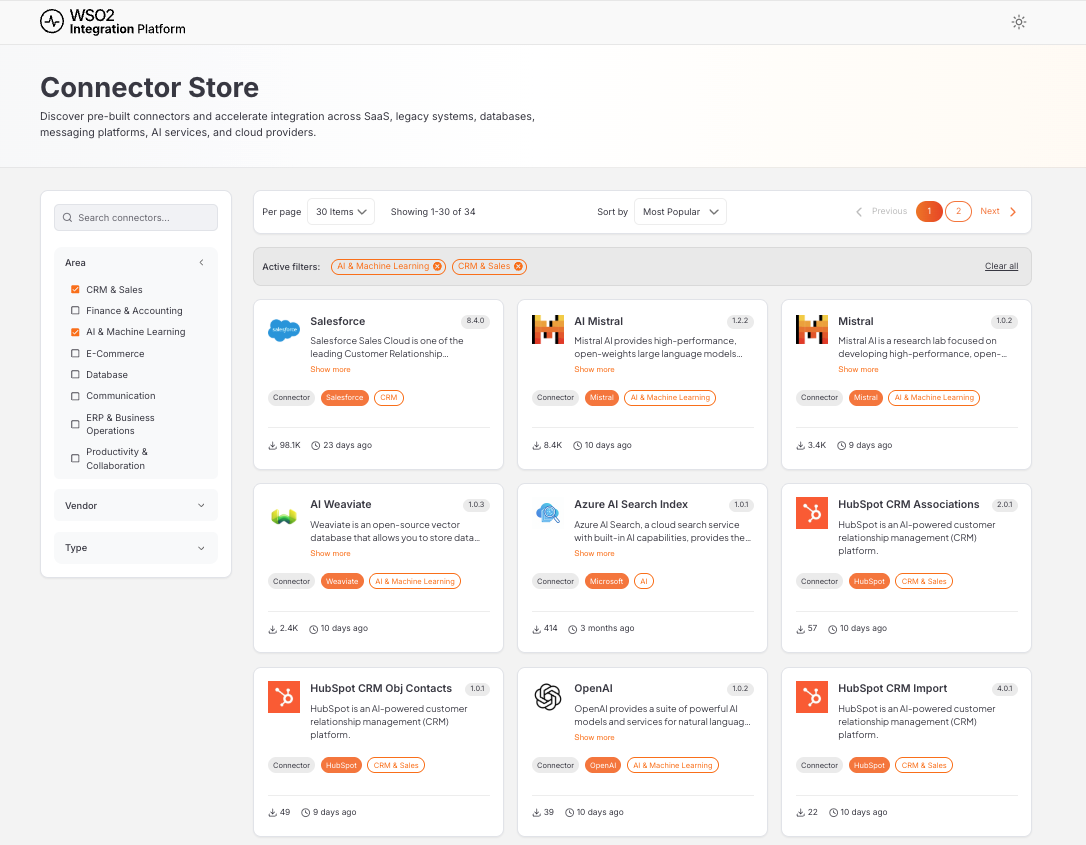

AI agents are only as effective as the systems and data they can access. WSO2 delivers comprehensive connectivity across APIs, event streams, files, databases, SaaS platforms, and enterprise systems—combined with native support for GenAI ecosystems.

Support for direct LLM interactions, RAG pipelines, multi-model providers, structured outputs, and vector databases ensures agents can combine real-time signals with historical context. Built-in connectors, reusable integration assets, and automated tooling eliminate brittle integrations and accelerate system onboarding as architectures evolve.
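For readers new to RAG pipelines, the retrieval step can be sketched in a few lines of plain Python. This is a toy under loud assumptions: `embed` uses word counts in place of a real embedding model, the in-memory list stands in for a vector database, and none of the function names come from WSO2. It shows only the core idea of ranking documents by similarity to the question and placing the best match in the prompt as context.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: List[str]) -> str:
    """Assemble the text sent to the model: retrieved context, then the question."""
    context = "\n".join(retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

In a real pipeline the embedding function and document store would be replaced by a model provider and a vector database, while the shape of the flow (embed, rank, assemble prompt) stays the same.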

Open, portable, and built to overcome vendor lock-in

AI strategies cannot be constrained by proprietary platforms. WSO2's 100% open source core ensures full transparency, portability, and architectural control across any environment.

Run anywhere—on-premises, Kubernetes, multi-cloud, or managed iPaaS—while maintaining consistent runtime behavior and developer experience. Avoid lock-in, control your roadmap, and adapt as AI technologies evolve, without being tied to a single vendor's ecosystem.

Run it your way.

The only integration technology available both as 100% open source, self-hostable software and as SaaS.

Self-hosted

Full control over your stack. Deploy directly to your own servers, bare metal, or a private cloud environment. Your data never leaves your perimeter.

- ✓ Complete data sovereignty
- ✓ Air-gapped environment support
- ✓ Kubernetes, Docker, VM, or bare metal
- ✓ Bring your own CI/CD pipeline

SaaS

Zero infrastructure to manage. WSO2 handles provisioning, upgrades, scaling, and availability. Get started in minutes.

- ✓ Run the data plane wherever you want
- ✓ Centralized control and observability
- ✓ Continuous updates with zero downtime
- ✓ Multi-region availability

Your choice of vendor shouldn't dictate where or how you deploy. Evaluate WSO2 in the environment that makes sense for you, with no constraints and no artificial limitations.